You can divide by \(5\), but not by \(0\). Matrices work in a surprisingly similar way: some matrices can be "undone," and some cannot. The key to telling the difference is a single number called the determinant. Along the way, two special matrices—the zero matrix and the identity matrix—play roles that closely match the roles of \(0\) and \(1\) in the real numbers.

When you work with real numbers, certain numbers have special jobs. The number \(0\) leaves a number unchanged when added: \(a + 0 = a\). The number \(1\) leaves a number unchanged when multiplied: \(a \cdot 1 = a\). Matrices have special versions of these ideas too.

In matrix algebra, the zero matrix is the additive identity, and the identity matrix is the multiplicative identity. These ideas are not just vocabulary. They help explain how matrix operations work, how inverses are defined, and why determinants matter.

You already know that adding real numbers is different from multiplying them. You also know that not every operation can be undone in every situation: division by \(0\) is impossible. Matrix inverses follow a similar pattern.

Matrices are rectangular arrays of numbers, and they are used to represent data, transformations, and systems of equations. Because matrices can act like machines that change vectors or points, knowing which matrix does "nothing" and which matrices can be reversed is extremely important.

A matrix can be added to another matrix only if they have the same dimensions. For example, two \(2 \times 2\) matrices can be added, but a \(2 \times 2\) matrix and a \(2 \times 3\) matrix cannot.

The zero matrix is the matrix whose entries are all \(0\). Its size matters: a \(2 \times 2\) zero matrix and a \(3 \times 3\) zero matrix are different matrices, and each can be added only to matrices of its own size.

Zero matrix: a matrix in which every entry is \(0\). For any matrix \(A\) of the same size, \(A + O = A\), where \(O\) denotes the zero matrix of that size.

Additive identity: an element that leaves another element unchanged under addition.

For example, if

\[A = \begin{bmatrix} 3 & -1 \\ 5 & 2 \end{bmatrix}, \quad O = \begin{bmatrix} 0 & 0 \\ 0 & 0 \end{bmatrix}\]

then

\[A + O = \begin{bmatrix} 3+0 & -1+0 \\ 5+0 & 2+0 \end{bmatrix} = \begin{bmatrix} 3 & -1 \\ 5 & 2 \end{bmatrix} = A\]

This is exactly like \(a + 0 = a\) for real numbers. The zero matrix does not change the matrix you add it to.

The zero matrix also connects to subtraction. If you subtract a matrix from itself, the result is the zero matrix:

\[A - A = O\]

That makes the zero matrix important in solving matrix equations. If you want to know when two matrices are equal, one strategy is to move everything to one side and check whether the result is the zero matrix.
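The addition, subtraction, and equality ideas above can be sketched in a few lines of Python. This is only an illustrative sketch: matrices are assumed to be stored as lists of rows, and the helper names (`zero_matrix`, `same_shape`, `mat_add`, `mat_sub`, `mat_equal`) are hypothetical names chosen for this example.

```python
def zero_matrix(rows, cols):
    """Return the rows x cols zero matrix as a list of rows."""
    return [[0] * cols for _ in range(rows)]


def same_shape(A, B):
    """True when A and B have the same number of rows and columns."""
    return len(A) == len(B) and all(len(ra) == len(rb) for ra, rb in zip(A, B))


def mat_add(A, B):
    """Entrywise sum; only defined for matrices of the same size."""
    if not same_shape(A, B):
        raise ValueError("matrices must have the same dimensions")
    return [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]


def mat_sub(A, B):
    """Entrywise difference; only defined for matrices of the same size."""
    if not same_shape(A, B):
        raise ValueError("matrices must have the same dimensions")
    return [[a - b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]


def mat_equal(A, B):
    """Test equality by checking whether A - B is the zero matrix."""
    if not same_shape(A, B):
        return False
    return mat_sub(A, B) == zero_matrix(len(A), len(A[0]))


A = [[3, -1], [5, 2]]
O = zero_matrix(2, 2)

print(mat_add(A, O) == A)  # True: adding O changes nothing
print(mat_sub(A, A) == O)  # True: A - A is the zero matrix
print(mat_equal(A, [[3, -1], [5, 2]]))  # True
```

Trying `mat_add(A, [[1, 2, 3], [4, 5, 6]])` raises a `ValueError` in this sketch, which mirrors the rule that a \(2 \times 2\) matrix and a \(2 \times 3\) matrix cannot be added.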

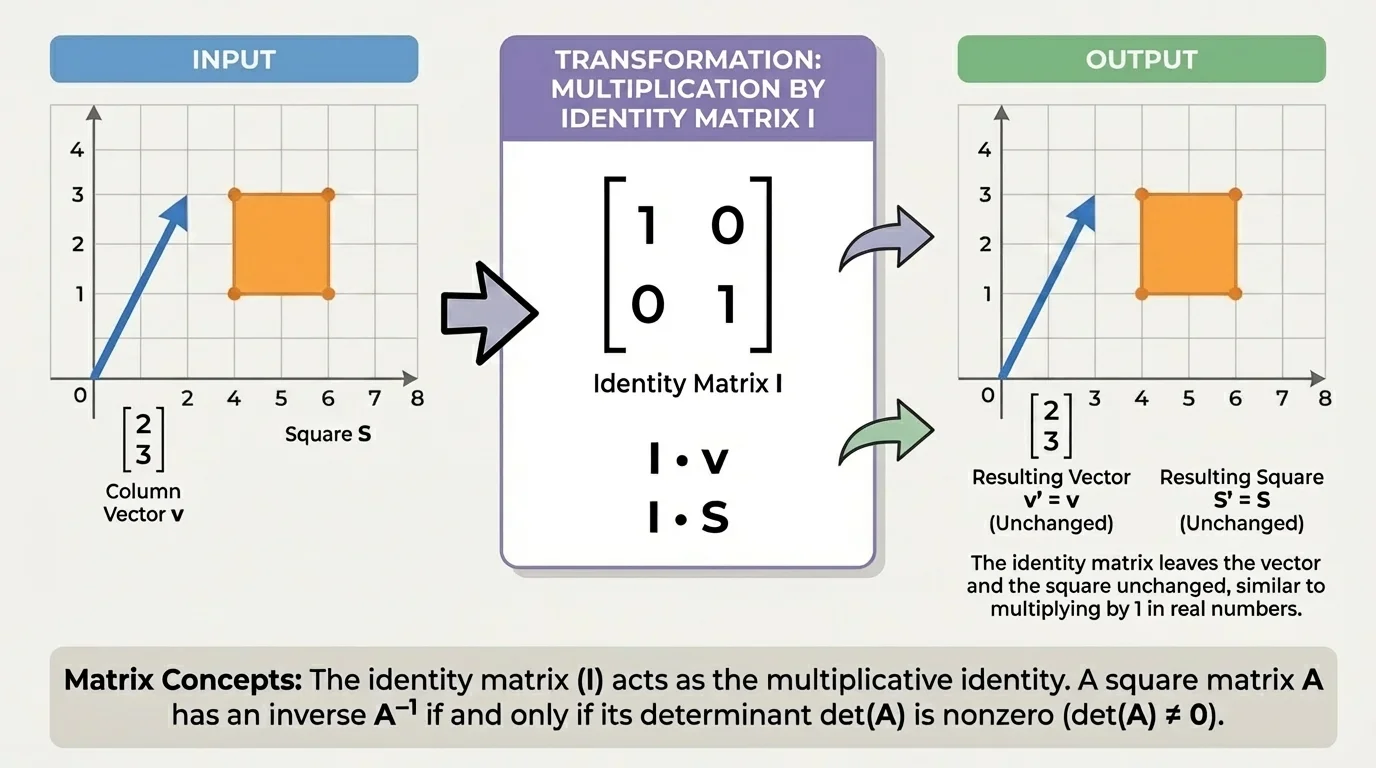

The matrix multiplication rule is more complicated than addition, but one matrix has a very simple effect: as [Figure 1] illustrates, multiplying by the identity matrix leaves a compatible matrix or vector unchanged. This makes the identity matrix the matrix version of the number \(1\).

For a \(2 \times 2\) matrix, the identity matrix is

\[I_2 = \begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix}\]

For a \(3 \times 3\) matrix, it is

\[I_3 = \begin{bmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{bmatrix}\]

In general, an identity matrix has \(1\)s on the main diagonal and \(0\)s everywhere else.

If \(A\) is a \(2 \times 2\) matrix, then

\[AI_2 = A \quad \textrm{and} \quad I_2A = A\]

For example, let

\[A = \begin{bmatrix} 4 & 7 \\ 1 & 3 \end{bmatrix}\]

Then

\[AI_2 = \begin{bmatrix} 4 & 7 \\ 1 & 3 \end{bmatrix}\begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix} = \begin{bmatrix} 4 & 7 \\ 1 & 3 \end{bmatrix}\]

and

\[I_2A = \begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix}\begin{bmatrix} 4 & 7 \\ 1 & 3 \end{bmatrix} = \begin{bmatrix} 4 & 7 \\ 1 & 3 \end{bmatrix}\]

The identity matrix matters because it defines what it means for a matrix to have a multiplicative inverse. Just as a real number \(a \neq 0\) has an inverse \(a^{-1}\) satisfying \(aa^{-1} = 1\), a square matrix \(A\) has an inverse if there is a matrix \(A^{-1}\) such that

\[AA^{-1} = I \quad \textrm{and} \quad A^{-1}A = I\]

Notice something important: the identity matrix must have the correct size. A \(2 \times 2\) matrix uses \(I_2\), a \(3 \times 3\) matrix uses \(I_3\), and so on.

Why the identity matrix works

Each row of the identity matrix picks out exactly one entry from the matrix or vector being multiplied. The \(1\)s keep the matching entries, and the \(0\)s remove everything else. That is why the result stays unchanged.
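The row-by-row picture above can be checked with a short Python sketch. It assumes matrices are stored as lists of rows; `identity` and `mat_mul` are hypothetical helper names, and the column vector `v` is an illustrative value that does not come from the lesson.

```python
def identity(n):
    """Return the n x n identity matrix: 1s on the main diagonal, 0s elsewhere."""
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]


def mat_mul(A, B):
    """Row-by-column product; needs (columns of A) == (rows of B)."""
    if any(len(row) != len(B) for row in A):
        raise ValueError("columns of A must match rows of B")
    return [
        [sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
        for i in range(len(A))
    ]


A = [[4, 7], [1, 3]]
I2 = identity(2)
v = [[2], [9]]  # a 2 x 1 column vector, chosen only for illustration

print(mat_mul(A, I2) == A)  # True
print(mat_mul(I2, A) == A)  # True
print(mat_mul(I2, v) == v)  # True: each row of I2 keeps exactly one entry of v
```

In the product `mat_mul(I2, v)`, the first row `[1, 0]` computes \(1 \cdot 2 + 0 \cdot 9 = 2\) and the second row `[0, 1]` computes \(0 \cdot 2 + 1 \cdot 9 = 9\), so each row keeps one entry and discards the other.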

Although the zero and identity matrices act like \(0\) and \(1\), matrices are not exactly like real numbers. One major difference is that multiplication is commutative for real numbers but usually not for matrices.

For real numbers, \(ab = ba\). For matrices, it is possible that \(AB\) exists, \(BA\) exists, and \(AB \neq BA\). In some cases one product may even exist while the other does not.
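A small Python sketch makes both situations concrete. The matrices `A`, `B`, `P`, and `Q` below are illustrative values rather than examples from the lesson, and `mat_mul` is a hypothetical helper that assumes matrices are stored as lists of rows.

```python
def mat_mul(A, B):
    """Row-by-column product; needs (columns of A) == (rows of B)."""
    if any(len(row) != len(B) for row in A):
        raise ValueError("columns of A must match rows of B")
    return [
        [sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
        for i in range(len(A))
    ]


# Case 1: both products exist but differ.
A = [[1, 2], [3, 4]]
B = [[0, 1], [1, 0]]
print(mat_mul(A, B))  # [[2, 1], [4, 3]]  (columns of A swapped)
print(mat_mul(B, A))  # [[3, 4], [1, 2]]  (rows of A swapped)

# Case 2: one product exists and the other does not.
P = [[1, 2], [3, 4]]        # 2 x 2
Q = [[1, 0, 2], [0, 1, 3]]  # 2 x 3
print(mat_mul(P, Q))        # [[1, 2, 8], [3, 4, 18]], a 2 x 3 matrix
try:
    mat_mul(Q, P)           # (2 x 3)(2 x 2): inner sizes 3 and 2 do not match
except ValueError as err:
    print("QP is undefined:", err)
```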

Another difference is that only square matrices can have multiplicative inverses. A non-square matrix cannot have a two-sided inverse in the usual sense, because the identity matrices needed on the left and on the right would have different sizes.

Also, not every square matrix has an inverse. That is where the determinant becomes powerful.

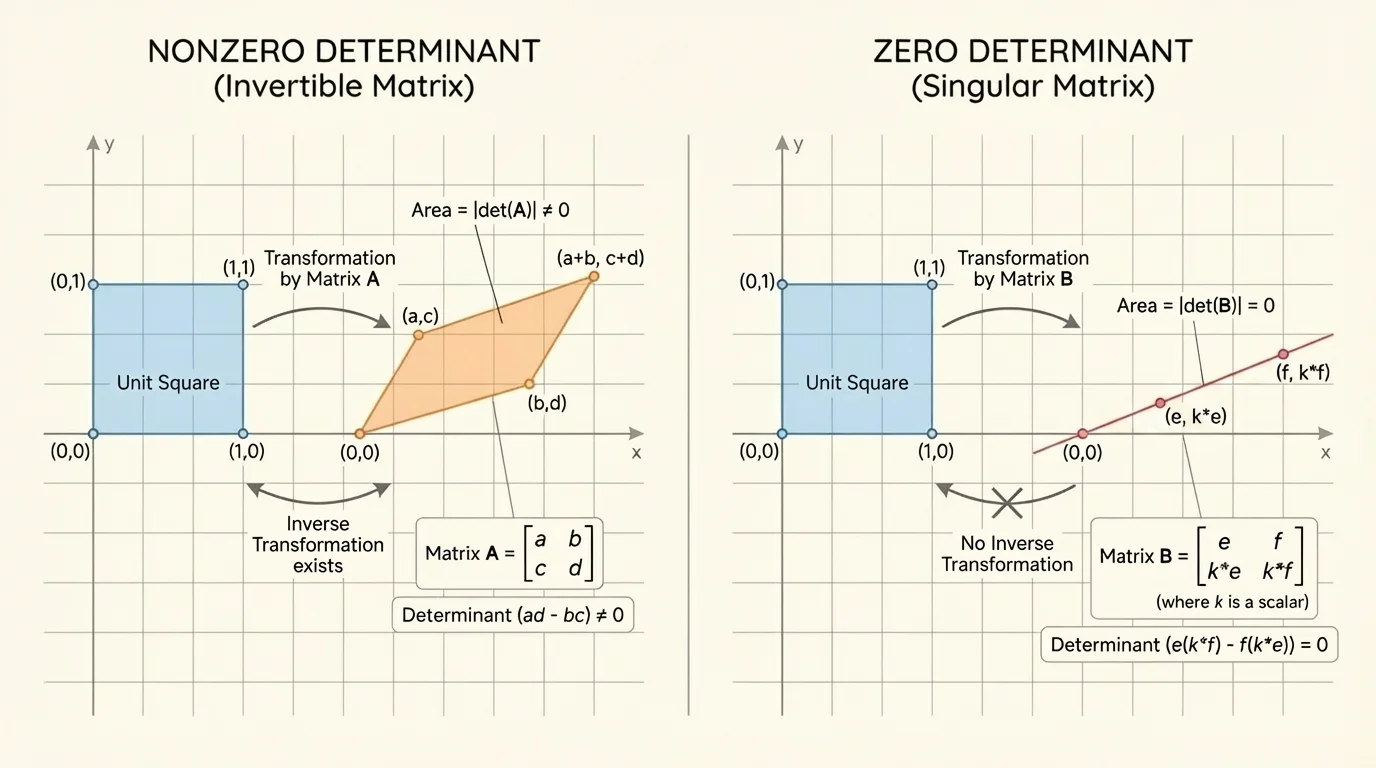

A determinant is a single number associated with a square matrix. It helps measure whether the matrix transformation stretches space, flips it, or collapses it. Geometrically, [Figure 2] shows that a nonzero determinant keeps area from collapsing completely, while a determinant of \(0\) collapses a region into a lower-dimensional shape.

For a \(2 \times 2\) matrix

\[A = \begin{bmatrix} a & b \\ c & d \end{bmatrix}\]

the determinant is

\[\det(A) = ad - bc\]

The key fact is one of the most important ideas in matrix algebra:

\[\det(A) \neq 0 \quad \textrm{if and only if} \quad A \textrm{ has a multiplicative inverse}\]

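The phrase if and only if means the statement works in both directions: if \(\det(A) \neq 0\), then \(A\) has an inverse, and if \(A\) has an inverse, then \(\det(A) \neq 0\).

If \(\det(A) = 0\), then the matrix is called a singular matrix, meaning it has no inverse.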

This is similar to real numbers. A real number has a multiplicative inverse exactly when it is not \(0\). For matrices, a square matrix has a multiplicative inverse exactly when its determinant is not \(0\).

For larger square matrices, computing determinants takes more work, but the same idea remains true: a nonzero determinant means the matrix is invertible.
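The determinant test can be sketched in Python as follows. The \(2 \times 2\) case uses the \(ad - bc\) formula above. For larger matrices this sketch uses cofactor expansion along the first row, a standard method that the lesson itself does not cover, so treat that part as an added assumption. The function names `det` and `is_invertible` and the \(3 \times 3\) matrix `M` are hypothetical, and exact integer entries are assumed so that the comparison with \(0\) is reliable.

```python
def det(M):
    """Determinant of a square matrix given as a list of rows."""
    n = len(M)
    if any(len(row) != n for row in M):
        raise ValueError("determinant is only defined for square matrices")
    if n == 1:
        return M[0][0]
    if n == 2:
        # The ad - bc formula for a 2 x 2 matrix.
        return M[0][0] * M[1][1] - M[0][1] * M[1][0]
    # Assumption: cofactor expansion along the first row for n >= 3.
    total = 0
    for j in range(n):
        minor = [row[:j] + row[j + 1:] for row in M[1:]]
        total += (-1) ** j * M[0][j] * det(minor)
    return total


def is_invertible(M):
    """A square matrix is invertible exactly when its determinant is nonzero."""
    return det(M) != 0


print(det([[4, 1], [2, 3]]))            # 10
print(is_invertible([[4, 1], [2, 3]]))  # True
print(det([[2, 4], [1, 2]]))            # 0
print(is_invertible([[2, 4], [1, 2]]))  # False

M = [[1, 2, 3], [0, 1, 4], [5, 6, 0]]   # illustrative 3 x 3 matrix
print(det(M))                           # 1
```

With floating-point entries, a determinant that should be exactly \(0\) may come out as a tiny nonzero number, so an exact `!= 0` test like this one is best kept to integers or fractions.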

For a \(2 \times 2\) matrix, there is a direct formula for the inverse. If

\[A = \begin{bmatrix} a & b \\ c & d \end{bmatrix}\]

and \(ad - bc \neq 0\), then

\[A^{-1} = \frac{1}{ad - bc}\begin{bmatrix} d & -b \\ -c & a \end{bmatrix}\]

This formula only works when \(ad - bc \neq 0\). If \(ad - bc = 0\), the fraction would involve division by \(0\), and the inverse does not exist.

A matrix and its inverse undo each other. In computer graphics, this lets software rotate, stretch, or shear an image and then restore it exactly—if the transformation matrix is invertible.

To check whether a matrix is really an inverse, multiply the matrix and the proposed inverse. If the product is the identity matrix, the inverse is correct.
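As a rough sketch, the \(2 \times 2\) inverse formula and the multiplication check might look like this in Python. The names `inverse_2x2` and `mat_mul` are hypothetical, the entries are assumed to be integers, and `fractions.Fraction` from the standard library keeps the arithmetic exact.

```python
from fractions import Fraction


def mat_mul(A, B):
    """Row-by-column product; needs (columns of A) == (rows of B)."""
    if any(len(row) != len(B) for row in A):
        raise ValueError("columns of A must match rows of B")
    return [
        [sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
        for i in range(len(A))
    ]


def inverse_2x2(M):
    """Inverse of [[a, b], [c, d]] via (1 / (ad - bc)) * [[d, -b], [-c, a]]."""
    (a, b), (c, d) = M
    determinant = a * d - b * c
    if determinant == 0:
        raise ValueError("determinant is 0, so no inverse exists")
    scale = Fraction(1, determinant)  # assumes integer entries
    return [[d * scale, -b * scale], [-c * scale, a * scale]]


A = [[4, 7], [1, 3]]  # determinant is 4*3 - 7*1 = 5
A_inv = inverse_2x2(A)
I2 = [[1, 0], [0, 1]]

print(A_inv == [[Fraction(3, 5), Fraction(-7, 5)],
                [Fraction(-1, 5), Fraction(4, 5)]])  # True
print(mat_mul(A, A_inv) == I2)  # True
print(mat_mul(A_inv, A) == I2)  # True
```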

The following worked examples show how the zero matrix, identity matrix, and determinant all connect.

Worked example 1

Show that the zero matrix is the additive identity for \(A = \begin{bmatrix} 2 & -4 \\ 6 & 1 \end{bmatrix}\).

Step 1: Write the zero matrix of the same size.

Since \(A\) is \(2 \times 2\), use \(O = \begin{bmatrix} 0 & 0 \\ 0 & 0 \end{bmatrix}\).

Step 2: Add corresponding entries.

\[A + O = \begin{bmatrix} 2+0 & -4+0 \\ 6+0 & 1+0 \end{bmatrix}\]

Step 3: Simplify.

\[A + O = \begin{bmatrix} 2 & -4 \\ 6 & 1 \end{bmatrix} = A\]

The zero matrix leaves \(A\) unchanged, so it is the additive identity.

This result may look simple, but it matters whenever equations involving matrices are rearranged.

Worked example 2

Verify that the identity matrix leaves \(B = \begin{bmatrix} 5 & 2 \\ -3 & 4 \end{bmatrix}\) unchanged under multiplication.

Step 1: Write the correct identity matrix.

Since \(B\) is \(2 \times 2\), use \(I_2 = \begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix}\).

Step 2: Compute \(BI_2\).

\[BI_2 = \begin{bmatrix} 5 & 2 \\ -3 & 4 \end{bmatrix}\begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix} = \begin{bmatrix} 5(1)+2(0) & 5(0)+2(1) \\ -3(1)+4(0) & -3(0)+4(1) \end{bmatrix}\]

Step 3: Simplify.

\[BI_2 = \begin{bmatrix} 5 & 2 \\ -3 & 4 \end{bmatrix} = B\]

Step 4: Check the other side.

\[I_2B = \begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix}\begin{bmatrix} 5 & 2 \\ -3 & 4 \end{bmatrix} = \begin{bmatrix} 5 & 2 \\ -3 & 4 \end{bmatrix} = B\]

The identity matrix is the multiplicative identity because multiplying by it does not change the matrix.

That is exactly the matrix version of multiplying a real number by \(1\).

Worked example 3

Determine whether \(C = \begin{bmatrix} 4 & 1 \\ 2 & 3 \end{bmatrix}\) is invertible, and if so, find its inverse.

Step 1: Compute the determinant.

\[\det(C) = (4)(3) - (1)(2) = 12 - 2 = 10\]

Step 2: Decide whether the inverse exists.

Since \(\det(C) = 10 \neq 0\), the matrix is invertible.

Step 3: Use the \(2 \times 2\) inverse formula.

\[C^{-1} = \frac{1}{10}\begin{bmatrix} 3 & -1 \\ -2 & 4 \end{bmatrix}\]

Step 4: Check by multiplication.

\[CC^{-1} = \begin{bmatrix} 4 & 1 \\ 2 & 3 \end{bmatrix}\frac{1}{10}\begin{bmatrix} 3 & -1 \\ -2 & 4 \end{bmatrix} = \frac{1}{10}\begin{bmatrix} 10 & 0 \\ 0 & 10 \end{bmatrix} = \begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix}\]

So

\[C^{-1} = \frac{1}{10}\begin{bmatrix} 3 & -1 \\ -2 & 4 \end{bmatrix}\]

The nonzero determinant tells you ahead of time that the inverse exists. That saves time and avoids trying impossible algebra.

Worked example 4

Decide whether \(D = \begin{bmatrix} 2 & 4 \\ 1 & 2 \end{bmatrix}\) has an inverse.

Step 1: Compute the determinant.

\[\det(D) = (2)(2) - (4)(1) = 4 - 4 = 0\]

Step 2: Apply the determinant test.

Since \(\det(D) = 0\), the matrix is not invertible.

Step 3: Interpret the result.

The second row is exactly half of the first row, so the rows do not provide independent information. The transformation collapses space in a way that cannot be undone.

Because the determinant is \(0\), \(D\) is singular and has no multiplicative inverse.
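To see the collapse numerically, the short Python sketch below applies \(D\) to several input vectors. The helper name `apply` and the sample inputs are illustrative assumptions, not part of the worked example.

```python
def apply(M, v):
    """Multiply a 2 x 2 matrix M by the vector v = (x, y)."""
    x, y = v
    return (M[0][0] * x + M[0][1] * y, M[1][0] * x + M[1][1] * y)


D = [[2, 4], [1, 2]]

# Two different inputs land on the same output, so D cannot be undone.
print(apply(D, (2, 0)))  # (4, 2)
print(apply(D, (0, 1)))  # (4, 2)

# Every output (u, w) satisfies u = 2w, so the whole plane lands on one line.
outputs = [apply(D, (x, y)) for x in range(-2, 3) for y in range(-2, 3)]
print(all(u == 2 * w for u, w in outputs))  # True
```

Because \((2, 0)\) and \((0, 1)\) both map to \((4, 2)\), no rule could send \((4, 2)\) back to a single original vector, which is what having no inverse means here.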

The table below compares the roles of \(0\) and \(1\) in the real numbers with the roles of the zero and identity matrices.

| Real numbers | Matrices | Role |
|---|---|---|
| \(0\) | Zero matrix \(O\) | Additive identity |
| \(1\) | Identity matrix \(I\) | Multiplicative identity |
| \(a + 0 = a\) | \(A + O = A\) | No change under addition |
| \(a \cdot 1 = a\) | \(AI = IA = A\) | No change under multiplication |
| \(a \neq 0\) means inverse exists | \(\det(A) \neq 0\) means inverse exists | Condition for multiplicative inverse |

Table 1. A comparison of special numbers and special matrices under addition and multiplication.

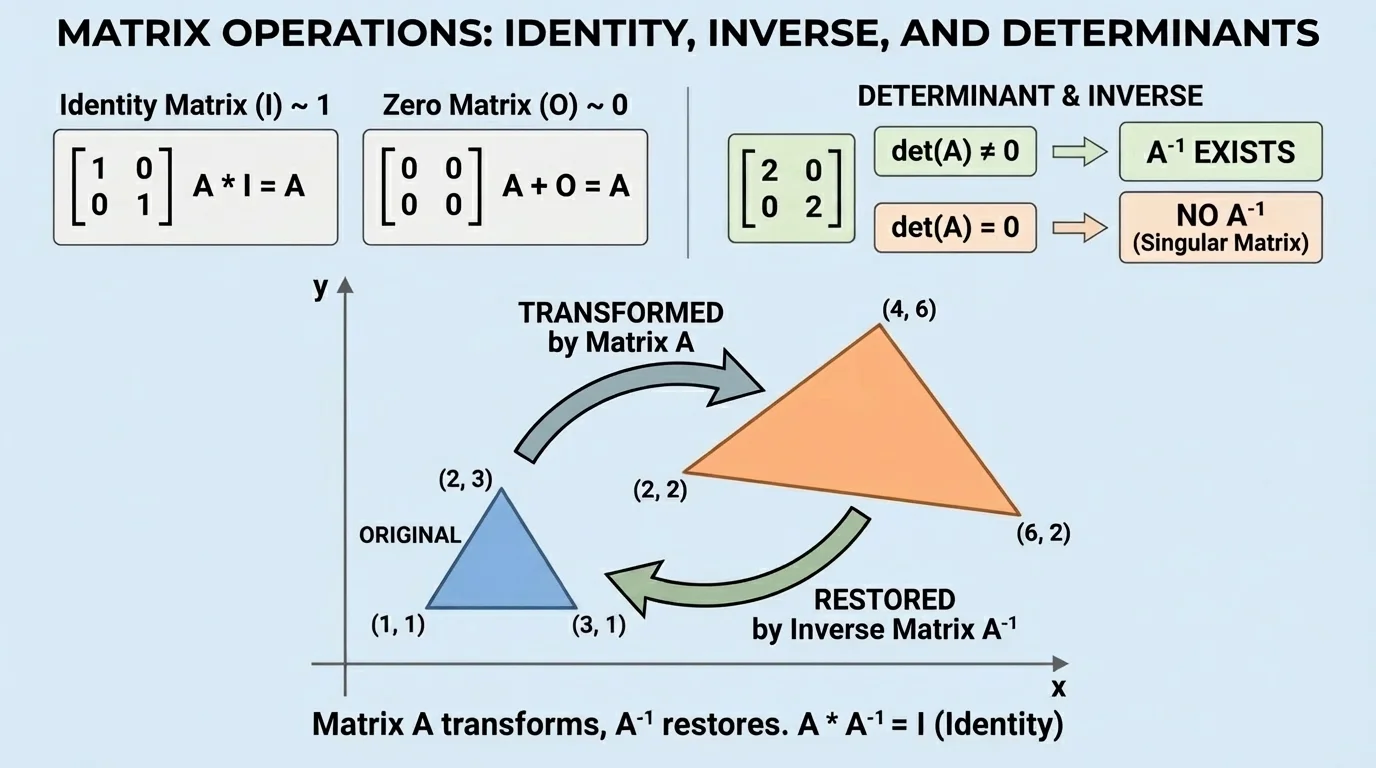

In computer graphics, matrices move and reshape images. A shape on a screen may be rotated, stretched, or reflected by multiplying its coordinate vectors by a matrix. As [Figure 3] shows, the identity matrix leaves the object unchanged, while an inverse matrix restores the original image after a transformation.

This is why invertible matrices are so useful in animation, game design, and 3D modeling. If a transformation has an inverse, software can reverse the change exactly.
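Here is a minimal Python sketch of that transform-and-restore idea. The shear matrix `S`, the unit-square corner points, and the helper names `apply` and `inverse_2x2` are all illustrative assumptions. Real graphics software typically uses floating-point numbers and larger matrices.

```python
from fractions import Fraction


def apply(M, v):
    """Multiply a 2 x 2 matrix M by the point v = (x, y)."""
    x, y = v
    return (M[0][0] * x + M[0][1] * y, M[1][0] * x + M[1][1] * y)


def inverse_2x2(M):
    """Inverse of [[a, b], [c, d]] via (1 / (ad - bc)) * [[d, -b], [-c, a]]."""
    (a, b), (c, d) = M
    determinant = a * d - b * c
    if determinant == 0:
        raise ValueError("determinant is 0, so the transformation cannot be undone")
    scale = Fraction(1, determinant)  # assumes integer entries
    return [[d * scale, -b * scale], [-c * scale, a * scale]]


S = [[1, 2], [0, 1]]                       # a horizontal shear, determinant 1
square = [(0, 0), (1, 0), (1, 1), (0, 1)]  # corners of the unit square

sheared = [apply(S, p) for p in square]
print(sheared)  # [(0, 0), (1, 0), (3, 1), (2, 1)]

S_inv = inverse_2x2(S)
restored = [apply(S_inv, p) for p in sheared]
print(restored == square)  # True: the inverse recovers every corner exactly
```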

Matrices are also used in systems of equations. If a system is written as \(AX = B\), then when \(A\) is invertible, the solution can be written as

\[X = A^{-1}B\]

That idea appears in engineering, economics, and physics whenever several quantities depend on one another at the same time.
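A small Python sketch shows the formula \(X = A^{-1}B\) at work on the system \(4x + y = 9\), \(2x + 3y = 7\). The system itself is an illustrative assumption (its coefficient matrix is the matrix \(C\) from worked example 3), and `inverse_2x2` and `mat_mul` are hypothetical helper names.

```python
from fractions import Fraction


def mat_mul(A, B):
    """Row-by-column product; needs (columns of A) == (rows of B)."""
    if any(len(row) != len(B) for row in A):
        raise ValueError("columns of A must match rows of B")
    return [
        [sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
        for i in range(len(A))
    ]


def inverse_2x2(M):
    """Inverse of [[a, b], [c, d]] via (1 / (ad - bc)) * [[d, -b], [-c, a]]."""
    (a, b), (c, d) = M
    determinant = a * d - b * c
    if determinant == 0:
        raise ValueError("determinant is 0, so X = A^(-1) B cannot be used")
    scale = Fraction(1, determinant)  # assumes integer entries
    return [[d * scale, -b * scale], [-c * scale, a * scale]]


A = [[4, 1], [2, 3]]  # coefficients of 4x + y = 9 and 2x + 3y = 7
B = [[9], [7]]        # right-hand sides as a column

X = mat_mul(inverse_2x2(A), B)
print(X == [[2], [1]])      # True: x = 2, y = 1
print(mat_mul(A, X) == B)   # True: substituting back reproduces B
```

The order matters: the sketch computes \(A^{-1}B\), not \(BA^{-1}\), because matrix multiplication does not commute.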

Looking back at [Figure 2], the geometric meaning becomes even clearer: if a matrix crushes an entire region into a line or a point, the original information is lost, so the transformation cannot be reversed. That is exactly why determinant \(0\) means no inverse.

Encoding and decoding information can also use invertible matrices. An invertible matrix can transform data into another form, and the inverse matrix can recover the original data. The same logic appears in some models of signal processing and digital communication.

One common mistake is using the wrong size zero or identity matrix. Sizes must match the operation. You cannot add matrices of different dimensions, and the identity matrix used in multiplication must be compatible with the matrix dimensions.

Another mistake is assuming that matrix multiplication behaves like number multiplication in every way. Matrices have similarities to numbers, but matrix multiplication can fail to commute, so \(AB\) and \(BA\) should not be assumed equal.

A third mistake is trying to find an inverse without checking the determinant first. For a \(2 \times 2\) matrix, calculating \(ad - bc\) is a fast test. If it is \(0\), stop immediately: no inverse exists.

"Nonzero determinant means reversible; zero determinant means information has been lost."

That idea is worth remembering because it connects arithmetic, geometry, and algebra all at once. The determinant is not just a rule to memorize; it tells you whether a matrix transformation can be undone.