A single viral post can change opinions in hours, even when it is incomplete, misleading, or flat-out wrong. That is why one of the most important academic and real-world skills is learning how to gather information from different kinds of sources, test whether those sources deserve trust, and combine their evidence into a sound judgment. Whether you are deciding which health advice to follow, which product to buy, which public policy to support, or how to solve a community problem, strong decision-making depends on more than one voice and more than one format.

In school and beyond, information rarely arrives in a neat package. You may hear a classmate give an argument aloud, read an article, examine a graph, compare a table of numbers, and watch a short video clip about the same issue. Each source reveals something useful, but each source also has limits. Real understanding comes from synthesis: bringing those pieces together into a fuller picture.

Suppose a town is debating whether to build a new skate park. One source might be a survey showing that many teenagers support it. Another might be a budget report showing the projected cost. A local resident may speak at a meeting about noise concerns. A map may show that the nearest park is far from neighborhoods where many young people live. If you only listen to one of these, your conclusion may be weak. If you compare all of them, your decision becomes more informed.

Using multiple sources helps you do three important things. First, it allows you to verify claims by checking whether several sources support the same idea. Second, it helps you detect what one source leaves out. Third, it improves fairness because you consider more than one perspective before reaching a conclusion.

Multiple-source integration is the process of combining information from different sources and formats in order to understand an issue, solve a problem, or make a decision. Credibility is the degree to which a source is trustworthy and reliable. Accuracy means whether the information is correct, precise, and supported by valid evidence.

Good readers and listeners do not simply collect information. They test it, compare it, and ask what it means when sources agree or disagree. That is especially important when the topic is controversial, emotional, or connected to public choices.

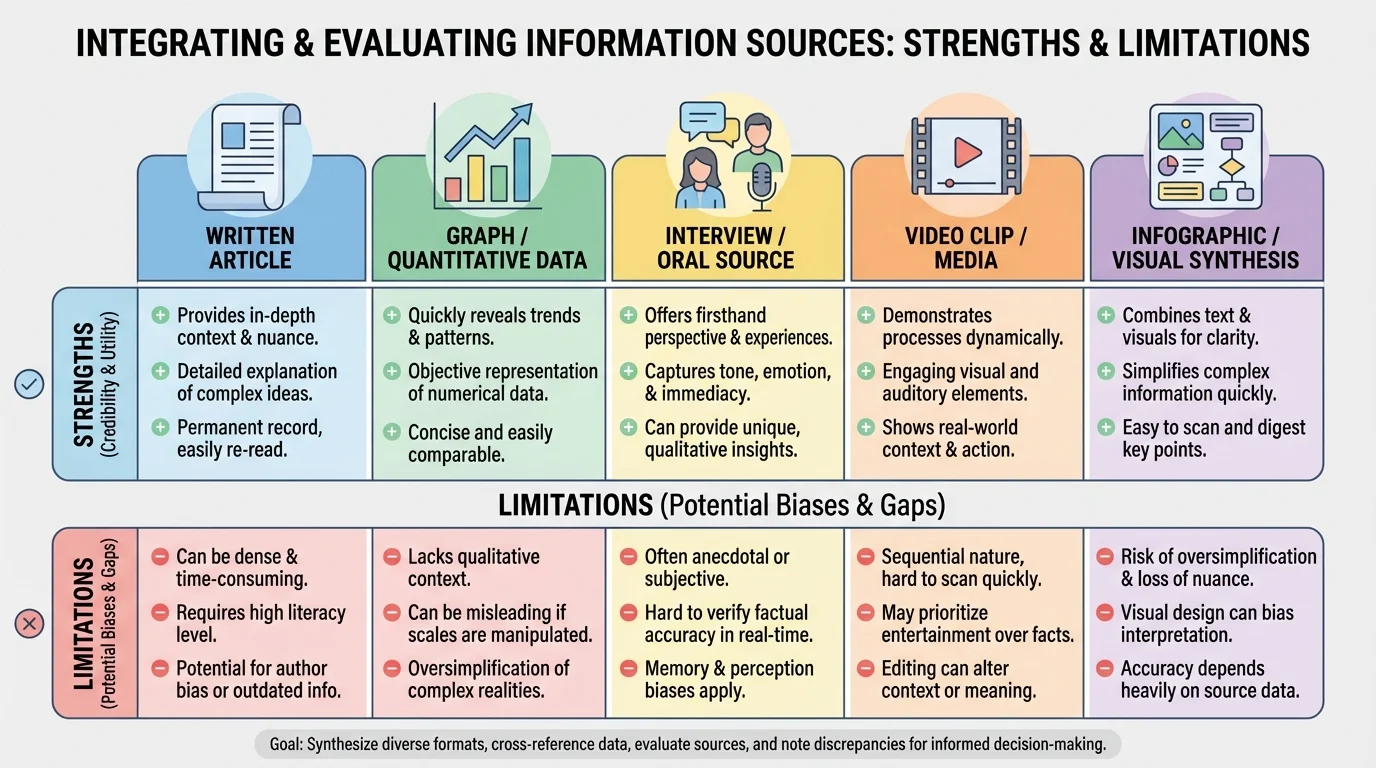

Information comes in many forms, and each one must be read differently, as [Figure 1] shows when comparing visual, oral, and quantitative formats. A written article may provide detailed explanation. A graph may reveal trends quickly. An interview may provide a firsthand account. A speech may show a speaker's priorities and persuasive strategies. A video may combine sound, images, and narration in a way that feels convincing even when its evidence is weak.

A source of quantitative data presents information using numbers, measurements, percentages, or calculations. For example, if school attendance rises from \(92\%\) to \(95\%\), the increase is \(95 - 92 = 3\) percentage points. A visual source might display that change as a bar graph. An oral source might be a principal explaining why attendance improved. A written source might describe the attendance policy that changed.
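A change in percentage points is not the same as a percent change, and sources do not always say which one they report. The worked calculation here uses the same attendance figures to show the difference:

\[
\begin{aligned}
\text{change in percentage points} &= 95\% - 92\% = 3 \text{ percentage points} \\
\text{relative (percent) change} &= \frac{95 - 92}{92} \times 100\% \approx 3.3\%
\end{aligned}
\]

Both numbers describe the same data. Before comparing two quantitative sources, check which kind of change each one is reporting.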

Different formats are strong in different ways. Charts and tables are good for comparison. Speeches and interviews reveal emotion, intent, and personal perspective. Infographics are quick to read but may oversimplify. Research reports often provide methods and evidence, but they may be harder to understand at first. Learning to integrate sources means respecting each format without assuming that any format is automatically correct.

Visual material deserves special attention because it can feel persuasive very quickly. A graph with dramatic colors or a truncated axis that does not start at zero can push viewers toward a conclusion even before they examine the numbers closely. A photo or clip can be powerful, but one image rarely tells the whole story. Strong analysis asks what is shown, what is missing, and who selected the image.

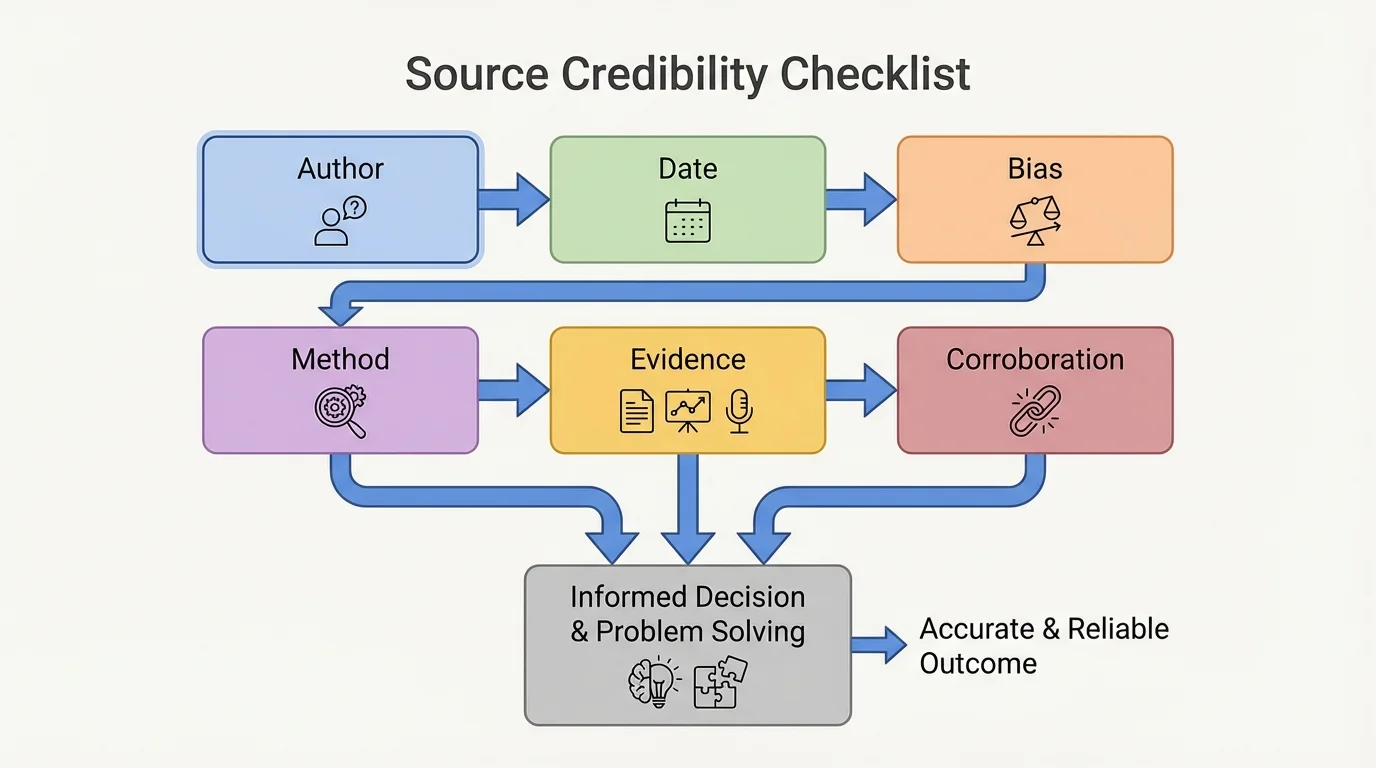

When you judge a source, you should move through a series of questions, as [Figure 2] illustrates, instead of relying on instinct alone. Ask: Who created this? What evidence is provided? How recent is it? What methods were used? Does another trustworthy source support the same claim? These questions turn credibility into something you can examine logically.

Credibility depends partly on expertise and partly on transparency. A medical researcher discussing vaccine data is generally more reliable on that subject than a celebrity with no scientific training. But expertise alone is not enough. A credible source also explains where its information came from and how conclusions were reached.

Bias is another key factor. Bias does not always mean a source is useless; it means the source may lean toward a certain viewpoint, interest, or outcome. An advertisement for a product is designed to persuade you to buy it. A political speech is often designed to win support. A student giving testimony about school lunch quality offers important firsthand experience, but that testimony still reflects one person's perspective.

Date matters too. On fast-changing topics such as technology, medicine, climate events, or economics, information from a few years ago may already be incomplete. Accuracy also depends on method. If a survey reports that \(70\%\) of students support a policy, you should ask how many students were surveyed and whether the sample was representative. A survey of \(20\) students from one club does not carry the same weight as a survey of \(800\) students across multiple grade levels.
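A percentage can hide how many people stand behind it. Applying the same \(70\%\) figure to both surveys shows the actual number of supporters in each:

\[
\begin{aligned}
0.70 \times 20 &= 14 \text{ students} \\
0.70 \times 800 &= 560 \text{ students}
\end{aligned}
\]

The headline percentage is identical in both cases, but one claim rests on \(14\) people from a single club and the other on \(560\) people across several grades.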

Reliable evaluation also requires checking whether facts can be confirmed elsewhere. This is called corroboration. If several independent, trustworthy sources report the same event or trend, confidence in the claim increases. If only one questionable source makes the claim and others do not support it, caution is necessary.

Credibility is not the same as agreement. A source is not credible just because it matches what you already believe. Strong evaluation means asking whether the source uses sound evidence, fair reasoning, and verifiable information, even when its conclusion challenges your opinion.

You should also distinguish between a source that is incorrect and one that is simply limited. A personal interview may be accurate about one student's experience but limited as evidence about the entire school. A national report may be statistically strong but less useful for understanding one neighborhood. Integration means recognizing those different levels of usefulness.

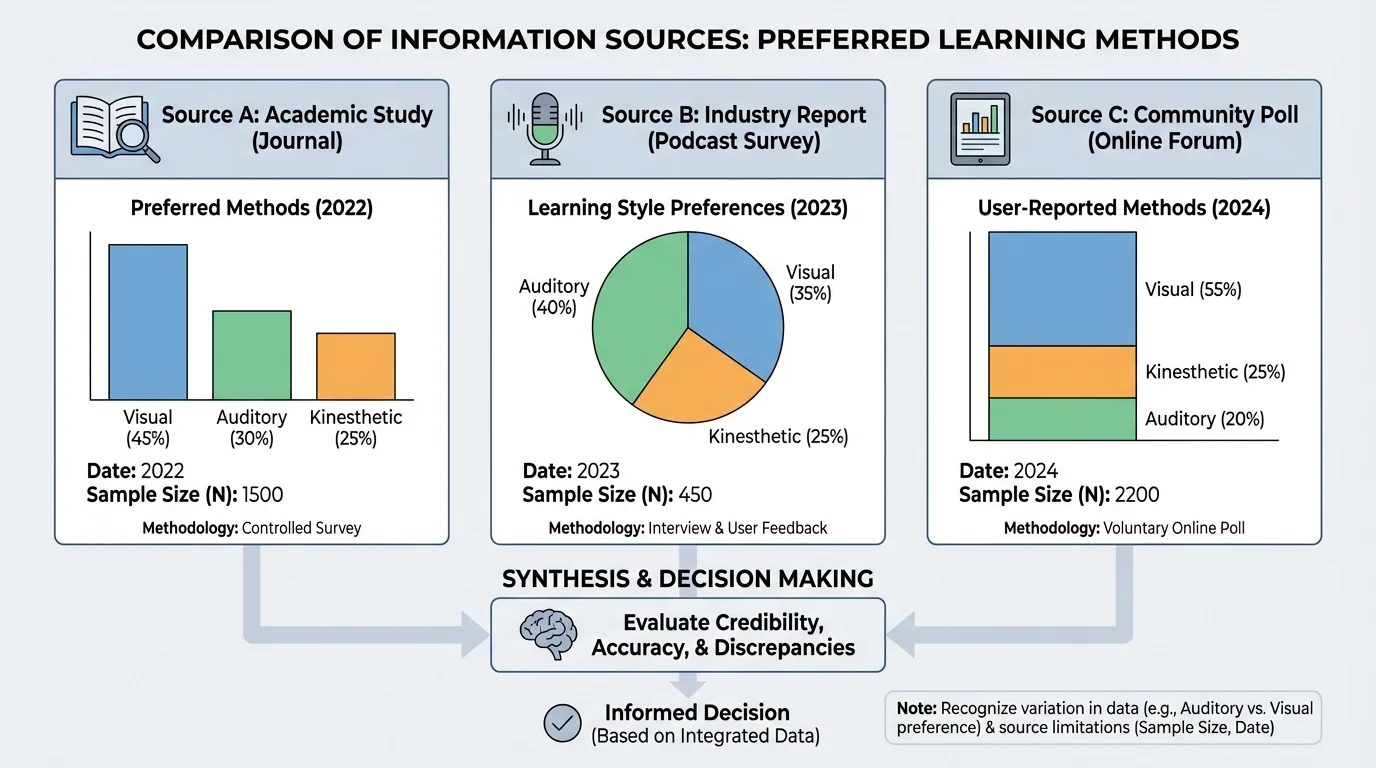

When sources disagree, do not panic. Disagreement is often a clue that deeper thinking is needed, as [Figure 3] shows through different reports on the same topic. A discrepancy is a difference between sources in numbers, descriptions, conclusions, or interpretations. Your job is to notice it and then ask why it exists.

For example, imagine three reports about teen screen time. One says the average is \(6.2\) hours per day, another says \(7.0\), and a third says \(5.8\). These values are not identical, but that does not automatically mean one source is false. The studies may define "screen time" differently, use different age groups, collect data in different years, or rely on self-reporting rather than device tracking.
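To see how large the disagreement actually is, compare the highest and lowest reports directly:

\[
\begin{aligned}
7.0 - 5.8 &= 1.2 \text{ hours per day} \\
1.2 \times 60 &= 72 \text{ minutes per day}
\end{aligned}
\]

A gap of more than an hour a day is large enough that differences in definitions or methods matter. That is why it is worth asking how each study was designed before deciding which figure to trust.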

Discrepancies can come from many causes: different sample sizes, different research methods, different dates, different definitions, or simple human error. They can also result from selective presentation. A speaker may mention only the data that supports a position while ignoring inconvenient evidence. This is often called cherry-picking.

When you see a discrepancy, compare the sources carefully. Which one is most recent? Which one explains its method most clearly? Which one has the largest or most representative sample? Which one may have a strong motive to persuade? Sometimes the best conclusion is not "Source A is true and Source B is false," but rather "The evidence is mixed, and Source A is stronger because its method is more transparent."

| Question to Ask | Why It Matters |
|---|---|
| Do the sources define key terms the same way? | Different definitions can produce different results. |
| Were the data collected at the same time? | Conditions may have changed. |
| Do they use similar sample sizes? | Larger samples often improve reliability. |
| Is one source trying to persuade? | Purpose can shape what is emphasized or omitted. |
| Can the claim be verified elsewhere? | Independent support strengthens trust. |

Table 1. Questions that help explain why sources may disagree.

As seen earlier in [Figure 2], source evaluation works best when you follow a structured checklist rather than jumping to the first conclusion that feels right. Discrepancies are not obstacles to thinking; they are often the reason careful thinking is needed.

To integrate sources well, move beyond summary. Listing what each source says is only the beginning. The stronger step is to connect them: Which sources support each other? Which source adds a missing perspective? Which evidence is most reliable? What conclusion best accounts for all the information, not just one part of it?

A useful process has five parts. First, identify the question or problem clearly. Second, gather diverse sources. Third, evaluate each source for credibility and accuracy. Fourth, compare them for patterns and discrepancies. Fifth, form a conclusion that is supported by the strongest combined evidence.

Decision process example: choosing the safest route to school

A student wants to decide whether walking, biking, or taking the bus is safest and most practical.

Step 1: Gather different source types.

The student checks a local traffic map, reads a city safety report, listens to a crossing guard's observations, and compares bus arrival times.

Step 2: Evaluate each source.

The city report is likely strong for accident data. The crossing guard offers useful firsthand knowledge but from one location. The bus app may be accurate for schedules but not for road safety.

Step 3: Compare evidence.

If the map shows heavy intersections on the walking route, the city report lists several recent bike accidents nearby, and the bus route has a reliable on-time rate of \(88\%\), the bus may be the strongest choice overall.

Step 4: Make the decision.

The conclusion is not based on one source alone. It comes from integrating safety data, personal observation, and transportation reliability.
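The \(88\%\) on-time rate in Step 3 is easier to weigh when it is turned into a count. As a worked illustration, assume a month of \(20\) school days. That figure is an assumption for this calculation and does not come from the example itself.

\[
\begin{aligned}
0.88 \times 20 &= 17.6 \text{ on-time arrivals on average} \\
20 - 17.6 &= 2.4 \text{ late arrivals on average}
\end{aligned}
\]

Even a "reliable" bus would typically run late two or three times in such a month. The student can set that practical detail against the safety evidence.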

Notice that integration is not just about collecting more information. It is about weighting the information correctly. A dramatic anecdote may be memorable, but broad data may carry more weight for a policy decision. At the same time, broad data without human experience can miss how a decision affects real people.

Because this skill includes oral expression and listening, you must also evaluate how people present ideas aloud. When someone speaks, listen not only for the claim but also for the speaker's perspective. Perspective is shaped by background, role, experience, and interests. A teacher, a student athlete, a parent, and a transportation director may all speak about school schedules from very different positions.

Rhetoric refers to the way language is used to influence an audience. Strong rhetoric is not automatically dishonest. In fact, skilled speakers often use rhetorical questions, repetition, vivid examples, emotional appeal, and careful tone to make a valid point more memorable. The key is to separate the power of delivery from the strength of evidence.

For example, a speaker might say, "How many exhausted students must struggle through first period before we act?" That question is emotionally powerful. But to evaluate it well, you still need evidence about sleep, attendance, and academic performance. Emotion may draw attention to an issue, but evidence is what supports a sound decision.

A calm, confident voice often sounds trustworthy, but confidence and credibility are not the same thing. People can speak persuasively while using weak evidence, and careful speakers can sound uncertain while presenting strong facts.

Responding to others' ideas respectfully means identifying what is strong in their argument, where evidence is missing, and how another source changes the picture. Instead of saying, "You're wrong," a stronger response is, "Your personal experience is important, but the district-wide attendance data suggests a different overall pattern." That kind of response shows listening, analysis, and fairness.

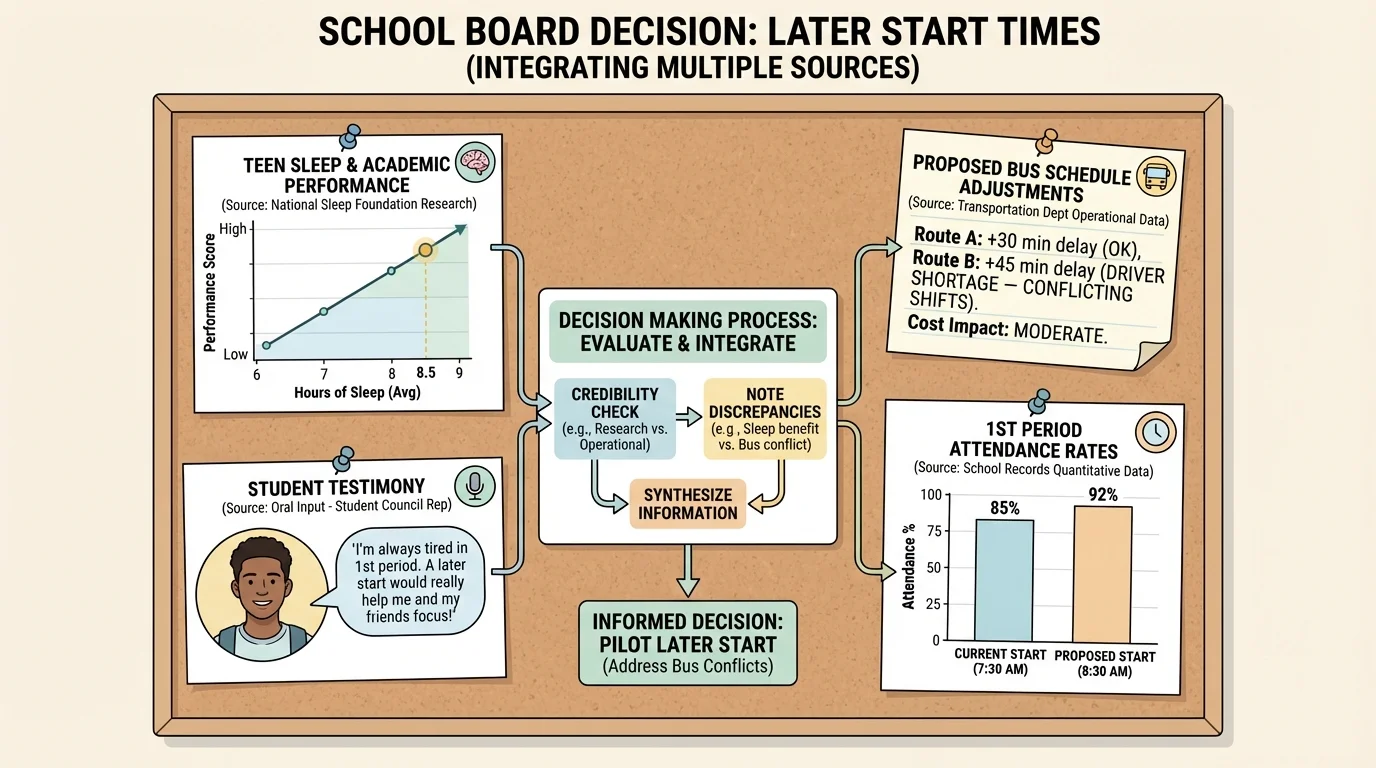

A district is considering moving the high school start time from \(7{:}20\) a.m. to \(8{:}10\) a.m. This issue requires integrating numerical evidence and spoken viewpoints, as [Figure 4] illustrates. One source is a sleep study reporting that teenagers generally perform better with later start times. Another is a student panel describing stress and fatigue. A third is the transportation department explaining that bus schedules would become more expensive and complex. A fourth is attendance data from similar districts.

If the sleep research comes from medical organizations and peer-reviewed studies, it has high credibility on the health question. Student testimony is valuable because it shows lived experience. Transportation concerns matter because good policy must also be practical. If attendance in comparable districts improved from \(90\%\) to \(93\%\) after a schedule change, the resulting \(93 - 90 = 3\) percentage-point improvement is one relevant piece of quantitative evidence.

The best decision does not ignore any of these sources. A weak response would choose only the emotional testimony or only the budget spreadsheet. A stronger response weighs them together. Perhaps the district concludes that later start times are beneficial but should be phased in gradually because transportation changes require planning.

This kind of conclusion is stronger because it explains why some evidence carries more weight in certain parts of the problem. Health research may be strongest for the question of sleep impact. Budget data may be strongest for cost. Student testimony may be strongest for understanding daily experience. Integration means assigning each source to the part of the issue it best addresses.

Suppose someone then argues that "students just need more discipline." You can respond with better reasoning: that personal opinion should be tested against research findings, attendance trends, and the variety of perspectives already considered. As the case in [Figure 4] makes clear, public decisions improve when oral testimony and numerical evidence are considered together.

Now consider a video in which an influencer claims that a certain energy drink "improves focus by \(50\%\)." The claim sounds scientific because it includes a number. But a number alone does not make a claim true. You should ask where the \(50\%\) came from, what "focus" means, how it was measured, and whether an independent scientific source confirms the result.
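A \(50\%\) improvement is a relative claim, so it means little until you know the starting point and the scale. For illustration only, suppose there is a hypothetical focus test and the baseline score is \(40\):

\[
40 \times (1 + 0.50) = 60
\]

The same "\(50\%\)" could describe a jump from \(40\) to \(60\) points or from \(2\) to \(3\) correct answers. That is why the original measurement and scale must be checked before the number is trusted.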

You might compare four sources: the video itself, the company website, a scientific article on caffeine and attention, and guidance from a medical organization. The company has a financial motive, so its claims deserve extra caution. A scientific article may be stronger, but only if the study sample, design, and limits are clear. A medical organization may provide a broader view of risks such as sleep disruption or heart effects.

Source comparison example: testing the \(50\%\) claim

Step 1: Examine the original claim.

The influencer says focus improves by \(50\%\), but gives no study details.

Step 2: Check the company source.

The company cites a small in-house test of \(12\) participants. That is a weak sample for a broad conclusion.

Step 3: Compare with independent research.

An independent study finds that caffeine can improve alertness for some people, but effects vary by dose, sleep, and tolerance.

Step 4: Form a cautious conclusion.

The drink may help some users feel more alert, but the specific claim of \(50\%\) improvement is not strongly supported by reliable evidence.
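The weakness of the company's \(12\)-participant test in Step 2 becomes concrete when you ask how much of the sample one person represents. Compare it with the \(800\)-student survey discussed earlier:

\[
\frac{1}{12} \approx 8.3\% \qquad \text{versus} \qquad \frac{1}{800} = 0.125\%
\]

In a group of \(12\), each participant accounts for about \(8.3\%\) of the sample. A single unusual result can therefore move a group percentage by more than eight points. In the larger survey, one response barely registers.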

This example shows why listening critically matters. The speaker's tone, editing, and confidence may be persuasive, but careful evaluation focuses on evidence quality, method, and verification.

One common mistake is trusting a polished visual more than a plain but reliable source. Another is assuming that the loudest speaker or most emotional story must be correct. A third is ignoring date and context. Information can be real and still be outdated or incomplete.

Another mistake is treating all sources as equally valuable for every question. A personal story can be excellent evidence for describing individual experience, but weak evidence for estimating national trends. A large data set can be excellent for spotting patterns, but weak for understanding how one person feels. Strong thinkers match the source to the purpose.

Recall from earlier reading and speaking lessons the difference between a claim and evidence. A claim states an idea or conclusion. Evidence supports it. Integrating sources means testing whether the evidence really supports the claim across formats and perspectives.

Strong habits include taking notes by source type, labeling where information came from, recording dates, and writing down questions about uncertainty. It also helps to separate observation from interpretation. For example, "The graph rises sharply after January" is an observation. "The policy caused the rise" is an interpretation that still needs support.

Once you have integrated information, you must present your conclusion clearly. A strong conclusion explains the issue, identifies the most credible sources, acknowledges important discrepancies, and states how the evidence leads to the final judgment. It does not pretend the evidence is perfect if uncertainty remains.

For example, a strong response might say: "The most credible evidence supports moving the start time later because medical research and attendance trends point in the same direction. Student testimony strengthens that conclusion by showing daily effects. However, transportation data shows real costs, so a phased plan is more practical than an immediate change." This response shows synthesis, credibility judgment, and attention to multiple perspectives.

That is also how you respond well to other people's ideas. You listen carefully, identify the perspective and rhetorical choices in what they say, compare their argument to other evidence, and answer with reasoning rather than reaction. Informed decisions are rarely built from one source, one voice, or one number. They are built from careful comparison, honest evaluation, and thoughtful integration.