A false claim can reach millions of people before a correction is even noticed. That fact matters not only on social media but also in school research, public policy, medicine, and law. When you search for information, the real challenge is rarely finding something; it is deciding whether what you found deserves to be trusted. Strong researchers do more than collect quotes. They test information, compare it, question it, and decide how much weight it should carry.

In advanced research, information is rarely accepted at face value. A polished website may still contain weak evidence. A viral video may sound convincing but leave out crucial context. A source can be partly accurate, strongly biased, or factually correct yet incomplete. Evaluating information means making careful judgments about both the message and the source behind it.

Research is meant to answer questions or solve problems. If the sources are weak, the conclusion will also be weak, even if the writing is organized and confident. For example, a student researching whether school start times should be later might find a blog post, a newspaper article, a government health report, and a peer-reviewed sleep study. These do not all carry the same authority, and they do not all provide the same kind of evidence. Treating them as equal would lead to poor reasoning.

Evaluating information also protects you from being manipulated. Advertisers, political groups, influencers, and even respected organizations may present information in ways that support their goals. Sometimes the problem is direct falsehood. More often, the problem is selective truth: facts are chosen, framed, or omitted in ways that push an audience toward a conclusion.

False or misleading information often spreads faster than careful reporting because it is designed to trigger emotion, surprise, or outrage. That is exactly why skilled readers slow down when a claim feels instantly persuasive.

For students in upper-level research, source evaluation is not a separate step added at the end. It is part of inquiry from the beginning. [Figure 1] As you refine a research question, gather evidence, and defend a claim, you are constantly deciding which information is dependable and which information should be challenged, limited, or rejected.

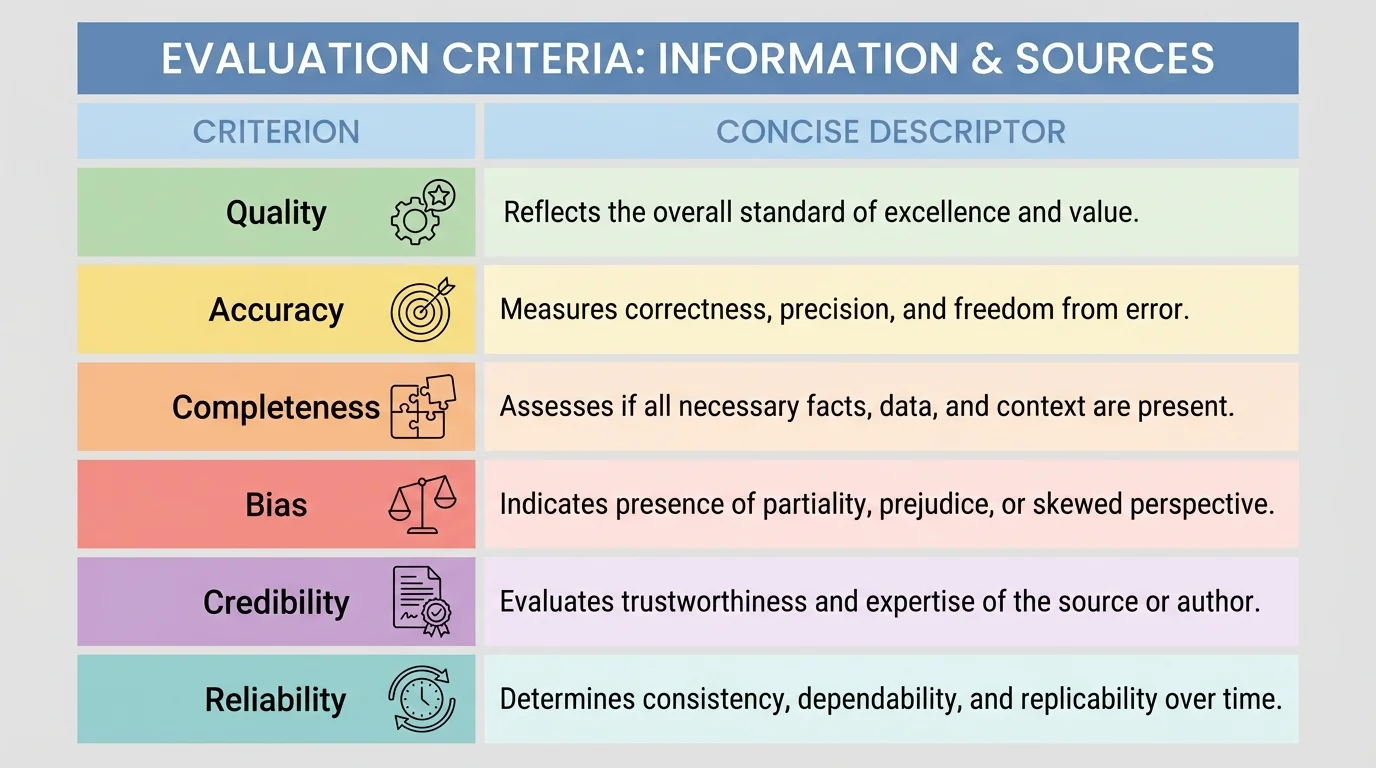

Researchers often use several criteria at once. The most important ones are quality, accuracy, completeness, bias, credibility, and reliability.

Quality refers to how strong and useful the information is. High-quality information is clear, well-supported, specific, relevant to the question, and based on sound evidence. Low-quality information is vague, unsupported, sensationalized, or overly simplistic.

Accuracy means the information is factually correct. Accurate information aligns with verifiable evidence, reports data honestly, and avoids factual errors. A source may sound professional and still be inaccurate if it misstates dates, numbers, quotations, or scientific findings.

Completeness means the information gives enough context and coverage to support understanding. A statement can be accurate but incomplete. For instance, saying that a medicine has side effects is accurate, but if the source fails to explain how common they are, how severe they are, or which patients they affect, the reader may be misled.

Bias is a tendency to present information from a particular perspective, often favoring one side, value, or outcome. Bias does not always mean a source is useless. It means you must recognize how perspective shapes what is emphasized, ignored, or interpreted.

Credibility refers to whether a source deserves belief based on expertise, reputation, transparency, and evidence. Reliability refers to whether the source consistently produces trustworthy information over time. One article might appear credible, but reliability asks a broader question: does this author, publisher, or institution have a dependable track record?

Source evaluation is the process of judging information and its origin to determine how trustworthy, useful, and appropriate it is for a specific research purpose.

Authority means recognized expertise or legitimate responsibility in a field. Corroboration means confirming a claim by comparing it with other independent sources.

These categories overlap. A source with strong credibility is more likely to be accurate, but credibility does not guarantee accuracy. A highly reliable publication can still make a mistake. A biased source can still contain true facts. Good evaluation depends on careful distinctions rather than quick labels.

[Figure 2] One of the first questions to ask is simple: Who is speaking? Authors, editors, institutions, and publishers shape the value of a source. If an article about climate science is written by an atmospheric scientist at a major university and published in a peer-reviewed journal, it begins with a different level of authority than an anonymous post on a discussion board.

Authority depends on the topic. A medical doctor may have expertise in public health but not necessarily in economics. A historian may be authoritative about archival documents but not about chemical engineering. Strong researchers match the source's expertise to the question being investigated.

Look for signals of authority: author name, credentials, institutional affiliation, publication venue, editorial review, citations, and transparency about methods. Government agencies, university presses, peer-reviewed journals, and major research organizations often provide stronger authority than personal websites or unedited posts, though their claims should still be checked.

Domain names can offer clues, but they are not enough by themselves. A .gov site usually suggests a government source, and a .edu site often connects to an educational institution, but not every page on those domains is equally strong. A student webpage hosted by a university is not automatically authoritative. The content still needs evaluation.

Authority is not the same as popularity. A source can have millions of views and still lack expertise, evidence, or editorial standards.

When no author is listed, caution should increase. Anonymous writing is harder to verify, and missing authorship can signal weak accountability. In some cases, organizations publish without naming a single author, but they should still identify the responsible institution, methods, and publication date.

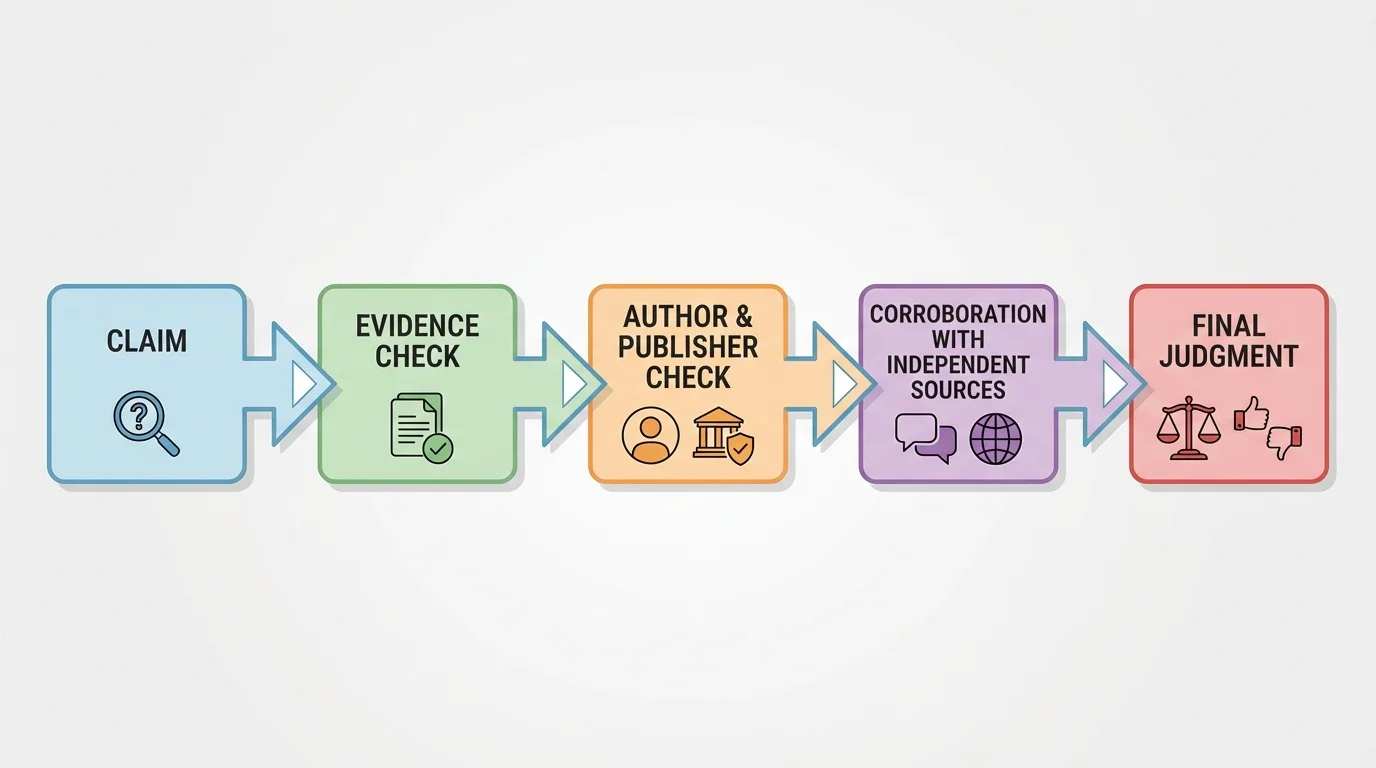

Good research depends on more than persuasive claims. Evaluation works as a process: identify the claim, inspect the evidence, verify the source, compare with other reporting, and then judge its value. A claim is only as strong as the evidence supporting it.

Ask what kind of evidence is being used. Is the source citing data, experiments, surveys, official records, expert interviews, eyewitness testimony, or personal opinion? Different questions require different forms of evidence. A claim about voter turnout should rely on election data. A claim about literary symbolism should rely on close reading and scholarly interpretation. A claim about a chemical reaction should rely on tested scientific evidence, such as measured observations of reactions like \(2\textrm{H}_2 + \textrm{O}_2 \rightarrow 2\textrm{H}_2\textrm{O}\).

Verification means checking whether the evidence actually supports the claim. If a source says, "Studies prove teenagers cannot learn before 10 a.m.," a careful reader asks: Which studies? How many participants? What methods were used? Was the result general or limited? Did the study show causation or only correlation?

Corroboration is essential because a single source can be mistaken, selective, or misleading. Independent agreement across several strong sources increases confidence. If a government report, a peer-reviewed study, and a reputable news investigation all support the same conclusion using different evidence, that conclusion becomes stronger.

This is especially important online, where one claim may be copied across many websites. Ten websites repeating the same unsupported statement do not equal ten independent confirmations. Researchers must trace information back to its original reporting, dataset, document, or study.
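The difference between repetition and independent confirmation can be sketched in a few lines of Python. This is only an illustration: the `Citation` record, the site names, and the origin labels are all hypothetical, and it assumes the researcher has already traced each page back to its origin by hand.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Citation:
    site: str    # page where the claim appears (hypothetical names below)
    origin: str  # original report, dataset, or study it traces back to; "" if none


def count_independent_origins(citations):
    """Count distinct traceable origins, ignoring pages that cite nothing."""
    return len({c.origin for c in citations if c.origin})


# Hypothetical example: ten pages repeat the same claim.
citations = (
    [Citation(f"blog-{i}.example", "") for i in range(5)]  # no traceable origin
    + [Citation(f"news-{i}.example", "national-survey-report") for i in range(4)]
    + [Citation("journal.example", "peer-reviewed-study")]
)

print(len(citations), "pages,", count_independent_origins(citations), "independent origins")
# prints: 10 pages, 2 independent origins
```

Ten pages collapse to two origins here. Even that count is an upper bound, since two reports that draw on the same underlying dataset are not fully independent either.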

Case study: verifying a statistic

A student finds the claim that "nearly all teens are sleep-deprived."

Step 1: Identify the exact claim.

The phrase "nearly all" is broad and needs precise evidence.

Step 2: Locate the original source.

The student follows the article's link to a national health organization report summarizing survey data.

Step 3: Check the method.

The report defines sleep deprivation, gives the age range, sample size, and year of collection.

Step 4: Corroborate.

The student compares the report with a peer-reviewed study and a second public health source. The exact percentages differ, but all three show that a large share of teens get less sleep than recommended.

Step 5: Revise the wording.

Instead of writing "nearly all teens are sleep-deprived," the student writes a more accurate claim supported by evidence: many teenagers report getting less sleep than health experts recommend.

Notice that the revised claim is less dramatic but more defensible. Strong research often replaces exaggeration with precision.

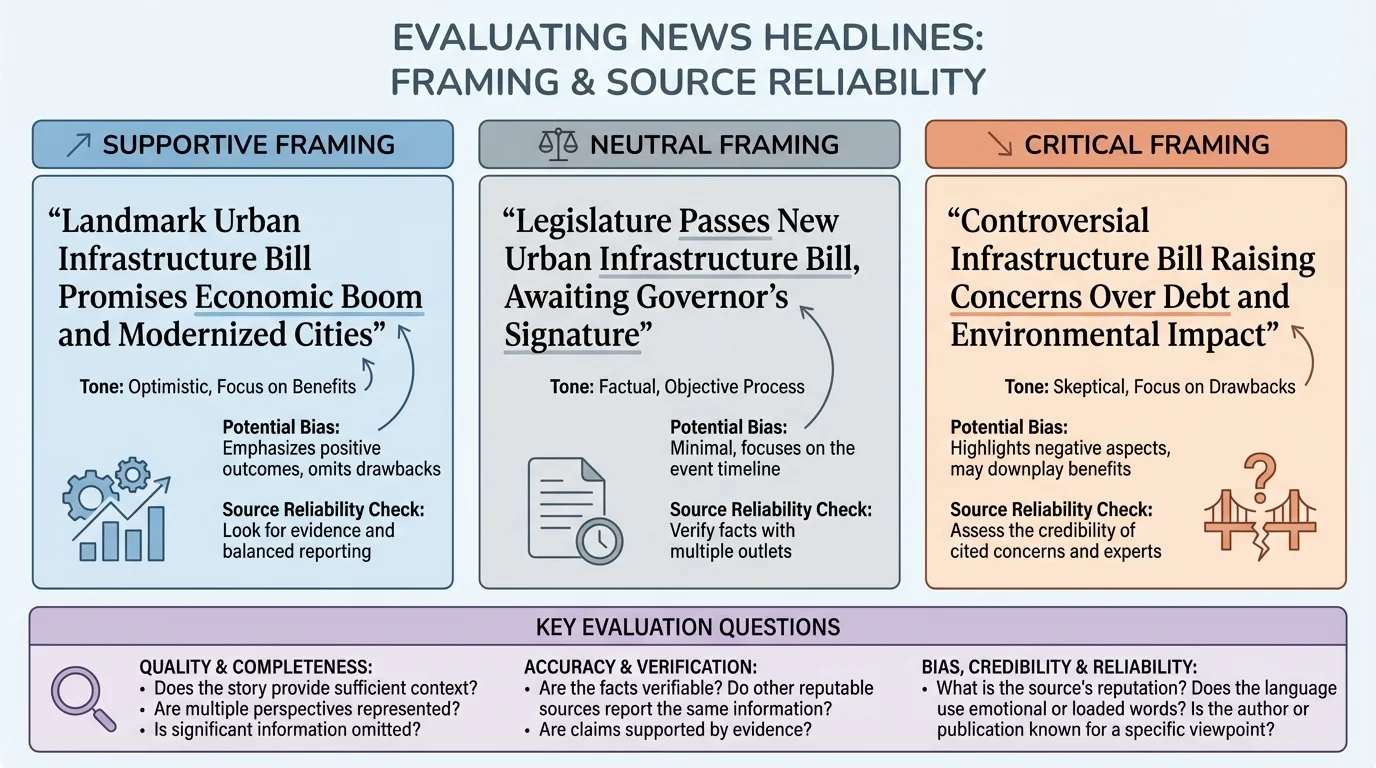

[Figure 3] Bias often appears not through obvious lies but through selection, framing, and emphasis. The same event can be described in sharply different ways. Every source has a perspective, but not every source handles that perspective responsibly.

A source may reveal bias through loaded language, one-sided evidence, emotionally manipulative wording, selective statistics, or omission of opposing views. For example, one article may describe a policy as "necessary reform," while another calls it "government overreach." Those phrases do more than describe; they guide interpretation.

Bias can also appear in what is left out. Suppose an article argues that a city should ban electric scooters because accident rates increased. If it includes accident numbers but ignores ridership growth, infrastructure issues, and comparison with other forms of transportation, the discussion may be incomplete in ways that distort judgment.
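A short calculation shows why the missing ridership figure matters. The numbers below are invented for illustration and do not come from any real city; the function name is also hypothetical.

```python
def accidents_per_100k_rides(accidents, rides):
    """Convert a raw accident count into a rate per 100,000 rides."""
    return accidents * 100_000 / rides


# Hypothetical figures: accidents rose, but ridership doubled.
last_year = accidents_per_100k_rides(accidents=40, rides=200_000)
this_year = accidents_per_100k_rides(accidents=60, rides=400_000)

print(last_year, this_year)  # prints: 20.0 15.0
```

In this made-up case the raw count rises by half, from 40 to 60, while the rate per ride falls from 20 to 15 per 100,000 rides. An article that reports only the count would be accurate and still point readers toward the wrong conclusion.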

It is important to distinguish bias from point of view. A historian writing from a labor-history perspective may emphasize workers' experiences. That perspective is not automatically dishonest. Problems arise when perspective leads to unfair distortion, unsupported conclusions, or hidden agendas.

Some sources are openly persuasive, such as editorials, campaign ads, advocacy websites, and opinion columns. These can still be useful, especially when researching debate, public attitudes, or stakeholder positions. However, they should not be treated the same way as neutral reporting or empirical research.

Bias does not always cancel value

A biased source may still provide important evidence about language, priorities, assumptions, or stakeholder interests. For example, a company's statement about its own environmental practices may not be neutral, but it can still reveal what the company claims, what it avoids discussing, and which data need independent verification.

As the later source comparisons show, framing matters because tone influences what readers notice first. Evaluation therefore includes not only asking whether a claim is true, but also asking how the presentation shapes audience reaction.

Incomplete information can be just as misleading as false information. A graph without labels, a quote without surrounding context, or a study mentioned without its limitations can push readers toward a conclusion that the full evidence would not support.

Context includes time, place, audience, method, and scope. A source from 2014 may be outdated in a field like artificial intelligence or vaccine research. A local case study may not apply nationally. A survey of 120 volunteers may not represent an entire population. Context helps you judge whether the information fits your research question.

Completeness also requires attention to counterevidence. If every source you selected agrees with your opinion, that may not mean your position is perfectly supported; it may mean your search was too narrow. Strong research actively looks for disagreement, exceptions, and limitations.

When evaluating a source, ask: What important information is missing? What questions remain unanswered? What would I need to know before using this as evidence? These questions often reveal whether a source deserves a central role in your research or only a limited mention.

Not all sources are designed for the same purpose. Some provide original research, some report current events, some summarize expert knowledge, and some persuade audiences. A skilled researcher understands how each type functions and what it can and cannot do well.

| Source type | Typical strengths | Typical limitations | Best use in research |
|---|---|---|---|
| Peer-reviewed journal article | Specialized expertise, detailed methods, evidence-based analysis | Can be technical, narrow, or slow to reflect recent events | Core evidence for academic claims |
| Government report | Official data, large-scale surveys, public records | May reflect agency scope or policy priorities | Statistics, regulations, demographic trends |
| Reputable news article | Timely reporting, interviews, current developments | May simplify complex research or focus on immediate events | Recent context and public response |
| Advocacy organization website | Clear position, stakeholder perspective, issue-specific information | Strong persuasive intent, selective evidence | Understanding arguments and viewpoints |
| Book from university press | Depth, synthesis, broad context | May not be current in fast-changing fields | Background, historical development, major debates |
| Social media post | Immediate reactions, firsthand commentary, public discourse | Little verification, high risk of misinformation or decontextualization | Studying response or rhetoric, not as stand-alone factual proof |

Table 1. Comparison of common source types, their strengths, limitations, and uses in research.

This table does not mean one source type is always good and another is always bad. It means each source must be used according to its purpose and limitations. A social media post might be poor evidence for a scientific claim but excellent evidence for how a rumor spread or how a public figure framed an issue.

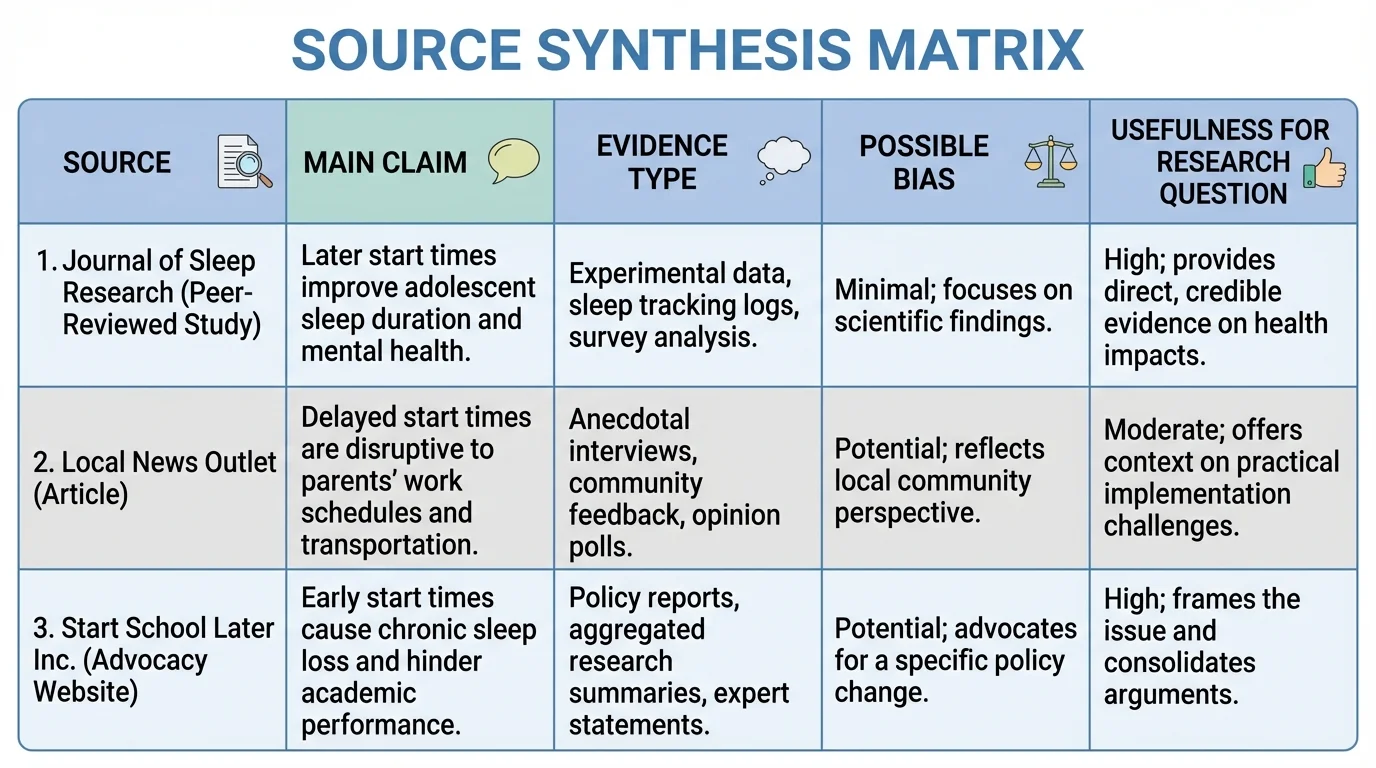

[Figure 4] Research becomes stronger when you synthesize multiple sources instead of depending on a single authority. Comparing sources side by side reveals agreement, disagreement, and missing information, and it guards against overreliance on any one source. Synthesis means combining ideas and evidence from different sources to build a clear, defensible answer to a question.

Suppose your research question is: Should schools delay start times? One strong approach would combine a sleep-science study, a public health report, district attendance data, and a reputable news article covering districts that changed schedules. Each source contributes something different: biological evidence, large-scale public health patterns, local outcomes, and implementation challenges.

Instead of listing one source after another, compare them. Which sources agree that later start times improve sleep? Which mention transportation cost problems? Which provide direct evidence, and which mainly comment on policy? Which are current enough to matter? This comparison helps you develop a nuanced claim rather than a one-sided argument.

Research application: building a defensible conclusion

Question: Should a city restrict short-term rentals in order to protect housing availability?

Step 1: Gather varied authoritative sources.

The researcher collects a city housing report, a peer-reviewed urban studies article, a newspaper investigation, and a statement from a short-term rental company.

Step 2: Evaluate each source.

The housing report offers official local data. The journal article provides broader scholarly analysis. The newspaper article adds current examples and interviews. The company statement shows stakeholder interests but has clear persuasive bias.

Step 3: Compare points of agreement and disagreement.

The city report and journal article both suggest some link between short-term rentals and reduced housing supply in high-demand areas. The company statement argues the effect is small and highlights tourism benefits.

Step 4: Identify missing context.

The researcher notices that tourism income, neighborhood differences, and enforcement costs also matter.

Step 5: Form a measured conclusion.

Rather than claiming short-term rentals are the only cause of housing shortages, the researcher concludes that in some high-demand neighborhoods they appear to contribute to limited housing availability, which may justify targeted regulation.

This is what mature research looks like: not certainty without evidence, but judgment grounded in carefully evaluated evidence. Weighing the four sources side by side keeps any single one, including the company statement, from dominating the conclusion.

Evaluation is also an ethical responsibility. When you quote, paraphrase, or summarize a source, you should represent it fairly. Taking a sentence out of context, trimming away important qualifications, or paraphrasing in a way that changes meaning is a misuse of evidence, even if the citation is technically present.

Fair use of sources requires accurate quotation, honest paraphrasing, and acknowledgement of limitations. If a study finds a trend but notes uncertainty, your writing should preserve that uncertainty. If a source is useful but biased, you can still include it, but you should frame it appropriately for readers.

"The test of a first-rate intelligence is the ability to hold two opposed ideas in mind at the same time and still retain the ability to function."

— F. Scott Fitzgerald

That idea applies to research. You may need to recognize that a source offers valuable insight and still has limitations. Intellectual honesty means resisting the temptation to use sources only as weapons for your preselected conclusion.

When facing a new source, use a repeatable process. First, identify the author, publisher, date, and purpose. Second, determine the claim and the evidence supporting it. Third, evaluate authority and expertise. Fourth, check for bias, loaded language, and omissions. Fifth, corroborate with independent sources. Sixth, decide how the source will function in your research: central evidence, background context, opposing viewpoint, or example of rhetoric.
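For researchers who keep notes digitally, the six steps above could be tracked with a small checklist like the following. This is a minimal sketch, not a standard tool: the `SourceReview` class and its method names are hypothetical, and the step and role wording is taken from the paragraph above.

```python
from dataclasses import dataclass, field

STEPS = (
    "Identify the author, publisher, date, and purpose",
    "Determine the claim and the evidence supporting it",
    "Evaluate authority and expertise",
    "Check for bias, loaded language, and omissions",
    "Corroborate with independent sources",
    "Decide how the source will function in the research",
)
ROLES = ("central evidence", "background context",
         "opposing viewpoint", "example of rhetoric")


@dataclass
class SourceReview:
    title: str
    notes: dict = field(default_factory=dict)  # step number (1-5) -> researcher's note
    role: str = ""

    def record(self, step, note):
        """Store a note for one of the first five steps."""
        if step not in range(1, 6):
            raise ValueError("steps 1-5 take notes; step 6 is assign_role()")
        self.notes[step] = note

    def remaining_steps(self):
        """List the steps that have not been completed yet."""
        pending = [STEPS[n - 1] for n in range(1, 6) if n not in self.notes]
        if not self.role:
            pending.append(STEPS[5])
        return pending

    def assign_role(self, role):
        """Step 6: allowed only after steps 1-5 have been recorded."""
        if role not in ROLES:
            raise ValueError(f"unknown role: {role}")
        if any(n not in self.notes for n in range(1, 6)):
            raise RuntimeError("finish steps 1-5 before assigning a role")
        self.role = role


review = SourceReview("Company statement on short-term rentals")
review.record(1, "Published by the rental company; purpose is persuasive")
print(review.remaining_steps()[0])
# prints: Determine the claim and the evidence supporting it
```

Refusing to assign a role until the first five steps are recorded mirrors the order of the process: a source's place in the argument is decided last, after it has been examined.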

You can also ask a series of diagnostic questions: Is the source current enough for this topic? Does it cite evidence that can be traced? Is the evidence relevant and sufficient? What assumptions shape the source? Who benefits if the audience believes this claim? Are important counterarguments addressed? The answers reveal far more than surface appearance.

Over time, strong evaluation becomes a habit of mind. You begin to notice when headlines exaggerate, when statistics lack context, when experts speak outside their fields, and when a source sounds certain without earning that certainty. That habit is essential not only for school assignments but for citizenship, work, and daily life.