Your phone can send a photo across the world in seconds, a hospital can look inside a broken bone without surgery, and a scientist can identify elements in a distant star without ever touching it. These very different achievements rely on one powerful idea: waves can interact with matter in ways that allow us to create, move, detect, and decode information.

Modern life is built on signals. A song streaming to wireless earbuds, a QR code scanned at a store, a weather satellite image, and an MRI scan all depend on patterns carried by waves. Some of these waves are mechanical, like sound traveling through air. Others are electromagnetic, like radio waves, visible light, X-rays, and microwaves. In each case, the technology works because matter responds to the wave in a predictable way.

This is one of the deepest ideas in physics: waves are not just disturbances that move. They are tools for information transfer. By controlling a wave's pattern, and by understanding how materials absorb, reflect, transmit, or emit it, humans have built entire communication systems, medical tools, and research instruments.

A signal is a pattern that carries information from one place to another. When you speak into a microphone, air-pressure changes form a sound wave. The microphone converts those changes into an electrical signal. That signal can be amplified, stored, transmitted, and eventually turned back into sound by a speaker. The message is preserved because the pattern is preserved.

Waves are useful for information transfer because they can travel, change in controlled ways, and interact with materials that detect them. A signal might represent sound, an image, a temperature reading, or digital data. In digital systems, the pattern is often encoded as a sequence of bits, usually represented conceptually by two states such as 0 and 1, though the real system may use voltages, magnetic orientations, or light pulses.

Information transfer by waves happens in a chain. A source produces a wave or signal, a medium or field allows it to travel, matter interacts with it at the receiver, and a device interprets the result. If any part of that chain adds too much distortion or noise, the original message becomes harder to recover.

This is why communication engineers care about signal strength, doctors care about image clarity, and scientists care about detector sensitivity. In all of these cases, the central challenge is the same: how to separate meaningful patterns from unwanted effects.

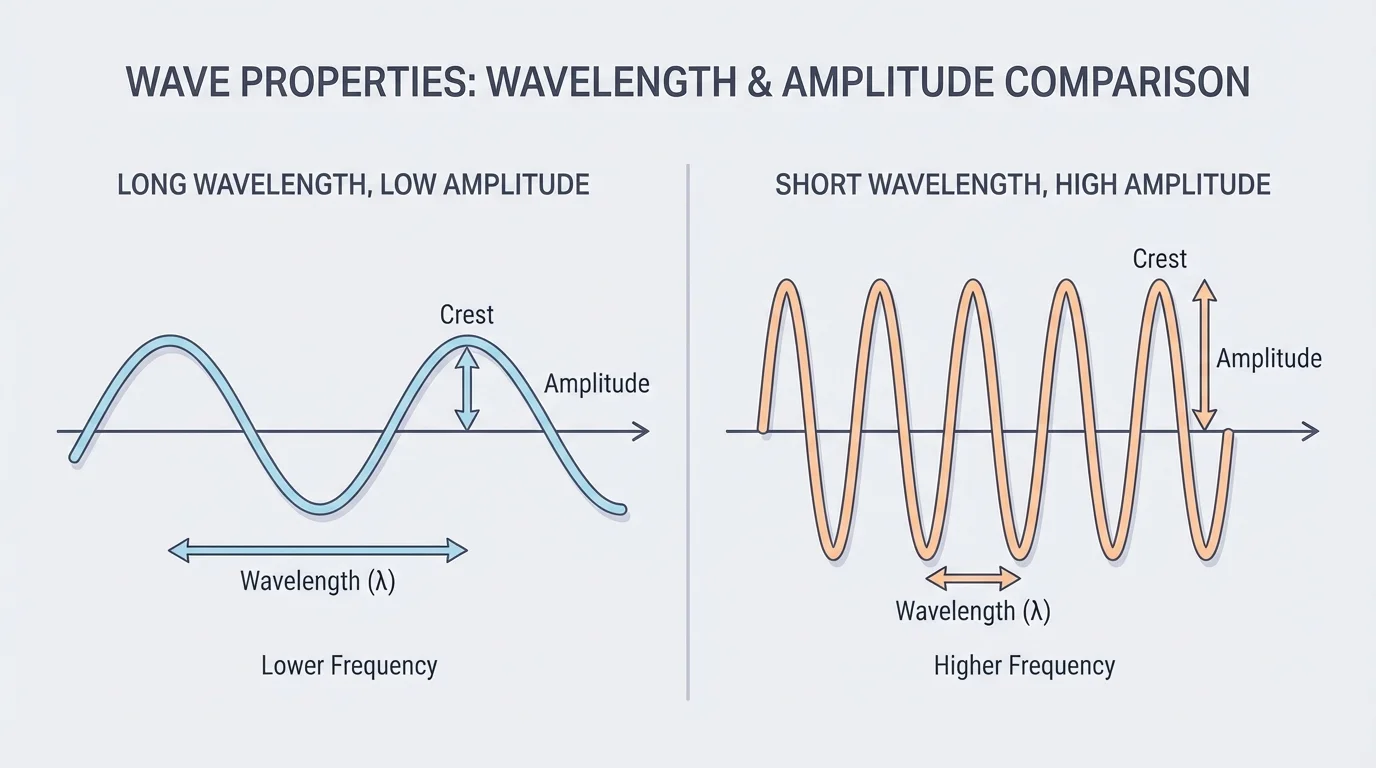

Waves have measurable properties, and those properties determine how they behave, as [Figure 1] shows through visual comparisons of wavelength, frequency, and amplitude. A wavelength is the distance from one crest to the next. Frequency is how many wave cycles pass a point each second, measured in hertz. Amplitude describes the size of the disturbance and is related to the energy carried by the wave.

These quantities are connected by an important relationship:

\[v = f\lambda\]

Here, \(v\) is wave speed, \(f\) is frequency, and \(\lambda\) is wavelength. If the wave speed stays the same, increasing frequency means decreasing wavelength.

For example, if a wave travels at \(300 \textrm{ m/s}\) and has frequency \(100 \textrm{ Hz}\), then its wavelength is \(\lambda = \dfrac{300}{100} = 3 \textrm{ m}\). If the frequency rises to \(150 \textrm{ Hz}\) in the same medium, the wavelength becomes \(\lambda = \dfrac{300}{150} = 2 \textrm{ m}\).
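The same arithmetic can be checked in a few lines of Python. This is a minimal sketch that rearranges \(v = f\lambda\) into \(\lambda = v/f\). The function name `wavelength` is our own illustrative choice, not part of any library.

```python
def wavelength(speed_m_per_s: float, frequency_hz: float) -> float:
    """Return the wavelength in metres using lambda = v / f."""
    if frequency_hz <= 0:
        raise ValueError("frequency must be positive")
    return speed_m_per_s / frequency_hz

# Same medium (v = 300 m/s), two different frequencies.
for f in (100.0, 150.0):
    print(f"f = {f:.0f} Hz -> lambda = {wavelength(300.0, f):.1f} m")
```

Because the speed is held fixed, raising the frequency by half shrinks the wavelength by a third, just as in the worked example.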

Mechanical waves require matter to travel through. Sound is a mechanical wave, so it cannot move through empty space. Electromagnetic waves do not require a material medium; they can travel through a vacuum. That is why sunlight reaches Earth across space.

The electromagnetic spectrum includes radio waves, microwaves, infrared, visible light, ultraviolet, X-rays, and gamma rays. They are all the same basic kind of wave but differ in wavelength, frequency, and energy. Because all electromagnetic waves travel at the same speed in a vacuum, a shorter wavelength always means a higher frequency, and a higher frequency means greater energy per photon.

Different parts of the spectrum are useful for different jobs. Radio waves are excellent for broadcasting and wireless communication over long distances. Visible light is ideal for cameras and fiber optics. X-rays can pass through soft tissue more easily than bone, making them useful for medical imaging. Returning to [Figure 1], the differences in wavelength and frequency help explain why no single wave type is best for every application.

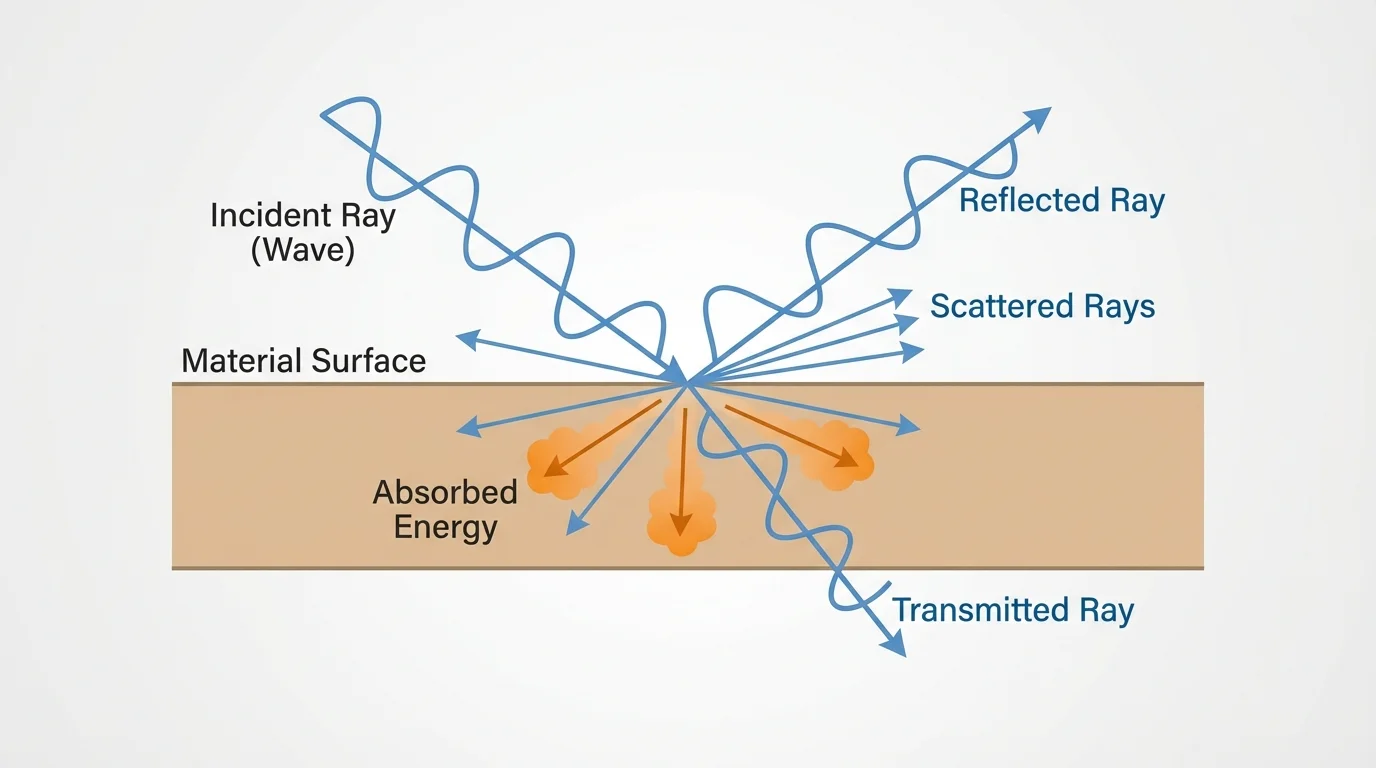

The reason waves become technology is that matter does not respond to all waves in the same way, and [Figure 2] illustrates the main possibilities when a wave meets a material boundary. A wave may be reflected, absorbed, transmitted, refracted, scattered, or combined with other waves through interference. Each effect can reveal information.

Reflection occurs when a wave bounces off a surface. Mirrors reflect visible light. Radar detects radio waves reflected from airplanes or storms. Ultrasound relies on sound waves reflected from boundaries inside the body.

Absorption occurs when matter takes in wave energy. Dark clothing absorbs more visible light than light-colored clothing. Microwave ovens work because water-rich food absorbs microwave energy efficiently, increasing molecular motion and raising temperature.

Transmission happens when a wave passes through material. Refraction is the bending of a wave as it changes speed in a new medium. Lenses focus light by refraction, which is essential in cameras, eyeglasses, microscopes, and telescopes.

Scattering sends waves in many directions. Fog scatters light, making headlights less effective. In medical and scientific imaging, scattering can either help reveal structure or blur the signal.

Interference occurs when waves overlap. Where crest meets crest, the waves reinforce each other and the result is stronger (constructive interference); where crest meets trough, they partly or completely cancel (destructive interference). Noise-canceling headphones use destructive interference, generating a wave that reduces unwanted sound. Diffraction is the spreading of waves around edges or through openings, and it matters when designing antennas, microscopes, and imaging systems.
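Superposition can be illustrated by adding two sampled sine waves point by point. This is an idealized sketch: it assumes the unwanted wave is known exactly, whereas real noise-canceling headphones must measure incoming sound continuously with microphones. The helper `sine_wave` and the 100 Hz tone are hypothetical choices for illustration.

```python
import math

def sine_wave(amplitude, frequency_hz, phase_rad, times):
    """Sample A*sin(2*pi*f*t + phase) at each time in `times`."""
    return [amplitude * math.sin(2 * math.pi * frequency_hz * t + phase_rad) for t in times]

# 100 samples spread over 10 ms (one full cycle of a 100 Hz tone).
times = [i / 10_000 for i in range(100)]
noise = sine_wave(1.0, 100.0, 0.0, times)

# Constructive: an identical copy, in phase, doubles the peaks.
louder = [a + b for a, b in zip(noise, sine_wave(1.0, 100.0, 0.0, times))]
# Destructive: the same wave shifted by half a cycle (pi radians) cancels it.
quieter = [a + b for a, b in zip(noise, sine_wave(1.0, 100.0, math.pi, times))]

print(f"peak of original:     {max(abs(x) for x in noise):.3f}")
print(f"peak, in phase:       {max(abs(x) for x in louder):.3f}")
print(f"peak, half-cycle off: {max(abs(x) for x in quieter):.3f}")
```

The half-cycle shift is the key design choice: it places every crest of one wave on a trough of the other.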

Polarization is another important property of electromagnetic waves. It describes the orientation of the wave's oscillation. Polarized sunglasses reduce glare because reflected light from flat surfaces is often polarized. Many imaging and communication systems also use polarization to improve performance.

Reflection is the bouncing of a wave from a surface. Refraction is the bending of a wave as it changes speed in a new medium. Absorption is the transfer of wave energy into matter. Interference is the combination of overlapping waves that can strengthen or weaken the result.

These interactions are not just side effects. They are the basis of detectors. A detector works because some material changes when a wave reaches it. That change may be electrical, chemical, magnetic, or thermal, but it can then be measured and turned into data.

Many natural signals are analog signals, meaning they vary continuously. The pressure changes in a spoken sentence are analog. The changing brightness of a scene entering your eye is analog. Modern technologies often convert these continuous signals into digital form because digital information is easier to copy, store, process, and correct.

A digital signal represents information using discrete values. For example, a microphone in a phone samples a sound wave many times each second. Each sample becomes a number. Those numbers are encoded as bits and transmitted electronically or by electromagnetic waves.
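Sampling and encoding can be sketched in a few lines: measure a wave at regular instants, round each measurement to one of a fixed number of levels, and write each level as bits. The tone, the 8,000-per-second sample rate, and the 8-bit depth are assumptions chosen to keep the printout short; CD-quality audio, for comparison, uses 16-bit samples. The function name is hypothetical.

```python
import math

def sample_and_quantize(frequency_hz, sample_rate_hz, n_samples, bits=8):
    """Sample a unit-amplitude sine wave and round each sample to an integer code.

    Codes run from 0 to 2**bits - 1, with the middle code standing for zero pressure.
    """
    levels = 2 ** bits
    codes = []
    for n in range(n_samples):
        t = n / sample_rate_hz                            # time of the n-th sample
        value = math.sin(2 * math.pi * frequency_hz * t)  # between -1 and 1
        # Map [-1, 1] onto [0, levels - 1] and round to the nearest whole code.
        codes.append(round((value + 1) / 2 * (levels - 1)))
    return codes

codes = sample_and_quantize(frequency_hz=1_000, sample_rate_hz=8_000, n_samples=8, bits=8)
print(codes)
print([format(c, "08b") for c in codes])  # each sample written as an 8-bit pattern
```

More bits per sample give finer steps between levels, at the cost of more data per second.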

If a song is sampled \(44{,}100\) times per second, the system can represent frequencies up to half that rate, \(22{,}050 \textrm{ Hz}\), which is above the upper limit of human hearing (about \(20{,}000 \textrm{ Hz}\)). The exact engineering involves more detail, but the main idea is simple: a changing wave is translated into a sequence of measurements.
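The reason a sample rate is tied to a frequency limit is the Nyquist–Shannon sampling theorem: a sampler can only represent frequencies below half its sample rate. A tone above that limit "aliases", producing exactly the same samples as a lower tone. The sketch below checks this for a hypothetical 30,000 Hz tone; the helper names are our own.

```python
import math

def nyquist_limit(sample_rate_hz):
    """Highest frequency a sampler can represent unambiguously: half the sample rate."""
    return sample_rate_hz / 2

def samples(frequency_hz, sample_rate_hz, n_samples):
    """Sample cos(2*pi*f*t) at t = n / sample_rate."""
    return [math.cos(2 * math.pi * frequency_hz * n / sample_rate_hz) for n in range(n_samples)]

rate = 44_100
print(nyquist_limit(rate))  # 22050.0

# A 30,000 Hz tone is above the limit. Its samples match those of a
# 44,100 - 30,000 = 14,100 Hz tone, so the two cannot be told apart afterward.
high = samples(30_000, rate, 10)
alias = samples(rate - 30_000, rate, 10)
print(max(abs(a - b) for a, b in zip(high, alias)))  # only floating-point rounding remains
```

This is why recording equipment filters out frequencies above the limit before sampling.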

Numeric example: using the wave relation

An FM radio station broadcasts near \(100 \textrm{ MHz}\), which means \(100{,}000{,}000 \textrm{ Hz}\). Radio waves travel at about \(3.0 \times 10^8 \textrm{ m/s}\) in air.

Step 1: Use the relation

\(\lambda = \dfrac{v}{f}\)

Step 2: Substitute values

\(\lambda = \dfrac{3.0 \times 10^8}{1.0 \times 10^8} = 3.0 \textrm{ m}\)

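The wavelength is about \(3.0 \textrm{ m}\). Antenna design depends strongly on this scale; for example, a half-wave dipole antenna for this station would be roughly \(\lambda/2 \approx 1.5 \textrm{ m}\) long.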
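The same calculation can be extended across the whole FM broadcast band, which runs from 88 to 108 MHz in most countries. This sketch reuses the rounded speed \(3.0 \times 10^8 \textrm{ m/s}\) from the example; the function name is our own.

```python
SPEED_OF_LIGHT = 3.0e8  # m/s, the rounded value used in the worked example

def wavelength_m(frequency_hz, speed=SPEED_OF_LIGHT):
    """lambda = v / f, in metres."""
    return speed / frequency_hz

for mhz in (88.0, 100.0, 108.0):
    print(f"{mhz:6.1f} MHz -> {wavelength_m(mhz * 1e6):.2f} m")
```

Every station in the band has a wavelength close to 3 m, so one antenna size can serve the whole band reasonably well.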

Digital systems are powerful because small distortions do not always destroy the message. If a receiver can still distinguish the intended pattern, it can reconstruct the original bits. That is one reason digital photos, text messages, and streamed videos can remain useful even when the signal is not perfect.
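A simple way to see why digital signals tolerate small distortions: send each bit as one of two voltage levels, add random noise, and decide each bit by comparing the received voltage with a threshold. The ±1 V levels, the 0 V threshold, and the noise size are assumptions for illustration, and `transmit`/`receive` are hypothetical names.

```python
import random

def transmit(bits, noise_amplitude, rng):
    """Send each bit as a voltage (+1 V for 1, -1 V for 0) and add random noise."""
    return [(1.0 if b else -1.0) + rng.uniform(-noise_amplitude, noise_amplitude) for b in bits]

def receive(voltages, threshold=0.0):
    """Decide each bit by comparing the received voltage to a threshold."""
    return [1 if v > threshold else 0 for v in voltages]

rng = random.Random(42)  # fixed seed so the run is repeatable
message = [1, 0, 1, 1, 0, 0, 1, 0]
received = transmit(message, noise_amplitude=0.4, rng=rng)
decoded = receive(received)
print([round(v, 2) for v in received])
print(decoded == message)  # True: noise of at most 0.4 V never pushes a level across 0 V
```

The received voltages are all slightly wrong, yet every bit is recovered exactly; errors only appear once the noise is large enough to cross the threshold.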

Still, no system is immune to noise. Noise is any unwanted random or structured disturbance added to a signal. Static on a radio, grain in an image, and interference in a network connection are all examples. Engineers reduce noise through shielding, filtering, amplification, error correction, and smart choice of frequency ranges.
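Error correction can be illustrated with the simplest possible scheme, a 3× repetition code: send every bit three times and decode by majority vote. Real networks use far more efficient codes; this is only a sketch of the principle, with our own function names.

```python
def encode(bits, copies=3):
    """Repeat every bit `copies` times (a simple repetition code)."""
    return [b for b in bits for _ in range(copies)]

def decode(coded, copies=3):
    """Recover each bit by majority vote over its group of copies."""
    out = []
    for i in range(0, len(coded), copies):
        group = coded[i:i + copies]
        out.append(1 if sum(group) > len(group) / 2 else 0)
    return out

message = [1, 0, 1, 1]
sent = encode(message)     # [1,1,1, 0,0,0, 1,1,1, 1,1,1]
corrupted = sent.copy()
corrupted[1] ^= 1          # noise flips one copy of the first bit
corrupted[5] ^= 1          # ...and one copy of the second bit
print(decode(corrupted) == message)  # True: each group still has a 2-of-3 majority
```

The cost is three times as much data, and two flips within the same group would still produce an error.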

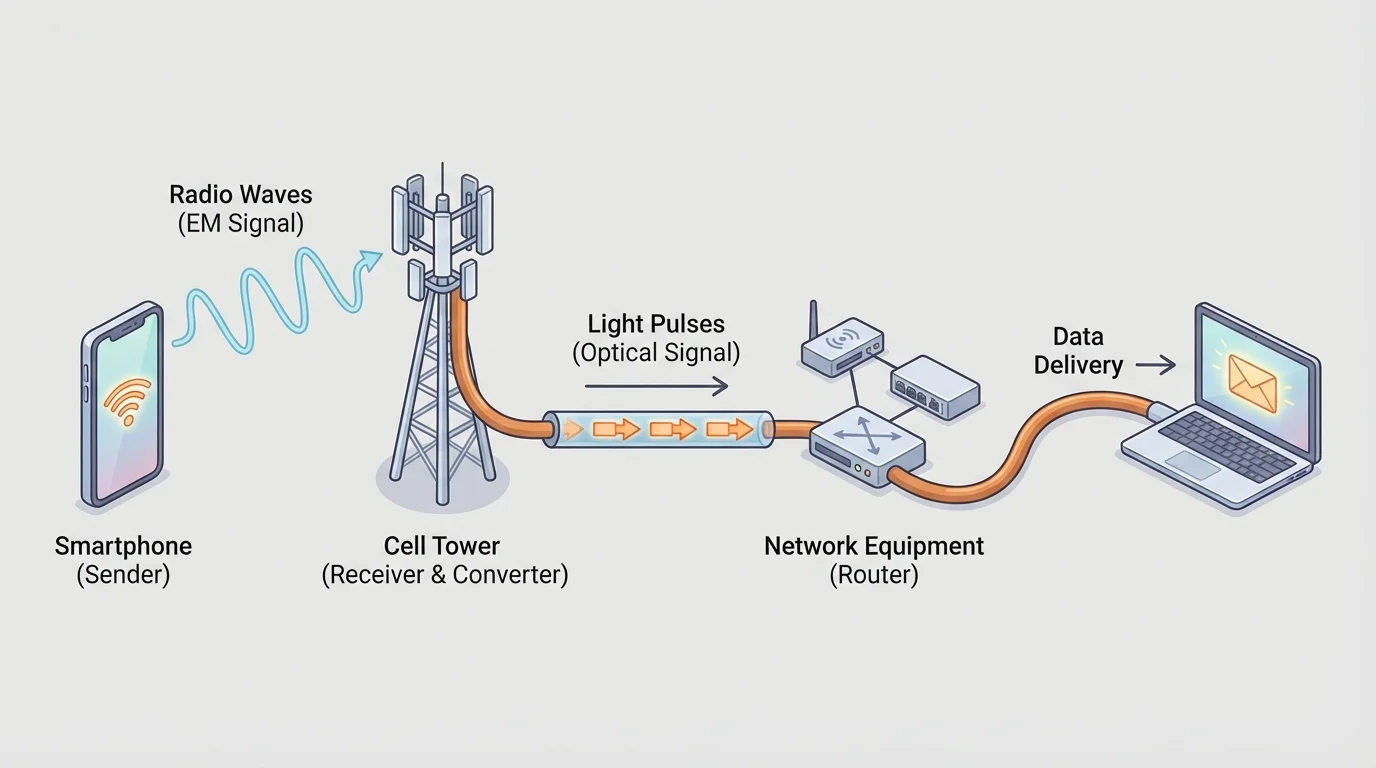

When you send a message, it often passes through several wave-based stages, as [Figure 3] shows in a typical path from a phone to a network and back to another device. Your words may begin as sound waves, become electrical signals in a microphone, convert into radio waves between your phone and a nearby tower, travel as light pulses through fiber optic cables, and finally convert back again at the receiving end.

The choice of frequency band matters. Different frequencies travel differently through air, walls, rain, and space. Low-frequency radio waves can travel far and diffract around obstacles more easily. Higher frequencies can carry more data in some cases, but they may be absorbed more strongly or require more direct paths.

Cell phones use radio waves to communicate with nearby base stations. The system divides regions into cells so the same frequencies can be reused in different places. Wi-Fi also uses radio waves, but over shorter distances. Bluetooth is designed for very short-range, low-power communication.

Fiber optics use visible or infrared light traveling through thin glass fibers. The light is kept inside the fiber by total internal reflection, bouncing repeatedly off the boundary between the glass core and a surrounding cladding layer, so signals can travel long distances with low loss. This is one reason the internet's backbone depends heavily on optical fibers.
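Total internal reflection only happens when light strikes the core–cladding boundary at a steep enough angle. Snell's law gives the critical angle, \(\sin\theta_c = n_\text{cladding}/n_\text{core}\), measured from the normal. The refractive indices below are assumed illustrative values, not taken from any particular fiber's datasheet.

```python
import math

def critical_angle_deg(n_core, n_cladding):
    """Angle of incidence (from the normal) above which light is totally internally reflected.

    From Snell's law, n_core * sin(theta_c) = n_cladding * sin(90 degrees).
    Only defined when the core's index is larger than the cladding's.
    """
    if n_core <= n_cladding:
        raise ValueError("total internal reflection needs n_core > n_cladding")
    return math.degrees(math.asin(n_cladding / n_core))

# Illustrative refractive indices (assumed).
n_core, n_cladding = 1.48, 1.46
theta_c = critical_angle_deg(n_core, n_cladding)
print(f"critical angle ~ {theta_c:.1f} degrees")

for angle in (85.0, 70.0):  # two example rays, measured from the normal
    trapped = angle > theta_c
    print(f"ray at {angle:.0f} degrees: {'stays in core' if trapped else 'partly escapes into cladding'}")
```

Only rays traveling nearly parallel to the fiber's axis stay trapped, which is why light is launched into a fiber along its axis.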

Satellites relay signals over enormous distances. A satellite communication system sends radio or microwave signals upward, the satellite receives and retransmits them, and ground receivers decode them. Weather satellites, GPS systems, and live global broadcasts all rely on this principle.

Wave choice is always a trade-off. Engineers balance range, data rate, energy use, antenna size, atmospheric effects, and interference. Looking back at [Figure 3], the conversions between radio and light are not unnecessary complications; they let each part of the network use the wave type best suited to that part of the job.

Fiber optic cables carry information as flashes of light, and a single strand can transmit enormous amounts of data because light has extremely high frequency and can be modulated very rapidly.
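To put "extremely high frequency" in numbers, compare the frequency of infrared light near 1550 nm, a wavelength commonly used in long-distance fiber, with the 100 MHz FM station from the earlier example. This sketch uses \(f = v/\lambda\) with a rounded vacuum speed of light; actual data rates depend on far more than the carrier frequency.

```python
SPEED_OF_LIGHT = 3.0e8  # m/s (rounded)

def frequency_hz(wavelength_m, speed=SPEED_OF_LIGHT):
    """f = v / lambda."""
    return speed / wavelength_m

optical = frequency_hz(1550e-9)  # infrared light commonly used in long-distance fiber
fm_radio = 100e6                 # the FM station from the earlier example
print(f"optical carrier ~ {optical:.2e} Hz")
print(f"about {optical / fm_radio:,.0f} times the FM frequency")
```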

Even barcode scanners and QR code readers are communication devices in a sense. They send out light, detect the reflected pattern, and convert differences in reflection into digital information. The object being scanned stores information in a spatial pattern, and the detector reads that pattern using wave interactions with matter.
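The first step of reading a barcode can be sketched as turning a row of reflected-light measurements into runs of light and dark. The reflectance values and the 0.5 threshold are hypothetical; a real scanner would then translate bar widths into characters according to a barcode standard, which this sketch does not attempt.

```python
def read_bars(reflectance, threshold=0.5):
    """Turn reflectance samples (0 = dark, 1 = bright) into runs of (color, width)."""
    runs = []
    for r in reflectance:
        color = "light" if r >= threshold else "dark"
        if runs and runs[-1][0] == color:
            runs[-1][1] += 1          # same color as before: widen the current run
        else:
            runs.append([color, 1])   # color changed: start a new run
    return [(c, w) for c, w in runs]

scan = [0.9, 0.9, 0.1, 0.1, 0.1, 0.8, 0.2, 0.9, 0.9]  # hypothetical scan line
print(read_bars(scan))
```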

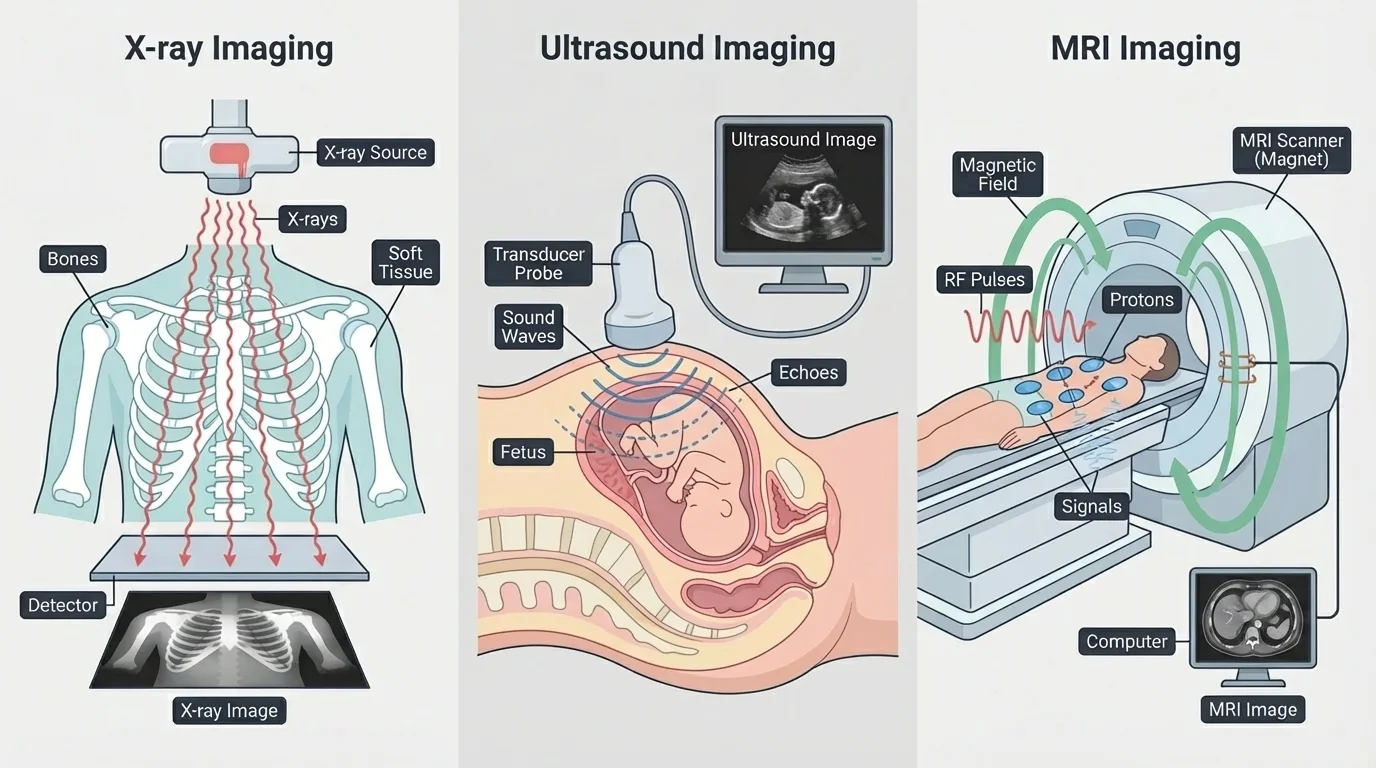

Medical imaging is one of the clearest examples of wave-matter interaction revealing hidden structure, and [Figure 4] compares three major approaches that use different physical principles. The body does not respond the same way to every wave, so each method reveals different features.

X-ray imaging uses high-energy electromagnetic waves. Soft tissue allows more X-rays to pass through than dense bone does, so less radiation reaches the detector behind bone, and bones appear bright in the resulting image. This is why X-rays are especially good for fractures and certain dental images.

Ultrasound uses high-frequency sound waves. The waves reflect from boundaries between tissues, and the returning echoes are used to build an image. Ultrasound is widely used in pregnancy monitoring and heart imaging because it does not use ionizing radiation.
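Echoes become an image because timing reveals depth: a pulse travels to a tissue boundary and back, so the boundary's depth is half of speed × round-trip time. This sketch assumes the average speed of sound in soft tissue that ultrasound scanners commonly use, about 1540 m/s; the echo delays are hypothetical.

```python
SPEED_IN_SOFT_TISSUE = 1540.0  # m/s, the average value ultrasound scanners commonly assume

def echo_depth_m(round_trip_time_s, speed=SPEED_IN_SOFT_TISSUE):
    """Depth of a reflecting boundary from the echo's round-trip time.

    The pulse travels to the boundary and back, so the one-way distance
    is half of speed * time.
    """
    return speed * round_trip_time_s / 2

for t_us in (13.0, 65.0, 130.0):  # hypothetical echo delays in microseconds
    depth_cm = echo_depth_m(t_us * 1e-6) * 100
    print(f"echo after {t_us:.0f} us -> boundary about {depth_cm:.1f} cm deep")
```

Repeating this for many pulses aimed in slightly different directions builds up a cross-sectional picture.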

MRI, or magnetic resonance imaging, uses strong magnetic fields and radio waves. Instead of simply passing waves through the body, MRI depends on how atomic nuclei in tissues respond to magnetic fields and radio-frequency pulses. The returning signals are processed by computers to create detailed images, especially of soft tissues.
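Why radio waves? Hydrogen nuclei in a magnetic field of strength \(B\) respond most strongly to radio waves near the Larmor frequency \(f = \bar{\gamma} B\), where \(\bar{\gamma} \approx 42.58 \textrm{ MHz/T}\) for hydrogen. The sketch below evaluates this for 1.5 T and 3 T, two common clinical magnet strengths; it ignores everything else an MRI scanner does.

```python
GAMMA_BAR_HYDROGEN_MHZ_PER_T = 42.58  # hydrogen-1 gyromagnetic ratio / (2*pi), rounded

def larmor_frequency_mhz(field_tesla):
    """Radio frequency (MHz) at which hydrogen nuclei resonate in a field of `field_tesla`."""
    return GAMMA_BAR_HYDROGEN_MHZ_PER_T * field_tesla

for b in (1.5, 3.0):
    print(f"{b} T magnet -> about {larmor_frequency_mhz(b):.1f} MHz")
```

Both results fall in the radio range, close to the FM broadcast frequencies discussed earlier.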

Each method has strengths and limitations. X-rays are fast and effective for bones but involve ionizing radiation. Ultrasound is safe for many uses but may not image every structure equally well. MRI provides extraordinary soft-tissue detail but is expensive, slower, and not suitable in every situation.

Other scanners work on similar ideas. Airport scanners, industrial flaw detectors, and supermarket barcode readers all rely on producing waves, receiving altered waves, and interpreting the differences. In science labs, detectors can count photons, measure wavelengths, or record time delays to reconstruct an event or image.

Real-world comparison: why hospitals use multiple imaging tools

Step 1: Match the wave to the question

If a doctor suspects a broken arm, X-rays are useful because bone absorbs X-rays more strongly than the surrounding soft tissue.

Step 2: Use echoes when boundaries matter

If the goal is to observe a fetus or heart valve motion, ultrasound is valuable because reflected sound can reveal moving soft tissues in real time.

Step 3: Use resonance for detailed soft tissue

If the concern is a ligament tear or brain structure, MRI often provides more detailed soft-tissue information.

The "best" technology depends on how the wave interacts with the matter being examined.

Resolution also matters. To detect small details, the probing wave usually needs a wavelength comparable to or smaller than the feature size. That is one reason visible light, with wavelengths of roughly 400 to 700 nm, cannot resolve atomic structures, which are far smaller than 1 nm, while shorter-wavelength methods can reveal finer detail.
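The wavelength rule can be made concrete with two comparisons. The first uses \(\lambda = v/f\) for ultrasound in soft tissue, assuming an average speed of 1540 m/s and a few illustrative probe frequencies. The second compares green light (about 500 nm) with an assumed order-of-magnitude atomic spacing of 0.2 nm.

```python
def wavelength_m(speed_m_per_s, frequency_hz):
    """lambda = v / f, in metres."""
    return speed_m_per_s / frequency_hz

# Ultrasound in soft tissue (assumed average speed 1540 m/s) at a few probe frequencies.
for f_mhz in (2.0, 5.0, 10.0):
    lam_mm = wavelength_m(1540.0, f_mhz * 1e6) * 1000
    print(f"{f_mhz:4.1f} MHz ultrasound -> wavelength about {lam_mm:.2f} mm")

# Green light compared with a rough atomic spacing (order of magnitude only).
green_light_m = 500e-9
atomic_spacing_m = 0.2e-9
print(f"green light is ~{green_light_m / atomic_spacing_m:,.0f} times longer than the atomic spacing")
```

Higher-frequency ultrasound probes therefore resolve finer detail, and visible light is thousands of times too coarse to see individual atoms.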

Information is only useful if it can be captured and kept. A microphone captures sound because a diaphragm vibrates with incoming pressure waves and converts that motion into an electrical signal. A camera captures light because photosensitive materials or semiconductor pixels respond to incoming photons.

In digital cameras, sensors such as CCD or CMOS arrays measure light intensity at many tiny locations. Color information is often obtained using filters that separate red, green, and blue light. The result is not "a picture" at first; it is a large data set that software processes into an image.

Storage technologies also depend on controlled physical states. Optical discs use pits and reflective surfaces that are read by laser light. Magnetic storage uses differently oriented magnetic domains. Solid-state memory stores charge states in tiny electronic structures. In every case, matter is arranged so that a detector can later distinguish one state from another reliably.

The same basic logic appears again and again: encode a pattern, preserve the pattern, and retrieve the pattern. Whether the pattern is a song file, a medical scan, or telescope data, information remains meaningful only if the system can read it back accurately.

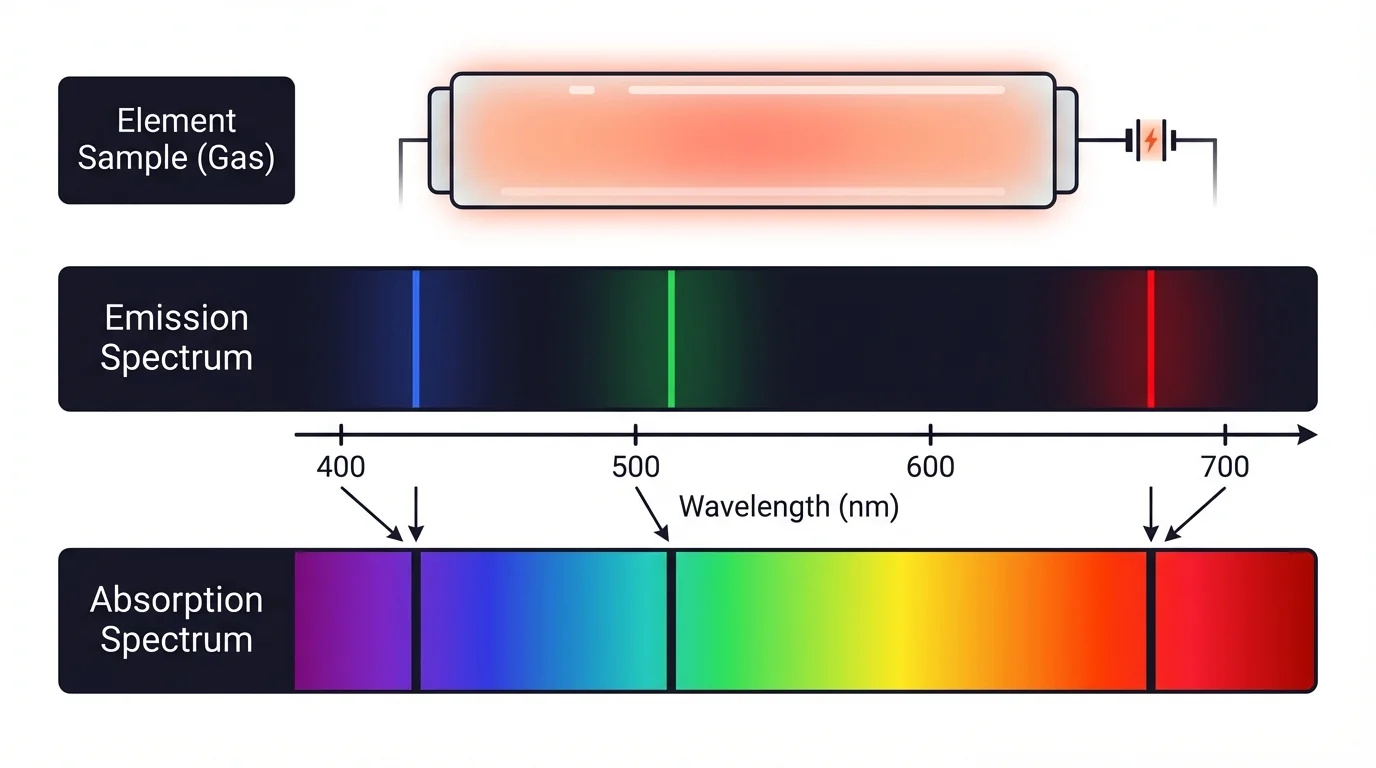

In research, waves do more than carry information; they reveal what matter is made of. Each substance can leave a characteristic pattern in the waves it emits or absorbs, and [Figure 5] highlights how a spectrum acts like a fingerprint for matter. This idea is central to spectroscopy.

A spectrum is the distribution of wave intensity across different wavelengths or frequencies. When atoms or molecules absorb particular energies, dark lines can appear in a continuous spectrum. When excited atoms emit particular energies, bright lines appear. By measuring these patterns, scientists identify elements and compounds.
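Identifying a substance from its lines can be sketched as pattern matching: for each candidate element, count how many of its known lines appear in the measurement. The reference wavelengths are rounded values of well-known visible lines; the measured lines and the 0.3 nm tolerance are hypothetical. Real spectroscopy also accounts for line strengths, Doppler shifts, and instrument resolution, which this sketch ignores.

```python
# Reference wavelengths (nm) of a few well-known visible lines, rounded to 0.1 nm.
REFERENCE_LINES_NM = {
    "hydrogen": [656.3, 486.1, 434.0, 410.2],
    "sodium": [589.0, 589.6],
    "helium": [667.8, 587.6, 501.6, 447.1],
}

def match_fraction(measured_nm, reference_nm, tolerance_nm=0.3):
    """Fraction of an element's reference lines that appear in the measured list."""
    hits = sum(
        1 for ref in reference_nm
        if any(abs(ref - m) <= tolerance_nm for m in measured_nm)
    )
    return hits / len(reference_nm)

def best_match(measured_nm):
    """Return (element, score) for the element whose lines are best accounted for."""
    scores = {el: match_fraction(measured_nm, lines) for el, lines in REFERENCE_LINES_NM.items()}
    element = max(scores, key=scores.get)
    return element, scores[element]

measured = [656.2, 486.2, 434.1, 410.1]  # hypothetical lines from an unknown source
print(best_match(measured))
```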

Astronomers use spectroscopy to determine the composition of stars, the motion of galaxies, and even the presence of atmospheres around exoplanets. Chemists use it to study molecules. Environmental scientists use it to detect pollutants. None of this requires direct contact with the sample; the information is contained in the wave pattern.

Radio telescopes detect radio waves from space. Optical telescopes collect visible light. Infrared detectors reveal cooler objects hidden by dust. X-ray observatories reveal extreme environments such as black hole surroundings and supernova remnants. The universe looks different in each wave band because matter produces and interacts with those waves differently.

The idea highlighted in [Figure 5] extends beyond astronomy. The same idea of characteristic spectral lines is used in laboratory analysis, materials science, and forensic work. The detector does not need to "see" in a human sense; it only needs to measure a repeatable pattern that matter produces.

This connects to the structure of atoms: electrons in atoms occupy specific energy levels. When they move between levels, atoms absorb or emit specific amounts of energy, which correspond to specific wavelengths. That is why spectral lines are not random.
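For hydrogen, the link between energy levels and line positions has a simple closed form, the Rydberg formula. For electrons dropping to level 2 (the visible Balmer series), \(1/\lambda = R\left(1/2^2 - 1/n^2\right)\), with \(R \approx 1.097 \times 10^7 \textrm{ m}^{-1}\). This formula applies to hydrogen only; other elements need more detailed models.

```python
RYDBERG_PER_M = 1.097e7  # Rydberg constant for hydrogen, rounded (1/m)

def balmer_wavelength_nm(n_upper):
    """Wavelength emitted when hydrogen's electron drops from level n_upper to level 2."""
    if n_upper <= 2:
        raise ValueError("n_upper must be 3 or more for the Balmer series")
    inverse_wavelength = RYDBERG_PER_M * (1 / 2**2 - 1 / n_upper**2)  # in 1/m
    return 1e9 / inverse_wavelength  # metres -> nanometres

for n in range(3, 7):
    print(f"n = {n} -> 2: {balmer_wavelength_nm(n):.1f} nm")
```

The predicted wavelengths land near 656, 486, 434, and 410 nm, the observed red, blue-green, and violet lines of hydrogen.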

Researchers also use waves to probe structures too small or too hidden for ordinary vision. Electron microscopes, scanning instruments, and diffraction-based methods all depend on interactions between waves and matter to reveal arrangement, size, and composition.

No technology based on waves is perfect. Signals weaken with distance. Materials absorb some wavelengths more than others. Scattering can blur images. Noise can hide patterns. Resolution has physical limits. Devices must balance sensitivity, power, cost, and safety.

One major safety issue is the difference between ionizing and non-ionizing radiation. Ionizing radiation, such as X-rays and gamma rays, has enough energy to remove electrons from atoms and can damage biological tissue. Non-ionizing radiation, such as radio waves, microwaves, visible light, and most infrared, generally does not ionize atoms, though high intensities can still cause heating or eye damage.
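The ionizing/non-ionizing divide follows from photon energy, \(E = hf = hc/\lambda\). This sketch compares three example wavelengths against a rough threshold of about 10 eV; that threshold is an assumed rule of thumb, since the energy needed to ionize varies between atoms and molecules.

```python
PLANCK_EV_S = 4.135667e-15  # Planck constant in eV*s
SPEED_OF_LIGHT = 2.998e8    # m/s

def photon_energy_ev(wavelength_m):
    """E = h * f = h * c / lambda, returned in electronvolts."""
    return PLANCK_EV_S * SPEED_OF_LIGHT / wavelength_m

# Rough rule of thumb (assumed): photons above about 10 eV can ionize many atoms.
IONIZING_THRESHOLD_EV = 10.0

examples = {
    "FM radio (3 m)": 3.0,
    "green light (500 nm)": 500e-9,
    "diagnostic X-ray (0.02 nm)": 0.02e-9,
}
for name, lam in examples.items():
    e = photon_energy_ev(lam)
    label = "ionizing" if e > IONIZING_THRESHOLD_EV else "non-ionizing"
    print(f"{name:28s} {e:10.3g} eV  ({label})")
```

A radio photon carries millions of times too little energy to ionize an atom, which is why radio-frequency safety limits focus on heating rather than ionization.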

Numeric example: travel time of a signal

A pulse travels through a fiber optic cable at about \(2.0 \times 10^8 \textrm{ m/s}\). If the cable length is \(40{,}000 \textrm{ m}\), how long does the signal take to travel?

Step 1: Use the relation

\(t = \dfrac{d}{v}\)

Step 2: Substitute values

\(t = \dfrac{40{,}000}{2.0 \times 10^8} = 2.0 \times 10^{-4} \textrm{ s}\)

Step 3: Interpret the result

\(2.0 \times 10^{-4} \textrm{ s} = 0.0002 \textrm{ s} = 0.2 \textrm{ ms}\)

The pulse takes \(0.2 \textrm{ ms}\) to travel through the fiber.
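The same steps in Python, with a comparison added: light in glass travels more slowly than in a vacuum, so the same distance takes longer in the fiber. The function name is our own, and the vacuum speed is rounded.

```python
def travel_time_s(distance_m, speed_m_per_s):
    """Time for a signal to cover distance_m at speed_m_per_s: t = d / v."""
    if speed_m_per_s <= 0:
        raise ValueError("speed must be positive")
    return distance_m / speed_m_per_s

t_fiber = travel_time_s(40_000, 2.0e8)
print(f"in the fiber: {t_fiber:.1e} s = {t_fiber * 1000:.1f} ms")

# For comparison, the same distance at the vacuum speed of light (~3.0e8 m/s).
t_vacuum = travel_time_s(40_000, 3.0e8)
print(f"in a vacuum:  {t_vacuum * 1000:.3f} ms")
```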

There are also social issues. Scanners and sensors can improve safety and health, but they can raise questions about privacy, data ownership, and equitable access. A highly advanced imaging system is only beneficial if people can actually obtain and use it responsibly.

The deeper lesson is that understanding waves is not just about physics formulas. It is about understanding how nature allows information to be carried, transformed, and revealed. The same principles connect a radio broadcast, a medical diagnosis, a digital photograph, and a scientific discovery.