A single article can raise alarm, build trust, spark action, or be ignored completely. That is one of the most important truths about reading informational texts: the words on the page matter, but so does the audience receiving them. A public health advisory about rising measles cases may push one group to get vaccinated immediately, while another group responds with skepticism because of prior beliefs. If you can predict that impact and justify your prediction with evidence, you are reading at a much deeper level than someone who only identifies the topic.

To predict the impact of an informational text means to make a reasoned judgment about how the text is likely to affect its audience. That effect might be intellectual, such as changing what people know; emotional, such as creating fear or urgency; behavioral, such as leading people to vote, donate, or change habits; or social, such as shaping public debate. A strong prediction is never just a guess. It grows out of close reading.

When skilled readers evaluate informational writing, they ask two connected questions: "What is this text doing?" and "What is it likely to do to the people who read it?" Those questions matter in journalism, science communication, politics, advertising, law, health campaigns, and school settings. They also matter online, where headlines, graphics, statistics, and short articles can influence thousands of people within minutes.

Informational texts are often treated as if they simply deliver facts, but facts are always presented in a particular way. A climate report can present the same data in a calm, technical style for scientists or in dramatic, urgent language for the general public. The core information may be similar, yet the impact changes because the presentation changes.

This is why audience impact matters: texts do not exist in a vacuum. They are designed, intentionally or unintentionally, to produce responses. Some texts aim to inform. Others aim to persuade, warn, clarify, challenge misconceptions, or build support for a cause or policy. Even a straightforward school announcement shapes behavior by telling readers what matters and what action is expected.

Audience is the group of readers, listeners, or viewers a text addresses or reaches. Impact is the effect a text has on that audience's thoughts, feelings, beliefs, or actions. Purpose is the writer's main reason for creating the text. Claim is an assertion the writer wants the audience to accept, and evidence is the support used to back it up.

Predicting impact is not mind reading. You are not proving exactly what every reader will think. Instead, you are identifying the most likely effects on a reasonable audience or on a specific audience group, based on textual clues and context.

As you read, one of the first things to notice is the tone of the text. Tone is the writer's attitude as expressed through diction, emphasis, and style. A measured tone may create trust; an accusatory tone may provoke defensiveness; a celebratory tone may encourage approval. Tone matters because audiences often respond not only to information but to the manner in which it is delivered.

You should also pay attention to context. Context includes the circumstances surrounding a text: when it was written, where it appears, what event or debate prompted it, and what readers already know or believe. A water conservation article published during a severe drought will likely have a stronger effect than the same article published after months of heavy rain.

Another important idea is credibility, or how trustworthy the source appears. Readers are more likely to be influenced by a medical article published by a respected hospital than by an anonymous social media post with no citations. Source, expertise, evidence quality, and transparency all shape impact.

Professional communicators often test headlines, images, and wording before publication because small changes in presentation can significantly alter audience response, even when the underlying information stays the same.

Finally, readers should watch for bias. Bias does not always mean a text is false, but it does mean the writer may be presenting information from a particular angle. Recognizing bias helps you predict whether readers will find the text convincing, misleading, fair, or one-sided.

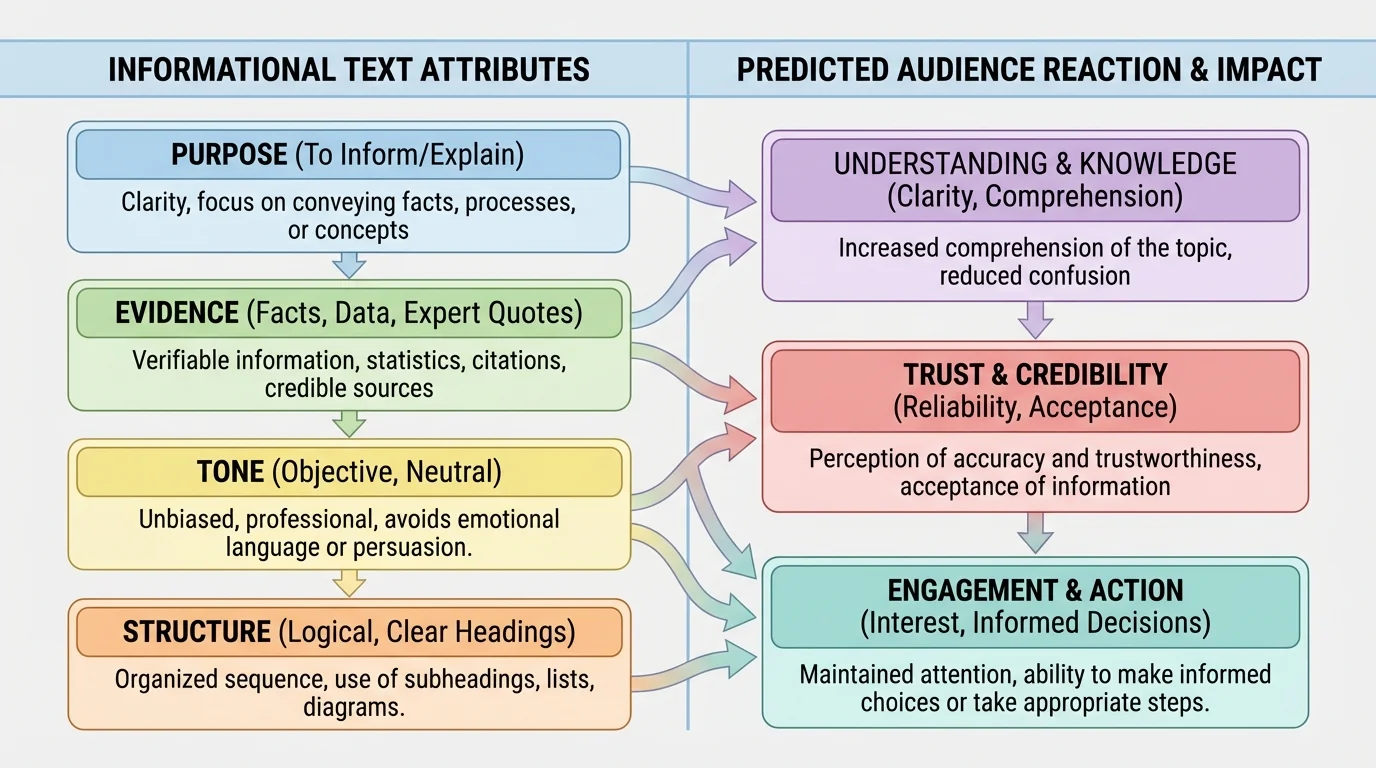

To predict audience impact well, begin with the text itself. Several textual features work together to influence readers, as [Figure 1] illustrates through the relationship among purpose, evidence, tone, and structure. You should examine the central idea, the supporting details, the kind of evidence used, the organization of ideas, and the language choices that shape emphasis.

Start with key ideas and details. What is the text mainly saying? Which facts, examples, statistics, quotations, or explanations receive the most space? Writers signal what they want audiences to notice by selecting and emphasizing certain details. If an article about teen sleep deprivation highlights brain function, accident risk, and academic performance, the likely impact is increased concern and stronger support for later school start times.

Evidence matters just as much as content. Statistics can create a sense of scale and seriousness. Expert testimony can build authority. Personal anecdotes can humanize an issue. Cause-and-effect explanations can make readers feel that a problem is understandable and solvable. Weak evidence, however, may reduce the text's impact because readers question its reliability.

Structure also shapes effect. A problem-solution structure often pushes audiences toward action. A compare-and-contrast structure may help audiences weigh options. A chronological structure can build momentum, especially in investigative reporting or historical explanation. If a report begins with a shocking local example before moving to national data, it may grab attention more effectively than a purely abstract opening.

Language choices are another clue. Words such as crisis, urgent, and alarming create intensity. Words such as preliminary, limited, and mixed results signal caution. The difference between saying a policy will "burden taxpayers" and saying it will "require public investment" is not just stylistic. It changes how audiences are likely to interpret the issue.

Later, when you justify a prediction, you should return to these textual features. As we saw in [Figure 1], audience impact rarely comes from one isolated detail. It usually emerges from the combination of message, evidence, tone, and organization.

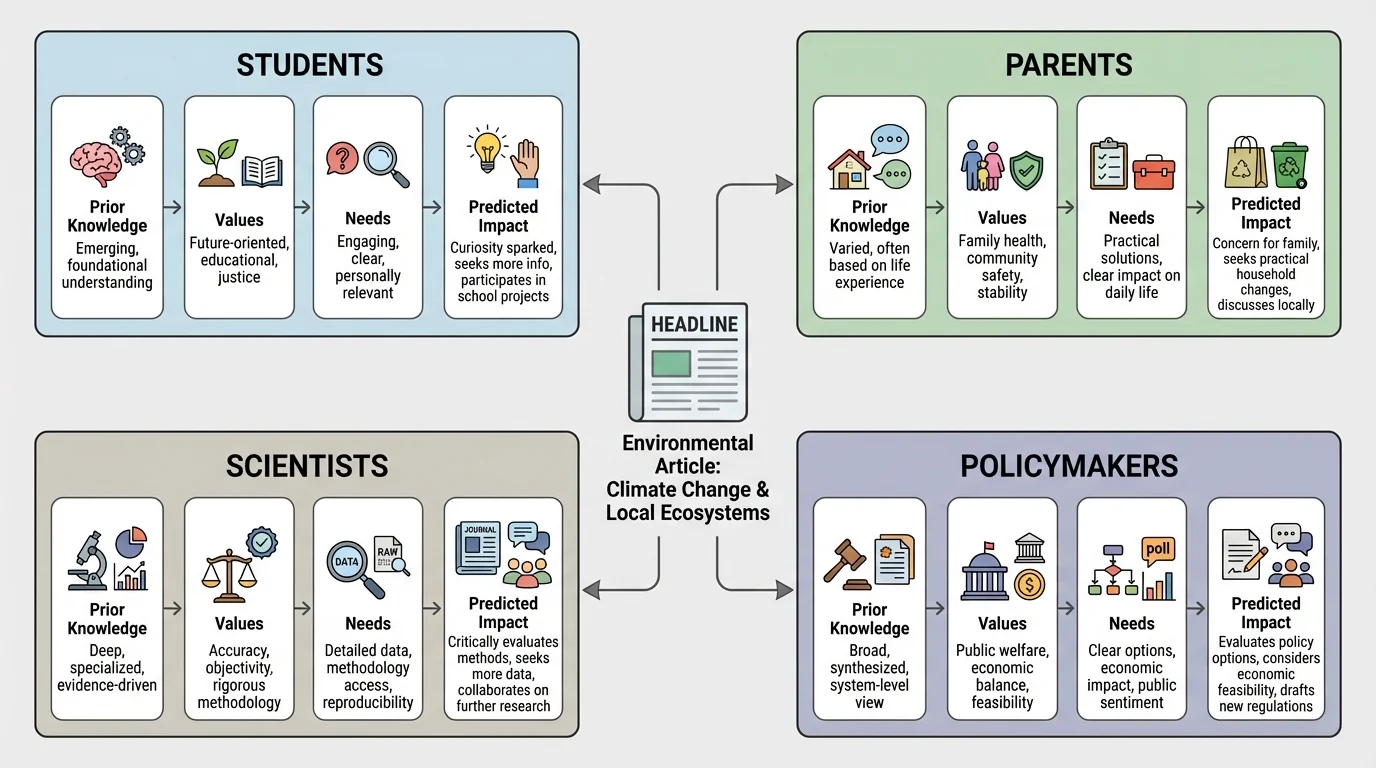

Predicting impact requires attention to the audience as well as the text. The same article can affect different groups in different ways, as [Figure 2] shows. You should ask who the intended audience is and who the broader audience might be.

One major factor is prior knowledge. Readers who already understand a topic may focus on nuance, while readers new to the issue may be shaped more strongly by introductory explanations. A detailed article on gene editing may reassure biology students who understand the science but confuse or alarm readers who do not.

Another factor is beliefs and values. As [Figure 2] suggests, a text on renewable energy might persuade readers who already prioritize environmental protection, but it may have a different impact on readers primarily worried about energy cost or government regulation. Readers do not enter a text as blank slates. They bring assumptions, identities, loyalties, and experiences.

Age, role, and immediate needs matter too. A school safety memo affects students, parents, teachers, and administrators differently because each group has different responsibilities and concerns. A student may focus on inconvenience, a parent on protection, and an administrator on implementation.

Cultural context can also shape response. References, examples, and assumptions that feel persuasive to one community may feel unfamiliar or unconvincing to another. Skilled readers therefore avoid saying, "This text will affect everyone the same way." A more accurate statement is usually, "This text will likely affect this audience in this way because..."

Impact depends on a match between text and audience. A highly credible, well-supported text may still have limited impact if the audience distrusts the source, lacks background knowledge, or feels the issue does not affect them. On the other hand, even a brief text can have strong impact if it reaches readers at the right moment with evidence and language that connect to their concerns.

This principle explains why identical information can lead to very different outcomes in public debate. Communication is not only about what is said; it is also about who hears it and under what conditions.

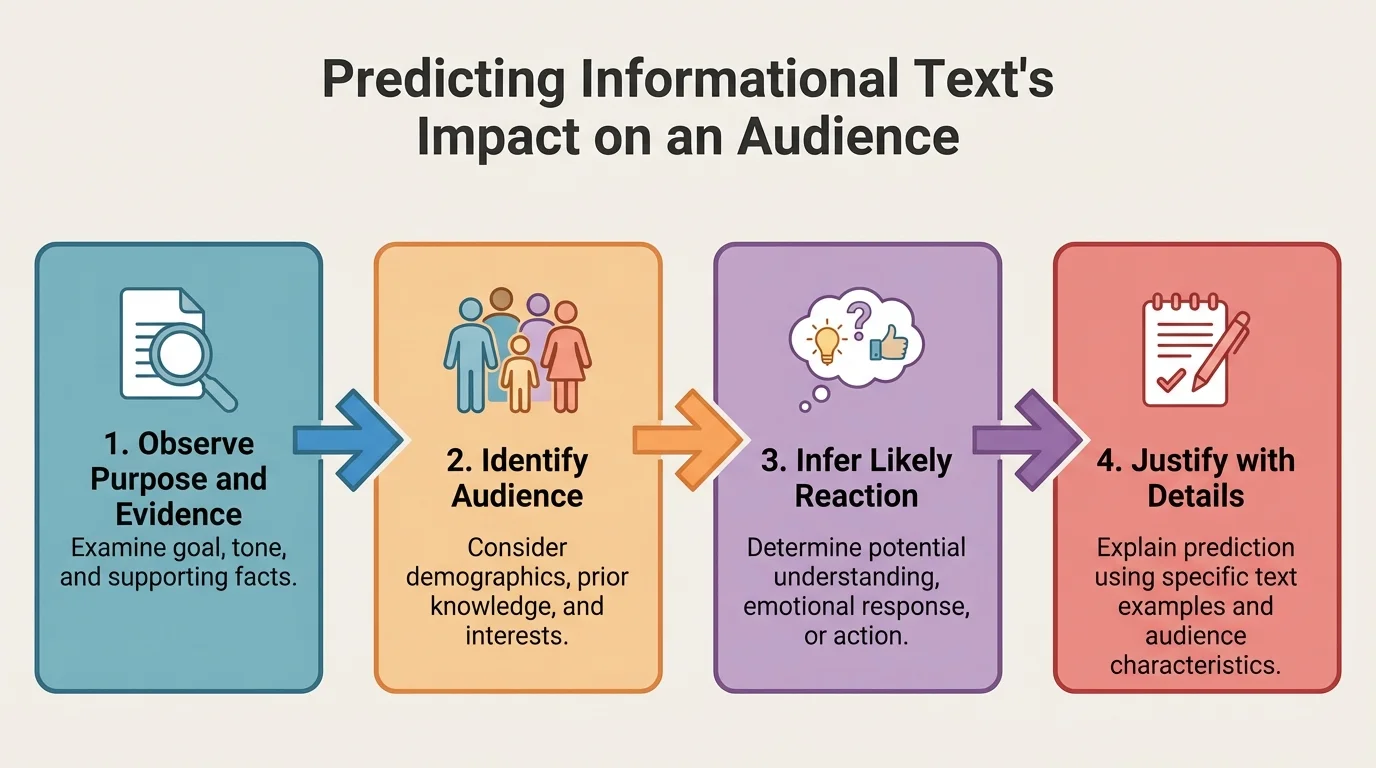

A strong prediction follows a logical process, outlined in [Figure 3]. Instead of jumping straight to a reaction, move from what you can point to in the text toward what that evidence suggests about likely audience response.

One effective method is to identify the writer's purpose, determine the target audience, examine key ideas and evidence, notice tone and structure, and then infer the most likely effect. After that, as [Figure 3] shows, justify the prediction using direct references to the text.

You can frame your reasoning in a pattern like this: "The text will likely affect audience X by causing response Y because it uses evidence A, tone B, and structure C." That sentence pattern forces you to connect the effect to actual features of the text.

For example, suppose a city website publishes an article about heat waves. The article includes local temperature data, quotes from doctors, maps of neighborhoods at highest risk, and clear instructions about hydration centers. A justified prediction would be: "The article will likely create urgency and encourage precaution among city residents because it combines local statistics, expert authority, and practical directions." That is much stronger than saying "This article is good" or "This article is convincing."

Case method for building a prediction

Text: A news article reports that a nearby river contains unsafe levels of pollution and includes test data, interviews with scientists, and photos of warning signs.

Step 1: Identify the purpose.

The article aims to inform the public and warn the community about a local environmental risk.

Step 2: Identify the likely audience.

Local residents, families, anglers, and public officials are the most immediate audience because the river affects them directly.

Step 3: Examine the features that shape reaction.

Scientific test results build credibility, expert interviews strengthen authority, and warning-sign images increase urgency.

Step 4: Make and justify the prediction.

The article will likely alarm local readers and increase support for cleanup efforts because it presents verified local evidence and shows visible signs of danger.

Notice that the prediction is not random and not purely emotional. It is anchored in evidence from the text and in knowledge of the audience.

Informational texts can affect audiences in several major ways. First is intellectual impact: readers learn facts, refine understanding, or reconsider earlier assumptions. A science feature that explains antibiotic resistance may not provoke strong emotion, but it may significantly deepen understanding.

Second is emotional impact. Even texts built on facts can create fear, hope, anger, admiration, or concern. A report on wildfire damage that includes survivor accounts and satellite images can make the issue feel immediate and personal.

Third is behavioral impact. Some texts are meant to change what people do: register to vote, conserve water, get a screening test, update privacy settings, or follow evacuation guidance. When a text includes specific action steps, deadlines, or consequences, it is often trying to move readers from awareness to action.

Fourth is social or civic impact. Some texts shape public conversation, influence policy support, or shift how communities define a problem. An investigative report on unsafe working conditions may pressure companies, regulators, and consumers all at once.

When justifying an interpretation, return to concrete textual evidence. A prediction about audience impact should work the same way: claim an effect, then support it with the text's ideas, details, structure, and language.

These categories often overlap. A text may inform readers, disturb them emotionally, and motivate action at the same time. In fact, powerful informational texts often work because they combine several kinds of impact.

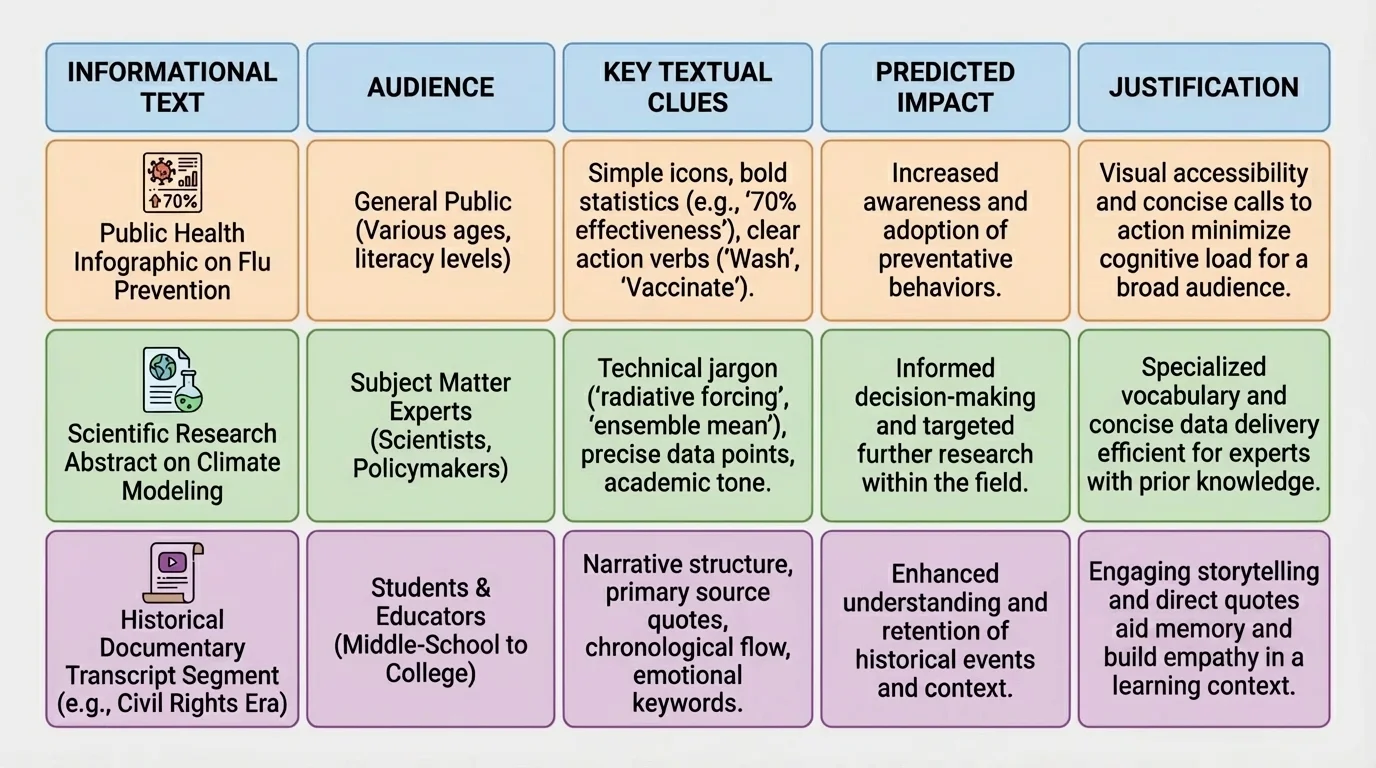

Side-by-side comparison makes differences in impact easier to see, and [Figure 4] organizes several examples by audience, textual clues, and likely effect. Looking across cases helps reveal patterns instead of treating every text as unique.

Consider a public health article titled "Why Flu Vaccination Rates Are Falling Among Teens." If the article uses national data, interviews with pediatricians, and a calm explanatory tone, it will likely inform parents and school officials while also increasing concern. If it includes practical solutions, such as school clinics and myth correction, it may also encourage action.

Now consider an investigative news report on unsafe conditions in a local warehouse. If the report includes employee testimony, internal documents, and evidence of ignored complaints, its impact on the public may be anger and demand for accountability. On workers, it may create recognition and solidarity. On company leaders, it may create pressure, defensiveness, or both.

A school policy memo offers a different case. Suppose a principal sends a memo announcing a stricter phone policy during class. The memo explains the reasons, cites classroom distraction data, and outlines consequences. The likely impact on teachers may be relief or support because it promises better focus. Students, however, may respond with resistance if they see the policy as restrictive. This does not mean the memo failed; it means different audiences can experience different impacts.

We can see from [Figure 4] that strong predictions compare audience needs with textual choices. The same method works whether the text is a government notice, a science article, a nonprofit report, or a newspaper investigation.

| Text Type | Likely Audience | Key Clues | Predicted Impact |
|---|---|---|---|
| Public health article | Parents, students, school leaders | Statistics, expert quotes, practical advice | Concern, increased understanding, possible action |
| Investigative news report | General public, officials, workers | Documents, testimony, evidence of harm | Anger, scrutiny, pressure for change |
| Policy memo | Students, staff, families | Rules, reasons, consequences | Compliance, resistance, or support depending on role |
| Environmental explainer | Residents, activists, policymakers | Local examples, maps, scientific findings | Awareness, urgency, support for action |

Table 1. Comparison of informational text types, likely audiences, textual clues, and predicted impact.

A weak justification sounds like personal preference: "I think people will like this article because it is interesting." That statement is vague, unsupported, and imprecise. It tells us almost nothing about the text or the audience.

A strong justification identifies a specific audience and a specific effect, then supports both with textual evidence. For example: "The article will likely persuade undecided voters to support the bond measure because it emphasizes overcrowded classrooms, includes local budget figures, and presents testimonials from parents and teachers." That statement works because it names the audience, names the effect, and points to specific details in the text.

Strong justification also avoids overclaiming. Instead of saying "everyone," say "many readers," "the intended audience," or "parents concerned about safety." Instead of saying a text "proves" a reaction, say it "is likely to create," "may increase," or "is likely to encourage." This language is careful and realistic.

"Good readers do not just ask what a text says. They ask what it is likely to do."

That distinction matters in advanced reading. You are evaluating probable influence, not guaranteeing identical reactions from all readers.

Some texts produce mixed effects. A report on artificial intelligence may excite investors, worry workers, and interest students. A text can also fail to persuade its intended audience if readers distrust the source or reject the assumptions behind the argument.

Some audiences are skeptical before they even begin reading. In those cases, strong evidence may reduce resistance, but it may not eliminate it. This is why predicting impact requires nuance. You are not just identifying the strongest possible effect; you are identifying the most defensible prediction.

Informational texts can also have unintended consequences. A report meant to reassure the public can accidentally increase anxiety if it repeats alarming facts without enough context. A campaign designed to motivate action can create fatigue if readers feel overwhelmed rather than empowered.

Multiple audiences mean multiple valid predictions. A single text may be welcomed by one audience, resisted by another, and misunderstood by a third. High-level analysis acknowledges these possibilities and explains them with evidence rather than forcing a single oversimplified conclusion.

That complexity is not a problem. It is a sign that you are reading with maturity and precision.

When you state your prediction, be direct. Name the audience, identify the likely effect, and explain why. A clear response might sound like this: "This article will likely increase support among suburban commuters for expanded public transit because it uses cost comparisons, traffic data, and testimonials from local workers to present the issue as both practical and urgent."

That sentence succeeds because it answers three questions: Who is affected? How are they affected? Why is that effect likely? If one of those parts is missing, the prediction becomes weaker.

The highest-quality analysis remains grounded in the text while also recognizing audience complexity. It does not confuse impact with intention, and it does not confuse personal reaction with likely audience response. Instead, it treats reading as interpretation supported by evidence.