A weather app says there is a \(40\%\) chance of rain tomorrow. A friend says there is a \(50\%\) chance they will forget their umbrella. What is the chance that both happen? Many people would multiply and say \(0.4 \cdot 0.5 = 0.2\), or \(20\%\). But that answer is only correct if the two events are independent. In probability, "independent" does not just mean "seems unrelated." It has a precise mathematical meaning, and that meaning lets us decide whether multiplying probabilities makes sense.

That idea matters far beyond classroom problems. It appears in genetics, medical studies, quality control in factories, sports statistics, and survey analysis. Whenever we ask whether one event changes the likelihood of another, we are asking about independence. Sometimes the answer is yes, sometimes no, and the difference changes how probabilities are calculated.

Suppose a basketball player makes \(80\%\) of free throws. If we assume one shot does not affect the next, then the probability of making two in a row is \(0.8 \cdot 0.8 = 0.64\). But if the player is tired, injured, or under pressure after missing the first shot, the second shot may no longer have the same probability. Then the events are not independent, and simple multiplication may give the wrong result.

Independence is about influence. If knowing event \(A\) happened does not change the probability of event \(B\), then \(A\) and \(B\) are independent. If the probability changes, they are dependent. This is why independence is closely connected to conditional probability, which measures the probability of one event given that another has already occurred.

Recall: An event is a set of outcomes from a probability experiment. The notation \(A \cap B\) means "both \(A\) and \(B\) occur," and \(P(A \cap B)\) is the probability of that overlap.

Before studying independence carefully, it helps to remember that probabilities are numbers between \(0\) and \(1\), and that the probability of "both" usually depends on how the events are related. The whole question is whether the overlap behaves like a product.

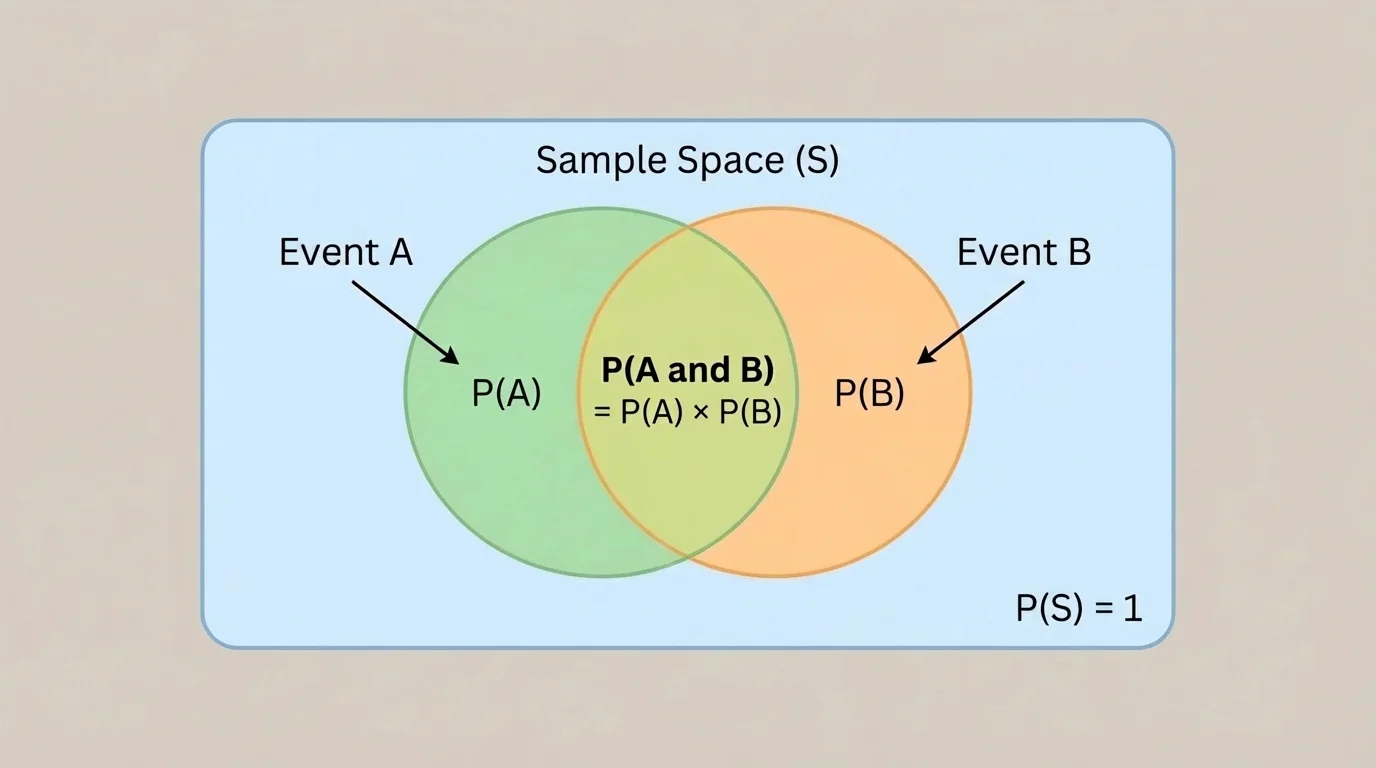

In a probability diagram, the overlap of two events, as shown in [Figure 1], represents the outcomes that belong to both events at the same time. That overlap is written as \(A \cap B\). Two events are called independent events if the probability of both occurring equals the product of their individual probabilities.

This gives the main test for independence:

Independent events are two events \(A\) and \(B\) such that

\[P(A \cap B) = P(A)P(B)\]

If this equation is true, the events are independent. If it is not true, the events are dependent.

This formula is sometimes called the product rule for independent events. It is not a rule to use automatically for every pair of events. It is a characterization: it tells you when two events are independent.

Notice what the formula says. If event \(A\) happens with probability \(P(A)\), and event \(B\) happens with probability \(P(B)\), then for independent events the chance of both is exactly what you get by multiplying those two probabilities. If the actual overlap is larger than the product, the events tend to occur together more than independence would predict. If the actual overlap is smaller, the occurrence of one makes the other less likely.

For example, if \(P(A) = 0.3\) and \(P(B) = 0.5\), then independence would require

\[P(A \cap B) = 0.3 \cdot 0.5 = 0.15\]

If the actual probability of both is \(0.15\), the events are independent. If the actual probability is \(0.10\) or \(0.20\), they are not.
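The comparison above is mechanical enough to sketch in code. The following is a minimal Python sketch using exact fractions; the function name `is_independent` is an illustrative choice, not notation from the text.

```python
from fractions import Fraction

def is_independent(p_a, p_b, p_both):
    """Return True when P(A and B) equals P(A) * P(B) exactly."""
    return p_both == p_a * p_b

p_a = Fraction(3, 10)   # P(A) = 0.3
p_b = Fraction(1, 2)    # P(B) = 0.5

print(p_a * p_b)                                      # 3/20, i.e. 0.15
print(is_independent(p_a, p_b, Fraction(15, 100)))    # True
print(is_independent(p_a, p_b, Fraction(10, 100)))    # False
print(is_independent(p_a, p_b, Fraction(20, 100)))    # False
```

Exact fractions are used here so that equality means true equality rather than agreement up to floating-point error.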

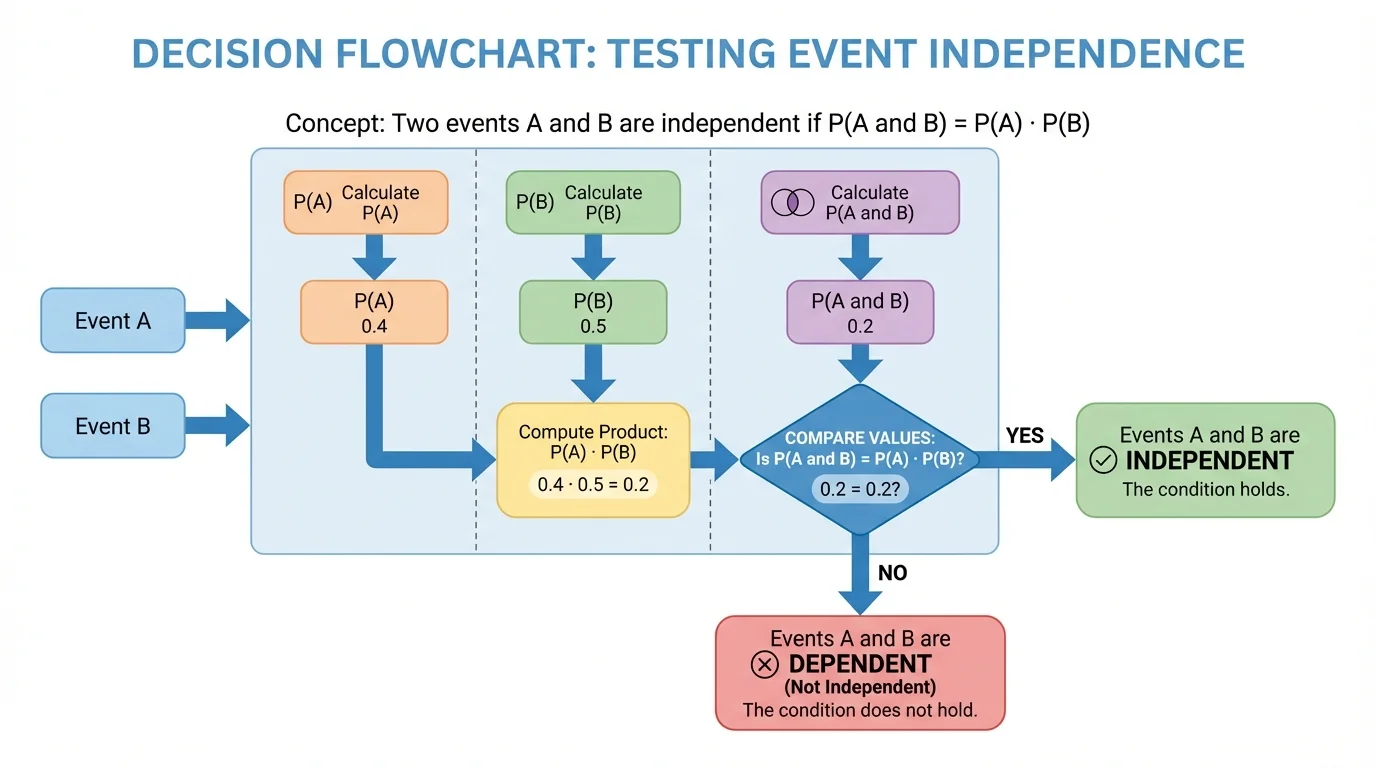

When you are given information about two events, the process in [Figure 2] helps organize the work. The test is simple: find \(P(A \cap B)\), compute \(P(A)P(B)\), and compare the two values. If they are equal, the events are independent. If they are not equal, the events are dependent.

There are three common situations:

First, you may be given \(P(A)\), \(P(B)\), and \(P(A \cap B)\) directly. Then you just compare the numbers.

Second, you may be given a table of data or counts. Then you convert counts into probabilities by dividing by the total number of outcomes or observations.

Third, you may be given a real situation, like drawing cards or flipping a coin and rolling a die. Then you reason from the setup to determine the probabilities.

If the two values come out nearly but not exactly equal, check whether rounding is the cause. For example, \(0.333\) and \(0.3333\) may both be rounded forms of \(\dfrac{1}{3}\), so they differ only because of decimal approximation. In exact work, fractions make the comparison clearer.
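A small sketch of the rounding issue, under the assumption that \(P(A) = \dfrac{1}{3}\), \(P(B) = \dfrac{1}{2}\), and \(P(A \cap B) = \dfrac{1}{6}\) (hypothetical values chosen for illustration). With exact fractions the test passes; with three-decimal roundings a strict comparison fails, and only a tolerance-based comparison recovers the right conclusion.

```python
from fractions import Fraction
import math

# Hypothetical exact values (an assumption for this illustration).
p_a, p_b, p_both = Fraction(1, 3), Fraction(1, 2), Fraction(1, 6)
exact_match = (p_both == p_a * p_b)

# The same values rounded to three decimal places.
r_a, r_b, r_both = 0.333, 0.5, 0.167
rounded_match = (r_both == r_a * r_b)   # compares 0.167 with about 0.1665
close_enough = math.isclose(r_both, r_a * r_b, abs_tol=0.001)

print(exact_match)    # True
print(rounded_match)  # False
print(close_enough)   # True
```

The tolerance `0.001` matches the three-decimal rounding used here; a different rounding would call for a different tolerance.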

Another useful viewpoint is to compare expected overlap under independence with actual overlap. If \(30\%\) of students play a sport and \(40\%\) are in the school band, then independence predicts that \(0.3 \cdot 0.4 = 0.12\), so \(12\%\) should do both. If the data show \(12\%\), that supports independence. If the data show \(5\%\) or \(25\%\), the activities are not independent.

The idea of independence becomes even clearer through conditional probability. The notation \(P(B\mid A)\) means the probability of \(B\) given that \(A\) has occurred. If knowing \(A\) happened does not change the probability of \(B\), then \(B\) should have the same probability as before. That means

\[P(B\mid A) = P(B)\]

for independent events, as long as \(P(A) \neq 0\).

How the two definitions connect

Conditional probability is defined by \(P(B\mid A) = \dfrac{P(A \cap B)}{P(A)}\), provided \(P(A) \neq 0\). If \(A\) and \(B\) are independent, then \(P(A \cap B) = P(A)P(B)\). Substituting gives \(P(B\mid A) = \dfrac{P(A)P(B)}{P(A)} = P(B)\). So the multiplication rule and the "no change in probability" idea say the same thing.

This connection is powerful because sometimes it is easier to test independence using conditional probability. If \(P(B\mid A)\) equals \(P(B)\), then \(A\) does not affect \(B\). Likewise, if \(P(A\mid B) = P(A)\), then \(B\) does not affect \(A\). Independence works both ways.
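The reverse direction follows from the same substitution. As a short derivation, assuming \(P(B) \neq 0\) and that \(A\) and \(B\) are independent:

\[P(A\mid B) = \dfrac{P(A \cap B)}{P(B)} = \dfrac{P(A)P(B)}{P(B)} = P(A)\]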

Using [Figure 1] again, you can think of conditional probability as zooming in on event \(A\) and asking what fraction of \(A\) also lies in \(B\). For independent events, that fraction stays equal to the original probability of \(B\).
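The "zooming in" idea can be sketched with sets of equally likely outcomes. The example below is hypothetical and not taken from the text: one roll of a fair die, with \(A\) = "the roll is even" and \(B\) = "the roll is at most \(4\)." The helper names `prob` and `conditional` are illustrative.

```python
from fractions import Fraction

def prob(event, sample_space):
    """Probability of an event when all outcomes are equally likely."""
    return Fraction(len(event & sample_space), len(sample_space))

def conditional(b, a):
    """P(B | A): the fraction of A's outcomes that also lie in B."""
    if not a:
        raise ValueError("P(A) must be nonzero")
    return Fraction(len(a & b), len(a))

# Hypothetical example: one roll of a fair six-sided die.
omega = {1, 2, 3, 4, 5, 6}
A = {2, 4, 6}       # the roll is even
B = {1, 2, 3, 4}    # the roll is at most 4

print(conditional(B, A), prob(B, omega))  # 2/3 2/3
print(conditional(A, B), prob(A, omega))  # 1/2 1/2
```

In both directions the conditional probability equals the unconditional one, so these two events are independent.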

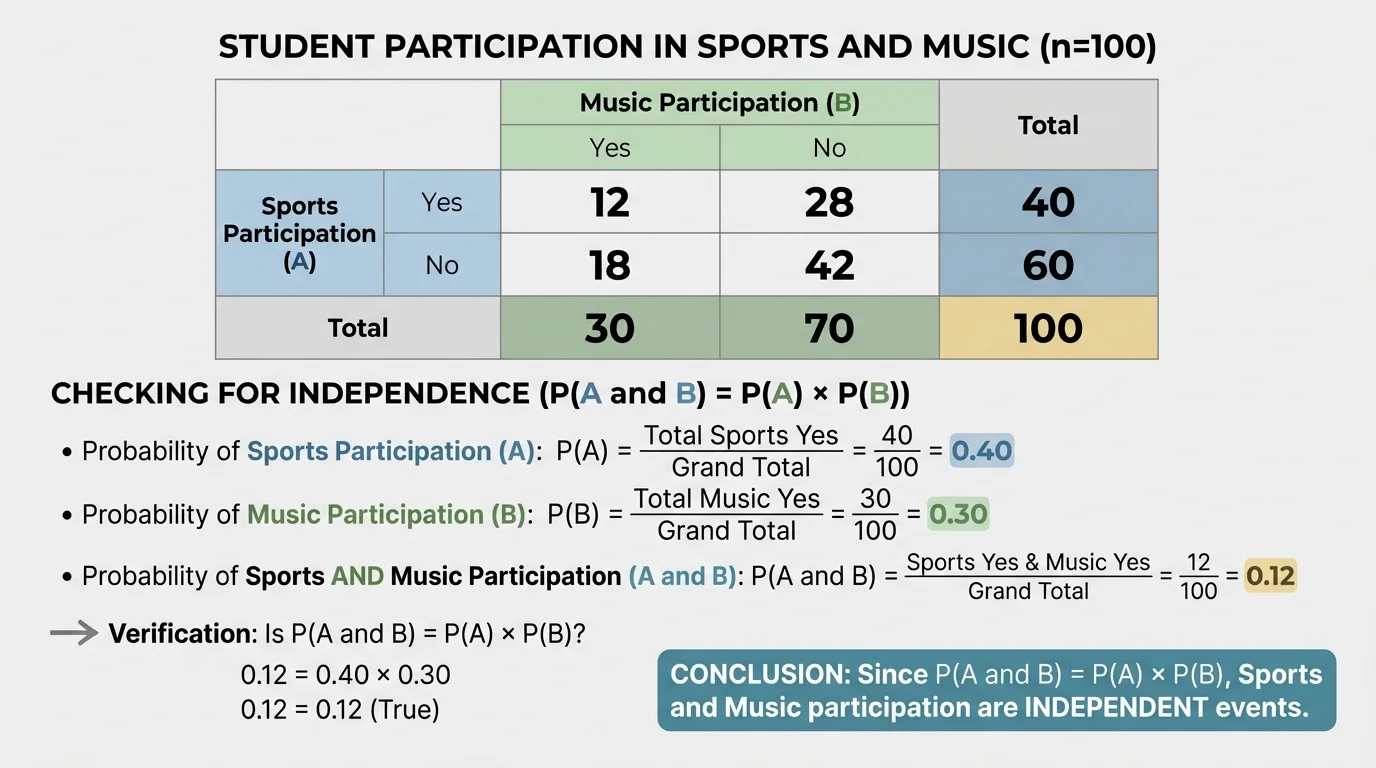

Examples are where the definition becomes practical. The survey situation in [Figure 3] is especially useful because many real decisions are based on data tables rather than theoretical experiments.

Worked Example 1: Coin toss and even number on a die

Let \(A\) be the event "a coin lands heads" and \(B\) be the event "a standard die shows an even number." Determine whether \(A\) and \(B\) are independent.

Step 1: Find the individual probabilities.

For a fair coin, \(P(A) = \dfrac{1}{2}\). For a fair die, the even outcomes are \(2, 4, 6\), so \(P(B) = \dfrac{3}{6} = \dfrac{1}{2}\).

Step 2: Find the probability of both events.

The coin toss and die roll do not affect each other, so the outcomes can be listed as ordered pairs. The favorable outcomes for both are \((H,2), (H,4), (H,6)\), which is \(3\) outcomes out of \(12\).

So \(P(A \cap B) = \dfrac{3}{12} = \dfrac{1}{4}\).

Step 3: Compare with the product.

\(P(A)P(B) = \dfrac{1}{2} \cdot \dfrac{1}{2} = \dfrac{1}{4}\).

Since \(P(A \cap B) = P(A)P(B)\), the events are independent.

This is a classic example of independence because the two experiments are physically separate. One result gives no information about the other.
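The count of \(3\) favorable outcomes out of \(12\) can be checked by listing the sample space. This is a minimal sketch that assumes a fair coin and a fair die, so that all \(12\) ordered pairs are equally likely; the helper `prob` is an illustrative name.

```python
from fractions import Fraction
from itertools import product

coin = ["H", "T"]
die = [1, 2, 3, 4, 5, 6]
outcomes = list(product(coin, die))  # 12 equally likely ordered pairs

def prob(predicate):
    """Fraction of outcomes for which the predicate is true."""
    favorable = [o for o in outcomes if predicate(o)]
    return Fraction(len(favorable), len(outcomes))

p_a = prob(lambda o: o[0] == "H")                         # coin lands heads
p_b = prob(lambda o: o[1] % 2 == 0)                       # die shows an even number
p_both = prob(lambda o: o[0] == "H" and o[1] % 2 == 0)    # both at once

print(p_a, p_b, p_both)       # 1/2 1/2 1/4
print(p_both == p_a * p_b)    # True
```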

Worked Example 2: Drawing a heart and a face card in a single card draw

From a standard deck of \(52\) cards, let \(A\) be "the card is a heart" and \(B\) be "the card is a face card." Determine whether the events are independent.

Step 1: Find \(P(A)\) and \(P(B)\).

There are \(13\) hearts, so \(P(A) = \dfrac{13}{52} = \dfrac{1}{4}\).

There are \(12\) face cards, so \(P(B) = \dfrac{12}{52} = \dfrac{3}{13}\).

Step 2: Find \(P(A \cap B)\).

The heart face cards are jack of hearts, queen of hearts, and king of hearts, so there are \(3\) such cards.

Thus \(P(A \cap B) = \dfrac{3}{52}\).

Step 3: Compare with the product.

\(P(A)P(B) = \dfrac{1}{4} \cdot \dfrac{3}{13} = \dfrac{3}{52}\).

Since the two values are equal, the events are independent.

This result surprises many students because the events overlap. Overlap does not mean dependence. Independent events can overlap, and in fact they must whenever both probabilities are nonzero, because then \(P(A \cap B) = P(A)P(B) > 0\).
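The card counts in this example can be verified by building the deck explicitly. The sketch below assumes a standard \(52\)-card deck with each card equally likely; the rank and suit labels are arbitrary illustrative strings.

```python
from fractions import Fraction

ranks = ["A"] + [str(n) for n in range(2, 11)] + ["J", "Q", "K"]
suits = ["hearts", "diamonds", "clubs", "spades"]
deck = {(rank, suit) for rank in ranks for suit in suits}

hearts = {card for card in deck if card[1] == "hearts"}
faces = {card for card in deck if card[0] in {"J", "Q", "K"}}

def prob(event):
    """Probability of drawing a card in the event from the full deck."""
    return Fraction(len(event), len(deck))

print(prob(hearts), prob(faces), prob(hearts & faces))     # 1/4 3/13 3/52
print(prob(hearts & faces) == prob(hearts) * prob(faces))  # True
```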

Worked Example 3: Sports and music survey

A survey of \(200\) students gives the following data: \(80\) play a sport, \(100\) participate in music, and \(50\) do both. Are the events independent?

Step 1: Convert counts to probabilities.

Let \(A\) be "student plays a sport" and \(B\) be "student participates in music."

Then \(P(A) = \dfrac{80}{200} = 0.4\), \(P(B) = \dfrac{100}{200} = 0.5\), and \(P(A \cap B) = \dfrac{50}{200} = 0.25\).

Step 2: Compute the product.

\(P(A)P(B) = 0.4 \cdot 0.5 = 0.20\).

Step 3: Compare.

The actual overlap is \(0.25\), but the product is \(0.20\).

Because \(0.25 \neq 0.20\), the events are not independent. Students in this survey do both activities more often than independence would predict.

Data problems like this are common in statistics. The point is not just to compute; it is to interpret what the difference means. Here, sports participation and music participation appear to be related.
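One way to make the interpretation concrete is to convert the product back into a count: how many students would be expected to do both if the events were independent? The sketch below uses the survey numbers from this example; the variable names are illustrative.

```python
from fractions import Fraction

total, sport, music, both = 200, 80, 100, 50

p_a = Fraction(sport, total)      # P(A) = 2/5
p_b = Fraction(music, total)      # P(B) = 1/2
p_both = Fraction(both, total)    # P(A and B) = 1/4

# Count of students doing both that independence would predict.
expected_both = p_a * p_b * total

print(expected_both)              # 40
print(both - expected_both)       # 10
print(p_both == p_a * p_b)        # False
```

The survey shows \(10\) more students doing both activities than the \(40\) that independence would predict, which is the same gap as \(0.25\) versus \(0.20\) expressed in counts.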

Worked Example 4: A counterexample using conditional probability

Let \(A\) be "a randomly chosen integer from \(1\) to \(10\) is even," and let \(B\) be "the integer is greater than \(6\)." Are \(A\) and \(B\) independent?

Step 1: Find each probability.

The even numbers are \(2,4,6,8,10\), so \(P(A) = \dfrac{5}{10} = \dfrac{1}{2}\).

The numbers greater than \(6\) are \(7,8,9,10\), so \(P(B) = \dfrac{4}{10} = \dfrac{2}{5}\).

Step 2: Find the overlap.

The numbers that are both even and greater than \(6\) are \(8\) and \(10\), so \(P(A \cap B) = \dfrac{2}{10} = \dfrac{1}{5}\).

Step 3: Compare with the product.

\(P(A)P(B) = \dfrac{1}{2} \cdot \dfrac{2}{5} = \dfrac{1}{5}\).

Since \(P(A \cap B) = P(A)P(B)\), the events are independent.

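Again, the events share outcomes, yet they are independent. Conditional probability confirms this: of the \(5\) even numbers, \(2\) are greater than \(6\), so \(P(B\mid A) = \dfrac{2}{5} = P(B)\). This reinforces the idea that overlap alone does not determine dependence.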

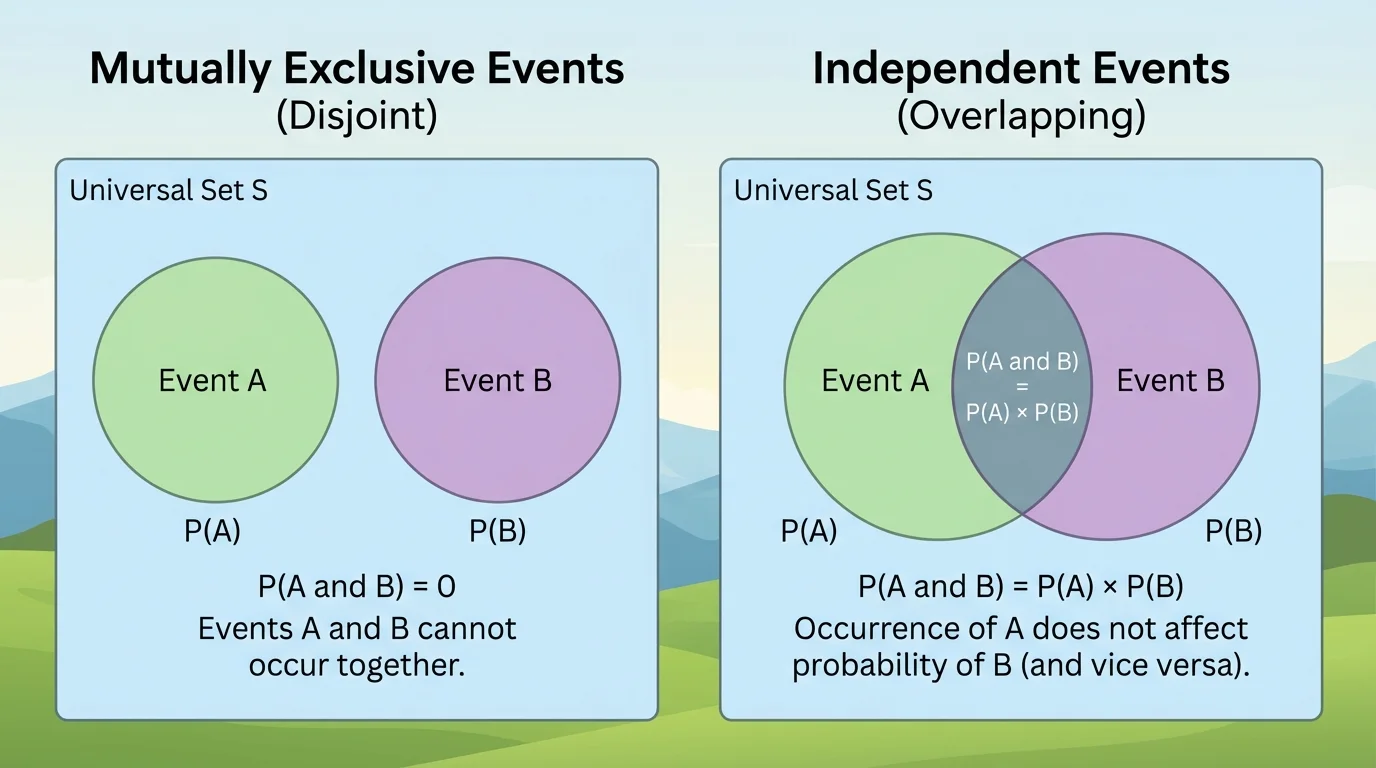

One of the most important comparisons in probability, illustrated in [Figure 4], is that mutually exclusive events are not the same as independent events. Students often confuse them because both describe relationships between events, but they mean almost opposite things.

Mutually exclusive events cannot happen together, so \(P(A \cap B) = 0\). Independent events satisfy \(P(A \cap B) = P(A)P(B)\). If both events have positive probability and are mutually exclusive, then

\[0 = P(A \cap B) \neq P(A)P(B)\]

because \(P(A)P(B) > 0\). So mutually exclusive events with nonzero probabilities are dependent, not independent.

For example, when rolling one die, let \(A\) be "the result is \(2\)" and \(B\) be "the result is \(5\)." These events are mutually exclusive. Since \(P(A \cap B) = 0\), but \(P(A)P(B) = \dfrac{1}{6} \cdot \dfrac{1}{6} = \dfrac{1}{36}\), they are not independent.
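The two properties can be checked side by side. In this sketch, `classify` is a hypothetical helper that takes events as sets of equally likely outcomes. The second pair of events ("even" and "at most \(2\)") is an extra illustration that does not appear in the text.

```python
from fractions import Fraction

def classify(a, b, omega):
    """Report whether events a and b (sets of equally likely outcomes)
    are mutually exclusive and whether they are independent."""
    def p(event):
        return Fraction(len(event), len(omega))
    return {
        "mutually_exclusive": not (a & b),
        "independent": p(a & b) == p(a) * p(b),
    }

die = {1, 2, 3, 4, 5, 6}

print(classify({2}, {5}, die))
# {'mutually_exclusive': True, 'independent': False}
print(classify({2, 4, 6}, {1, 2}, die))
# {'mutually_exclusive': False, 'independent': True}
```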

Another subtle case involves drawing without replacement. If you draw two cards from a deck and do not replace the first one, then the second draw depends on the first. For instance, let \(A\) be "first card is an ace" and \(B\) be "second card is an ace." With no information about the first card, the second card is equally likely to be any of the \(52\) cards, so \(P(B) = \dfrac{4}{52} = \dfrac{1}{13}\). But if \(A\) occurred, only \(3\) aces remain among \(51\) cards, so \(P(B\mid A) = \dfrac{3}{51} = \dfrac{1}{17}\). Since \(P(B\mid A) \neq P(B)\), the events are dependent.

By contrast, if the first card is replaced before drawing again, the two draws become independent because the deck is restored to its original state.
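Both cases can be checked by enumerating every ordered pair of draws. This is a sketch under the assumption that every ordered pair is equally likely; the function name `second_ace_probs` and the deck encoding (rank \(1\) for an ace) are illustrative choices.

```python
from fractions import Fraction
from itertools import permutations, product

# 52 cards as (rank, suit) pairs; rank 1 stands for an ace.
deck = [(rank, suit) for rank in range(1, 14) for suit in "SHDC"]

def second_ace_probs(pairs):
    """Return (P(B), P(B | A)) for A = first card is an ace,
    B = second card is an ace, over equally likely ordered pairs."""
    pairs = list(pairs)
    first_ace = [p for p in pairs if p[0][0] == 1]
    second_ace = [p for p in pairs if p[1][0] == 1]
    both_aces = [p for p in first_ace if p[1][0] == 1]
    p_b = Fraction(len(second_ace), len(pairs))
    p_b_given_a = Fraction(len(both_aces), len(first_ace))
    return p_b, p_b_given_a

without = second_ace_probs(permutations(deck, 2))      # no replacement
with_repl = second_ace_probs(product(deck, repeat=2))  # with replacement

print(without)    # (Fraction(1, 13), Fraction(1, 17))
print(with_repl)  # (Fraction(1, 13), Fraction(1, 13))
```

Without replacement the conditional probability drops to \(\dfrac{1}{17}\); with replacement it stays at \(\dfrac{1}{13}\), matching \(P(B)\).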

In many real data sets, two variables can look related just because of coincidence in a small sample. Larger samples give more reliable estimates of whether independence is reasonable.

A final subtle point: if \(P(A) = 0\), then \(P(A \cap B) = 0 = P(A)P(B)\) for any event \(B\). So an event with probability \(0\) satisfies the multiplication equation automatically, whatever the other event is. In school mathematics, most examples focus on events with nonzero probability so the interpretation stays meaningful.

The contrast helps keep the ideas straight: no overlap means mutual exclusivity, while "overlap equals product" means independence.

Independence is not just a textbook label. In manufacturing, a company may ask whether a defect in one part of a product is independent of a defect in another part. If not, there may be a common cause in the production process. In medicine, researchers ask whether having one symptom is independent of having another, or whether a positive test result is independent of actual disease status. These questions affect how data are interpreted and how decisions are made.

In sports analytics, statisticians may study whether a player's success on one attempt affects the next attempt. If it does not, models that assume independence may be appropriate. If it does, then momentum, fatigue, or strategy may matter. In polling and surveys, independence helps determine whether two characteristics of respondents are related. The sports-and-music example in [Figure 3] is the same kind of reasoning used in real survey analysis.

| Situation | Question about independence | Why it matters |
|---|---|---|
| Medical testing | Is a symptom independent of a disease? | Helps interpret risk and screening data. |
| Manufacturing | Are two types of defects independent? | Can reveal shared causes in production. |
| Surveys | Is one response category independent of another? | Shows whether characteristics are associated. |
| Games of chance | Does one trial affect the next? | Determines whether multiplication of probabilities is valid. |

Table 1. Examples of how independence is used to interpret data and probability situations.

When people say two things are "unrelated," probability gives a way to check that claim. We compare what actually happens together with what would happen together if the events had no influence on each other.

Whenever you see two events \(A\) and \(B\), train yourself to ask three questions. What is \(P(A)\)? What is \(P(B)\)? What is \(P(A \cap B)\)? Then test whether

\[P(A \cap B) = P(A)P(B)\]

If the equality holds, the events are independent. If it fails, they are dependent. If conditional probability is easier to compute, check whether \(P(B\mid A) = P(B)\) instead. These are two ways of expressing the same idea.

Probability often feels intuitive, but intuition can be misleading. The mathematical definition keeps the reasoning precise. Independent events are not simply events that "sound separate." They are events whose joint probability matches the product of their individual probabilities.