A post can go viral in minutes, but trust takes months or years to build. That is the strange reality of online life: digital tools let you create faster than ever, and they also let mistakes spread faster than ever. AI can help you brainstorm, edit, translate, summarize, or design. It can also produce fake quotes, biased answers, copied work, and realistic-looking content that misleads people. Digital ethics is about how you decide what is right, fair, and responsible when the technology makes almost anything possible.

If you use social media, messaging apps, video platforms, or AI tools, you already make ethical choices every day. You decide whether to repost a rumor, whether to use an AI-written caption without saying so, whether to upload a photo of someone else, whether to trust a screenshot, and whether to edit content in a way that changes the truth. These are not just internet issues. They affect friendships, jobs, college applications, mental health, safety, and your long-term reputation.

Doing this well does not mean being perfect. It means slowing down enough to ask better questions. Before you post, generate, edit, or share, ask: Is this accurate? Is it fair? Do I have permission? Am I hiding something important? Could this hurt someone? Those questions are the core of digital ethics.

Digital ethics is the practice of making responsible choices when using technology, especially when your actions affect other people.

Consent means getting clear permission before sharing, using, or altering someone else's personal content or information.

Transparency means being open about what you did, such as saying when AI helped create or edit something.

Another reason this matters is permanence. Even if a post is deleted, screenshots, downloads, and reposted copies can keep it alive. Your online actions contribute to your digital record over time. A thoughtful post can strengthen trust. A careless one can make people question your judgment.

Digital ethics becomes practical when you connect it to situations you actually face. If you ask AI to write a personal statement and submit it as fully your own work, that is an honesty issue. If you use AI to generate interview questions and then answer them in your own words, that can be a responsible use. If you edit a selfie to smooth lighting, that is one thing. If you edit a photo to create a false event or make someone look guilty, that is very different.

A few key ideas show up again and again. Your digital footprint is the trail of content, comments, likes, searches, and uploads connected to you. Your choices can also be shaped by algorithmic bias, which happens when a system produces unfair patterns because of the data it learned from or how it was designed. A person can also commit plagiarism by presenting someone else's words, ideas, art, or work as their own, even if an AI tool helped copy or remix it. And when false or misleading content spreads, it may count as misinformation, especially when people share it without checking it first.

One newer challenge is the rise of deepfake content: audio, video, or images that make it seem like someone said or did something they never did. Some deepfakes are obvious jokes, but others can damage reputations, influence public opinion, or scare people. That is why digital ethics is not just about following rules. It is about protecting truth, dignity, and trust in spaces where appearances can be manipulated.

Some AI-generated images include small mistakes such as unnatural hands, mismatched reflections, or impossible shadows. But many are now realistic enough that visual impressions alone are not a reliable way to determine whether something is true.

You do not need to become suspicious of everything online. You do need to become more deliberate. Ethical digital citizens understand that speed is not the same as wisdom, and convenience is not the same as integrity.

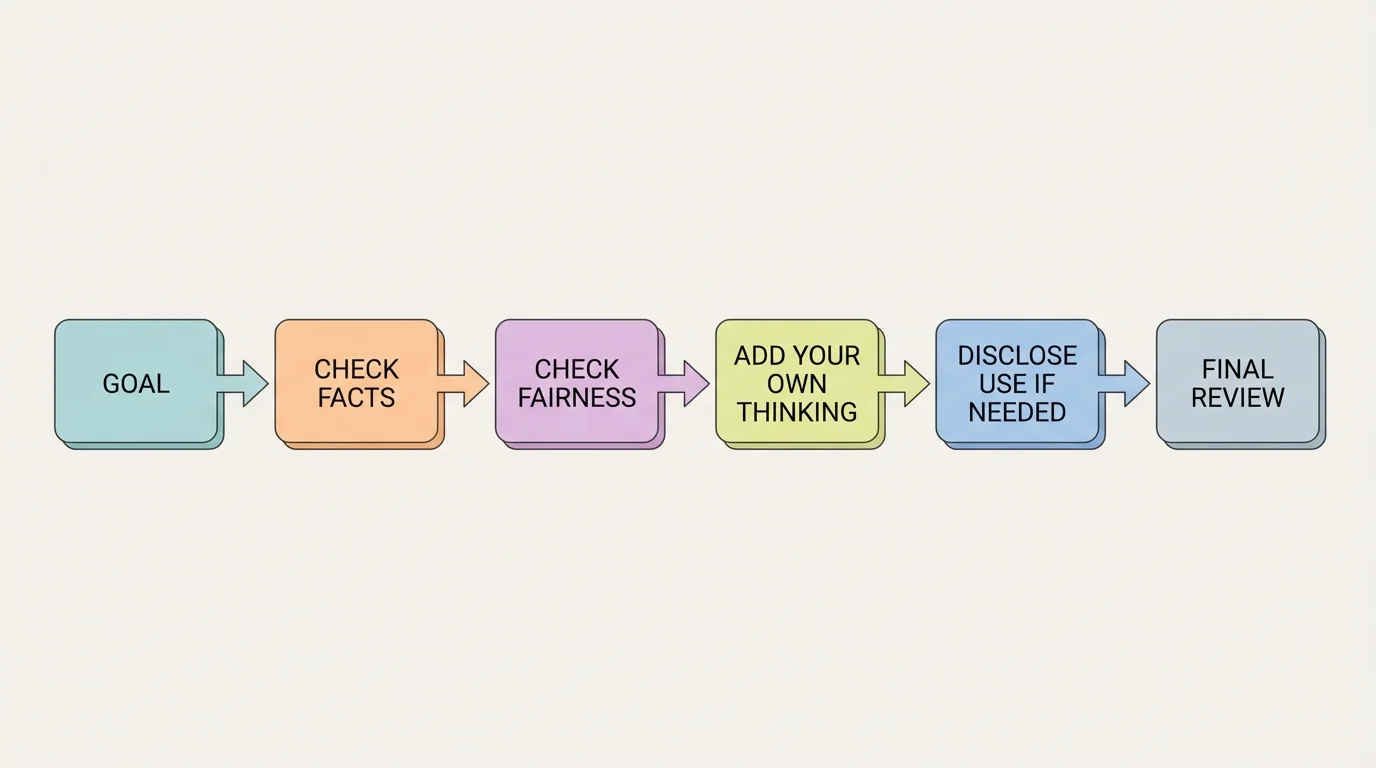

AI can be useful when you treat it like a tool, not a replacement for your judgment. A smart way to think about this, as shown in [Figure 1], is to run every AI use through a simple decision process: What is the goal, what are the risks, and what still needs a human review? AI is strongest at pattern-based help such as brainstorming, organizing, summarizing, drafting, and generating options. It is weaker at understanding your values, your lived experience, emotional nuance, and the real-world consequences of being wrong.

Responsible use starts with purpose. If you use AI to get ideas for a podcast outline, compare job descriptions, or practice interview responses, that can save time and build skills. But if you let AI make important decisions for you without checking them, you hand over responsibility while still keeping the consequences. If an AI tool gives unsafe advice, false legal information, or biased wording in a résumé, you are still the one who sends it.

Another issue is accuracy. AI systems can sound confident and still be wrong. This is sometimes called a hallucination: the system generates false or invented information that looks believable. If you ask for facts, statistics, quotes, sources, legal advice, health guidance, or historical details, you should verify them with reliable sources before using or sharing them.

Bias also matters. If an AI tool was trained on unfair, limited, or stereotyped data, it may produce answers that reflect those problems. For example, if you ask AI to describe a "professional leader" and it repeatedly leans toward one gender, race, accent, or background, that is not neutral. Ethical AI use includes noticing patterns, questioning them, and correcting them instead of repeating them.

Transparency is another key habit. If AI significantly helped create something, ask whether people deserve to know. In many cases, the honest answer is yes. If you post AI-generated art, say so. If AI helped draft a public statement, say so. If AI helped clean up grammar in an email, disclosure may matter less, because grammar support is different from generating the whole message. The bigger the AI contribution, the stronger the reason to disclose it.

Privacy matters too. Do not paste private conversations, personal data, passwords, medical details, family information, or someone else's unpublished work into an AI tool unless you clearly know how that platform stores and uses data and you have permission. Convenience is not worth exposing sensitive information.

Use AI as support, not as cover. Ethical AI use means the tool helps you think, but it does not hide your responsibility. If you would feel uncomfortable admitting exactly how you used AI, that is a sign to pause and reconsider.

A helpful personal rule is this: if the result affects someone's trust, safety, opportunity, or reputation, review it more carefully. That includes job applications, recommendation letters, activism posts, fundraising claims, and content about real people.

Creating online content is not just about making something look good. It is also about being honest about where it came from, who it affects, and what message it sends. This applies to videos, memes, music edits, fan accounts, blogs, podcasts, digital art, and short-form posts.

Start with originality. Inspiration is normal; copying is different. If you borrow someone's words, visual style, footage, music, or design idea in a major way, give credit. If you are unsure whether your use is okay, ask permission or use licensed, public-domain, or clearly allowed material. Ethical creators respect the work of others because creative labor has value, even when it is digital and easy to copy.

Editing also has ethical limits. Color correction, cropping, and cleaning background noise are often fine because they improve quality without changing the truth. But editing becomes deceptive when it changes meaning. Cutting out important context from a video clip, changing timestamps, replacing audio, removing people from a scene, or generating fake screenshots can make an audience believe something false.

Consent is especially important when content includes other people. Before posting someone's face, voice, room, personal story, or private message, ask whether they are okay with it. This is true even if your account is small. A person may not want their image used in a joke, their voice remixed, or their message shared beyond its original context.

Sometimes people defend harmful posts by saying, "It was just content." But content still affects real people. A joke account that posts classmates' photos without permission, a fan edit that sexualizes someone, or a "call-out" clip with missing context can lead to embarrassment, harassment, or lasting damage. Ethics asks you to think beyond clicks and ask who pays the cost.

Case study: AI-generated artwork for a fundraiser

You are making a poster for an online fundraiser and want it done quickly.

Step 1: Check the source of your visuals.

If you use AI-generated art, ask whether the platform allows commercial or public use and whether the style imitates a specific living artist too closely.

Step 2: Decide what your audience should know.

If the artwork is a major part of the campaign, disclose that AI was used so you are not implying a human artist created it from scratch.

Step 3: Protect honesty.

Do not use AI images that appear to show real people receiving aid unless those scenes are clearly labeled as illustrative rather than documentary.

Step 4: Review for harm.

Check whether the image uses stereotypes, exploits suffering, or creates a misleading emotional story just to increase donations.

An ethical result is a visually strong poster that is honest about how it was made and truthful about the cause it supports.

One strong habit is to label your work accurately. "AI-assisted," "edited for clarity," or "illustrative concept art" are simple phrases that can prevent misunderstanding. Clear labels protect your credibility.
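The labeling habit can be sketched as a small helper. This is only an illustration: the function name `suggest_labels` and the three-level `ai_role` scale ("none", "minor", "major") are assumptions made for this example, not an established standard. It reflects the idea that minor help such as grammar cleanup matters less for disclosure than a major AI contribution.

```python
def suggest_labels(ai_role: str, edited_for_clarity: bool = False,
                   illustrative: bool = False) -> list[str]:
    """Suggest plain-language labels for a piece of content.

    ai_role is a hypothetical three-level scale:
      "none"  - no AI involvement
      "minor" - small help such as grammar cleanup
      "major" - AI generated or reshaped a significant part of the work
    """
    if ai_role not in {"none", "minor", "major"}:
        raise ValueError(f"unknown ai_role: {ai_role!r}")

    labels = []
    # The bigger the AI contribution, the stronger the reason to disclose it.
    if ai_role == "major":
        labels.append("AI-assisted")
    if edited_for_clarity:
        labels.append("edited for clarity")
    if illustrative:
        labels.append("illustrative concept art")
    return labels


if __name__ == "__main__":
    # A fundraiser poster built around an AI-generated illustrative scene.
    print(suggest_labels("major", illustrative=True))
```

A helper like this does not decide anything for you. It only turns your honest answers about how the work was made into wording your audience can see.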

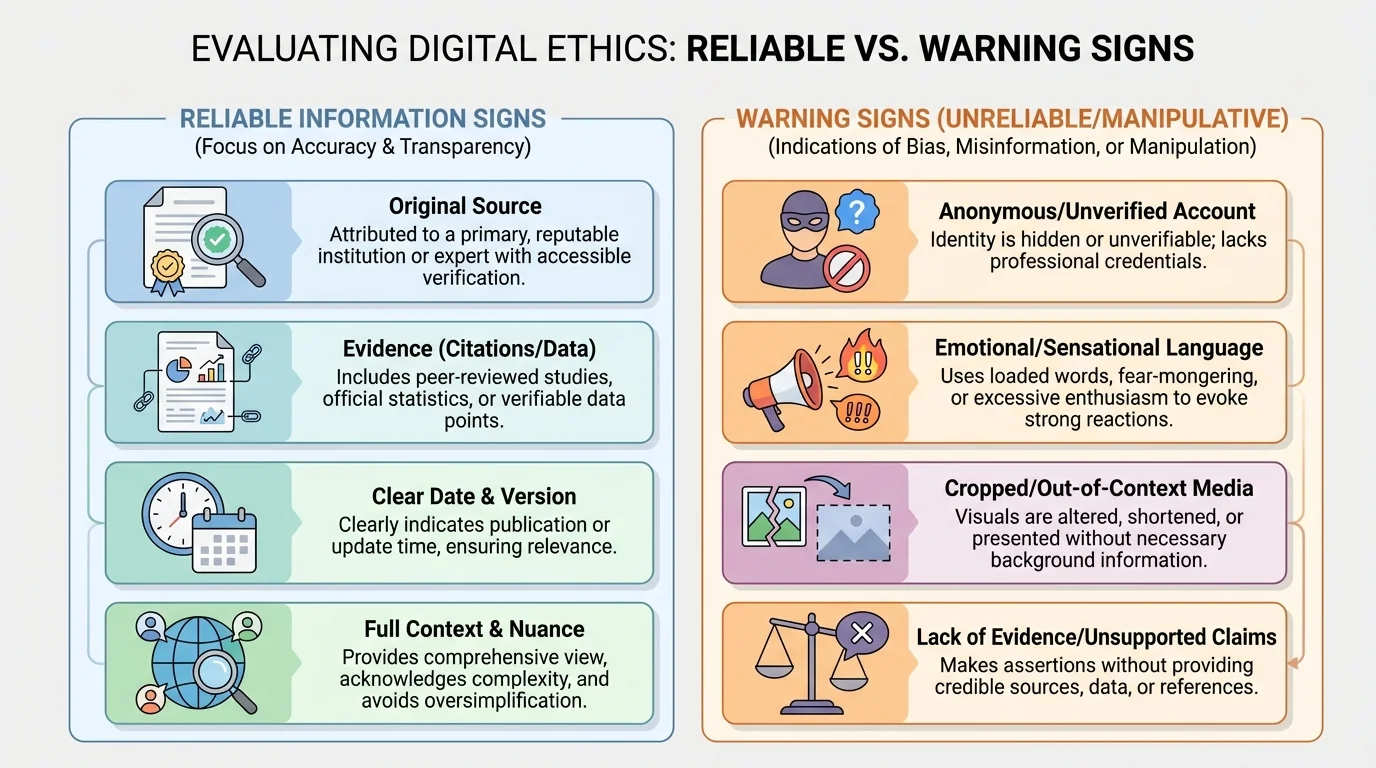

Before you repost a claim, use a credibility check, as shown in [Figure 2], instead of asking only whether the post matches your opinion. Ethical sharing starts with verification. Who created this? What is the original source? Is there evidence? Is the quote complete? Is the image old, cropped, or taken from a different event? Could the account be fake, anonymous, or trying to provoke outrage for engagement?

Many harmful posts spread because they trigger strong emotion fast. Fear, anger, and disgust make people click before they think. That does not make those people evil; it makes them human. But being ethical online means recognizing when your emotions are being used to rush you.

Context matters almost as much as truth. A real image can still mislead if it is attached to the wrong date or story. A real statistic can still mislead if key details are missing. A real quote can still mislead if part of it was cut out. Good digital citizens do not only ask, "Is this fake?" They also ask, "Is this presented fairly?"

Privacy is part of ethical sharing too. Even if information is technically available online, that does not automatically mean you should spread it. Sharing someone's address, school schedule, personal conflict, health issue, or family crisis can create real danger. The same goes for screenshots from private chats. If a post exposes a person rather than informing the public responsibly, stop and think.

It is also possible to be accurate and still be harmful. For example, sharing a video of someone at their lowest moment may be "real," but if the person is vulnerable and the post invites ridicule, your choice still has ethical weight. Truth matters, but so do compassion and proportionality.

| Question | Ethical sharing choice | Risky sharing choice |
|---|---|---|
| Do I know the original source? | Track it back before reposting. | Share a screenshot with no source. |
| Could this hurt someone? | Remove names, faces, or identifying details. | Post full details because it is "public anyway." |
| Is the claim verified? | Check multiple reliable sources. | Trust one viral account. |
| Is context missing? | Read beyond the headline or clip. | React to the most dramatic version. |
| Did AI help create this? | Disclose that clearly when relevant. | Let people assume it is fully human-made or real. |

Table 1. Comparison of ethical and risky choices when deciding whether to share digital information.

When false information spreads during emergencies, the harm can become immediate. A fake evacuation route, altered weather warning, or false report about a protest can cause panic. In high-stakes situations, share only from official or strongly verified sources.

"Not everything that can be shared should be shared."

That principle applies even when a post would bring attention to your account. Reach is not the same as responsibility.

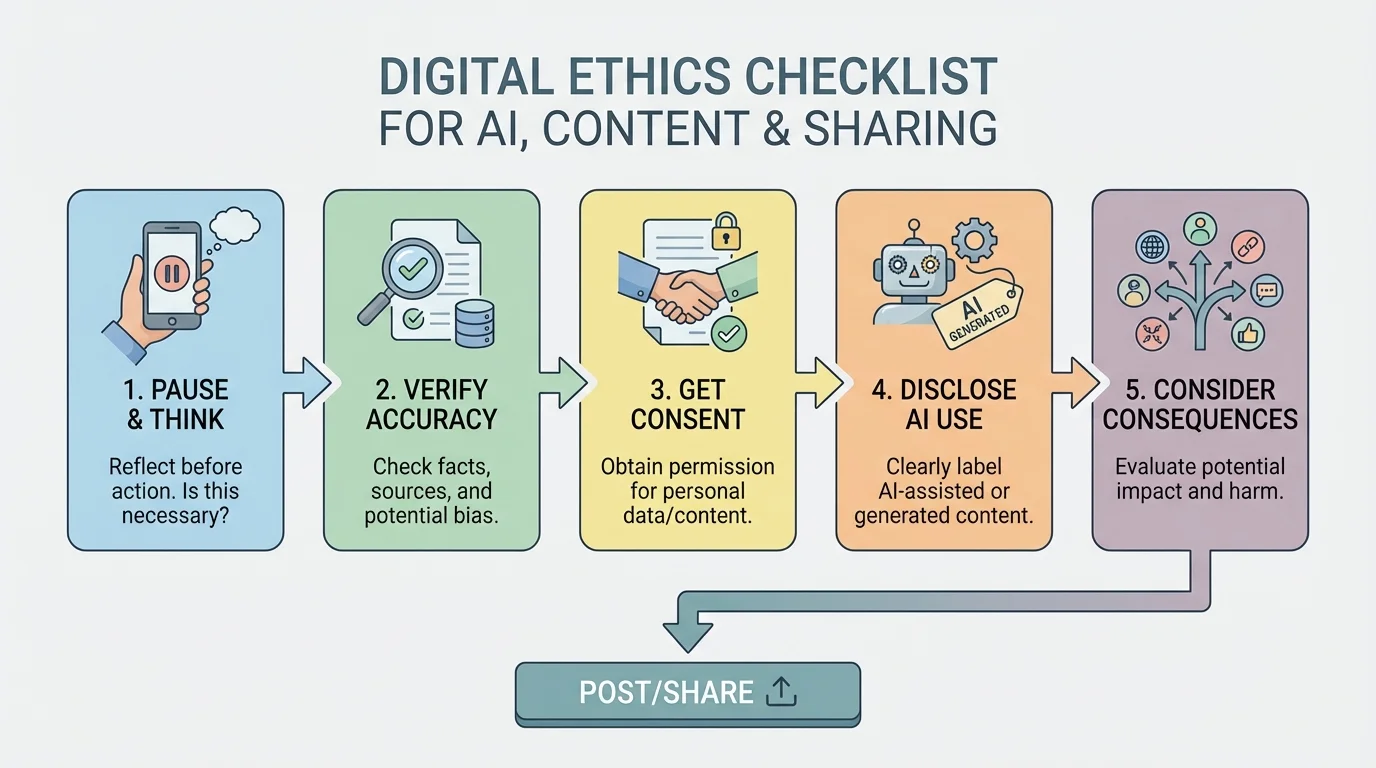

A repeatable checklist helps because digital ethics can feel messy in the moment. The five-part framework in [Figure 3] gives you a practical way to slow down without overthinking every post. Use it for AI outputs, captions, videos, screenshots, and reposts.

Pause. If a post makes you feel instantly angry, excited, or eager to expose someone, wait. Strong emotion often lowers judgment.

Verify. Check facts, dates, sources, and whether media is real and in context.

Get consent. Ask before posting identifiable content about someone else.

Disclose. Be honest if AI significantly created, edited, or altered the content.

Consider consequences. Ask who could be helped, embarrassed, misled, or harmed by this post.

This framework is not meant to make you afraid of posting. It is meant to make your choices stronger. Over time, it becomes a habit. You stop seeing ethics as a barrier and start seeing it as part of good judgment.
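If it helps to see the five steps as a routine, here is a minimal sketch of the checklist in code. The names `PostCheck` and `unresolved_steps` are invented for this example, and the yes/no answers are a simplification: real decisions involve judgment that a true/false flag cannot capture.

```python
from dataclasses import dataclass


@dataclass
class PostCheck:
    """Honest yes/no answers to the five checklist steps for one planned post."""
    paused: bool                   # waited out a strong first reaction
    verified: bool                 # facts, dates, sources, and context checked
    has_consent: bool              # identifiable people agreed (True if no one else appears)
    ai_disclosed: bool             # AI use is disclosed (True if AI played no significant role)
    consequences_considered: bool  # thought about who could be helped or harmed


def unresolved_steps(check: PostCheck) -> list[str]:
    """Return the checklist steps that still need attention, in framework order."""
    steps = [
        ("Pause", check.paused),
        ("Verify", check.verified),
        ("Get consent", check.has_consent),
        ("Disclose", check.ai_disclosed),
        ("Consider consequences", check.consequences_considered),
    ]
    return [name for name, done in steps if not done]


if __name__ == "__main__":
    draft = PostCheck(paused=True, verified=False, has_consent=True,
                      ai_disclosed=True, consequences_considered=False)
    print(unresolved_steps(draft))  # ['Verify', 'Consider consequences']
```

An empty list does not mean a post is automatically fine. It only means you have worked through each step before sharing.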

Quick scenario check

A trending account posts a dramatic clip claiming a local business discriminated against a customer. You feel ready to repost it with criticism.

Step 1: Pause.

You notice your reaction is emotional because the clip is upsetting.

Step 2: Verify.

You search for the full video and find out the clip was heavily cut.

Step 3: Consider consequences.

A repost could direct harassment toward workers based on incomplete information.

Step 4: Choose responsibly.

You do not repost the clip. If you discuss it at all, you explain that the viral version lacked context.

The ethical choice is not silence forever. It is refusing to amplify something misleading.

Later, when you are deciding whether to share AI-made content, the same structure still works. Your goal is not perfection. It is informed responsibility.

Social media bio and photos: If you use AI to create a profile photo that looks like a real photograph of you but is not actually you, think carefully. If it could mislead someone in a serious context such as work, dating, or activism, it crosses an ethical line.

Job applications: Using AI to brainstorm bullet points for your experience can be fine. Letting AI invent experience you do not have is dishonest. Employers care about skills, but they also care about trust.

Personal statements and introductions: If AI helps you organize your ideas, that can support your process. If it replaces your voice entirely, the result may sound polished but false. People are often better at sensing authenticity than you might expect.

Meme pages and commentary accounts: Humor is not a free pass. If the joke depends on exposing a private person, using a fake quote, or encouraging pile-ons, the content may be entertaining to some viewers but still unethical.

Advocacy and activism: Caring about an issue does not excuse bad verification. A cause is hurt, not helped, when supporters spread false examples. Credibility matters. As the credibility checklist in [Figure 2] makes clear, even worthy causes need accurate evidence.

Friend group screenshots: Posting private messages for public entertainment or revenge may feel justified in the moment. But it breaks trust and can escalate conflict far beyond the original issue.

Your online reputation is not built by one perfect post. It is built by patterns. People learn whether you are careful with facts, respectful with other people's stories, honest about AI use, and willing to correct mistakes. Trustworthy digital citizens do not just avoid obvious harm. They also repair harm when they cause it.

If you post something inaccurate, correct it clearly. If you shared content without permission, remove it and apologize. If AI helped more than you first admitted, update the description. Accountability is part of ethics. Owning a mistake often strengthens trust more than pretending nothing happened.

You can also set personal standards before problems arise. Decide now that you will not post private screenshots without permission. Decide that you will not use AI to impersonate a person. Decide that you will not share breaking news until it is verified. Pre-deciding makes hard moments easier.

Your digital choices affect both safety and opportunity. The same habits that prevent harm online also help you build a reputation others can rely on in work, community, and personal relationships.

Digital ethics is ultimately about character in a high-speed environment. Technology changes quickly. The core questions do not. Be accurate. Be fair. Be honest about what is real and how it was made. Respect consent. Protect privacy. And remember that every click, post, and share teaches other people something about who you are.