A small sound from a speaker can suddenly become an ear-splitting squeal. A human body can keep its temperature at about \(37\,^{\circ}\textrm{C}\) even when the weather changes. A forest can recover after a fire, but a climate system can also cross a threshold and shift in ways that are hard to reverse. These situations seem very different, yet they are connected by one big scientific idea: feedback. Feedback helps explain why some systems resist change and stay stable, while others amplify change and become unstable.

In science, a system is a group of interacting parts that form a whole. A system can be natural, such as a cell, a lake, a forest, the atmosphere, or the human body. It can also be built, such as a car engine, a bridge, a traffic network, a heating system, or an electrical grid. Scientists study systems by identifying their parts, how those parts interact, and what enters or leaves the system.

A system usually has inputs, outputs, and internal processes. Sunlight is an input to Earth's climate system. Heat leaving Earth is an output. In a school building, electricity and fuel are inputs, while light, heat, and motion are outputs. The boundary of a system is the line scientists draw around what they are studying. That boundary may be physical, like the walls of a greenhouse, or conceptual, like the chosen limits of an ecosystem.

System means a set of connected parts that influence one another.

Stability means a system tends to remain near a certain condition or return to it after a disturbance.

Feedback means the result of a process influences that same process later.

Rate of change means how fast a quantity changes over time.

When scientists ask why a system changes or stays steady, they are really asking about causes, interactions, and feedback. They also ask whether the system is in equilibrium, whether it can recover from a disturbance, and how quickly it responds. Those questions matter in biology, chemistry, physics, Earth science, and engineering.

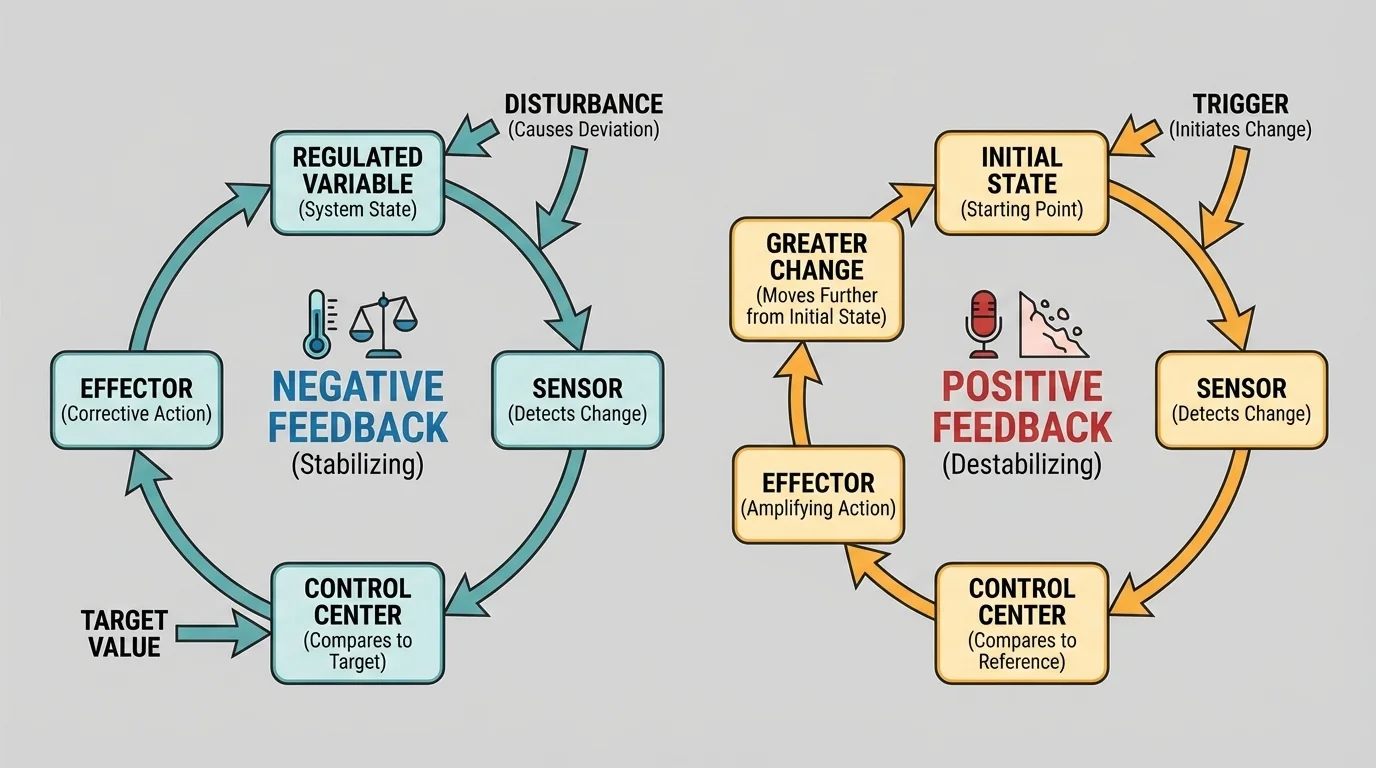

A feedback loop occurs when part of a system's output returns and affects the system again. As [Figure 1] shows, the effect can push the system back toward a target condition or drive it farther away from that condition. This is why feedback is one of the central ideas in studying stability and change.

There are two main kinds of feedback. Negative feedback reduces a change. If a system moves away from a target state, negative feedback acts in the opposite direction and tends to restore balance. Positive feedback increases a change. If a system begins to shift, positive feedback pushes it even farther in the same direction.

The word "negative" here does not mean bad, and "positive" does not mean good. These words describe the direction of the feedback effect relative to the original change. Negative feedback is usually stabilizing, though it does not always work perfectly. Positive feedback can be useful in some situations, but it often leads to rapid change or instability if nothing limits it.

Many students first meet feedback in everyday technology. If you speak too close to a microphone connected to speakers, the sound from the speaker re-enters the microphone, is amplified again, and repeats. The result is a loud squeal. That is positive feedback. If you set a thermostat to hold a room near a chosen temperature, the thermostat detects the difference between the actual temperature and the set point and adjusts the heater. That is negative feedback.

The same basic feedback idea appears in fields that seem unrelated. It helps explain body temperature, climate shifts, automated braking systems, and even why internet traffic can suddenly slow down.

Because feedback appears in so many places, it gives science a powerful way to construct explanations. Rather than memorizing isolated facts, scientists look for patterns: what starts the change, what responds, and whether the response weakens or strengthens the original change.

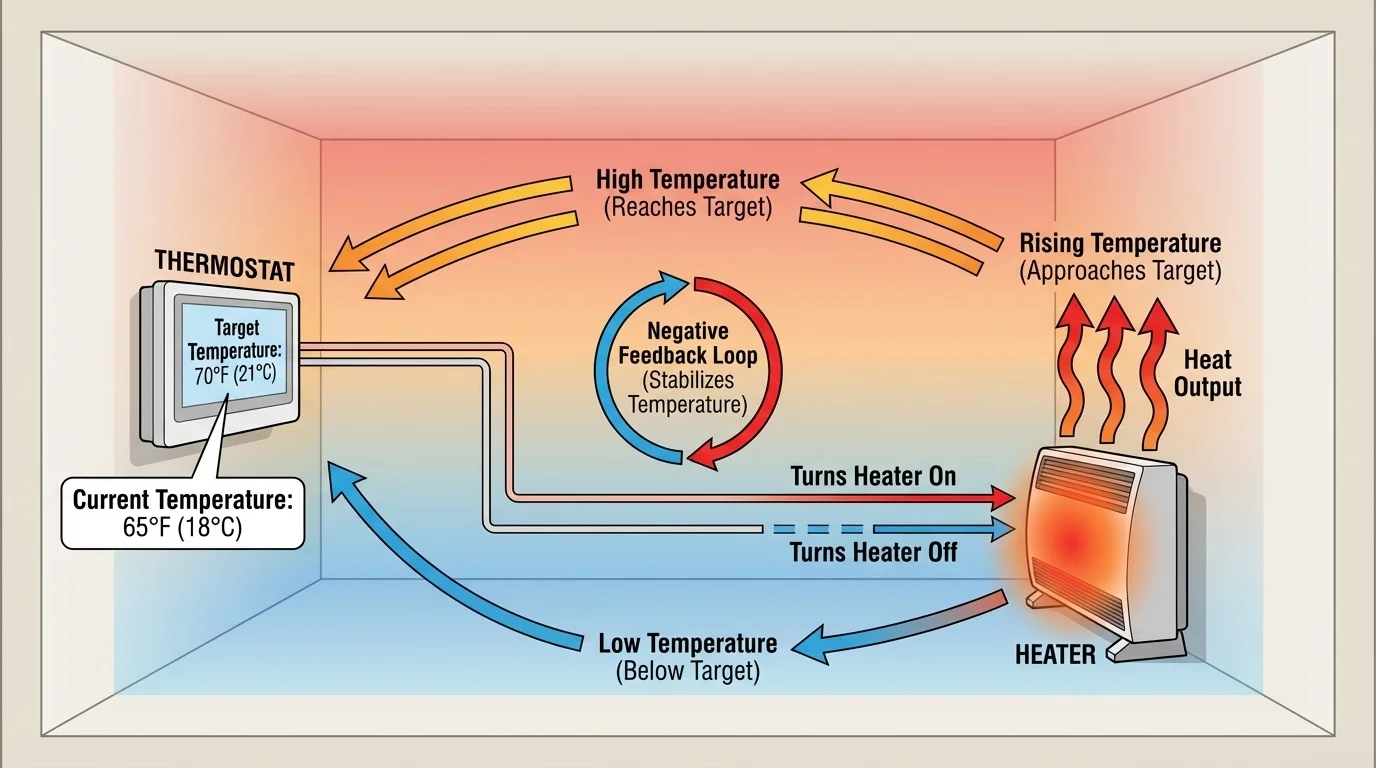

Negative feedback is one of the main reasons systems stay stable. In a negative feedback loop, a deviation from a target causes a response that reduces that deviation. A familiar example is a thermostat, and [Figure 2] illustrates the basic idea. If the room temperature drops below the set point, the heater turns on. As the room warms, the difference shrinks. When the set point is reached, the heater turns off.

This does not mean the temperature is perfectly constant every second. Real systems usually fluctuate around a target. Stability often means staying within a safe range, not remaining at one exact value. In many systems, negative feedback creates a dynamic balance rather than complete stillness.
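
The thermostat loop can be sketched in a few lines of Python. This is a minimal illustration rather than a model of any real heating system: the function name `simulate_thermostat`, the heater power, the heat-loss rate, the outside temperature, and the 0.5-degree switching band are all assumed values chosen for the example.

```python
def simulate_thermostat(set_point=20.0, start_temp=17.0, outside_temp=5.0,
                        heater_power=1.5, loss_rate=0.05, band=0.5, steps=200):
    """Simulate an on/off thermostat and return the room temperature history.

    All parameter values are illustrative assumptions. Temperatures are in
    degrees Celsius and each step is one arbitrary unit of time.
    """
    temp = start_temp
    heater_on = False
    history = [temp]
    for _ in range(steps):
        # Negative feedback: the heater decision depends on the measured temperature.
        if temp < set_point - band:
            heater_on = True
        elif temp > set_point + band:
            heater_on = False
        heating = heater_power if heater_on else 0.0
        # The room loses heat to the colder outside in proportion to the difference.
        loss = loss_rate * (temp - outside_temp)
        temp += heating - loss
        history.append(temp)
    return history


history = simulate_thermostat()
settled = history[20:]  # skip the initial warm-up
print(f"lowest: {min(settled):.2f}, highest: {max(settled):.2f}")
```

With these assumed numbers, the temperature climbs from 17 toward 20 and then keeps cycling within about one and a half degrees of the set point. It never settles at exactly 20, which is the fluctuation around a target described above.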

Biology provides some of the clearest examples. The human body uses negative feedback to maintain homeostasis, which is the maintenance of relatively stable internal conditions. When body temperature rises, sweating helps cool the body. When body temperature falls, shivering helps produce heat. Blood sugar is also regulated through feedback involving hormones such as insulin and glucagon.

Consider a simple numerical example of a stabilizing response. Suppose a room is set to \(20\,^{\circ}\textrm{C}\). If the room cools to \(17\,^{\circ}\textrm{C}\), the difference from the set point is \(20 - 17 = 3\,^{\circ}\textrm{C}\). A control system detects that \(3\,^{\circ}\textrm{C}\) drop and turns on heating. If the room warms back to \(19\,^{\circ}\textrm{C}\), the remaining difference is only \(1\,^{\circ}\textrm{C}\), so the response can weaken. The shrinking difference shows negative feedback at work.

Dynamic equilibrium describes a situation in which processes continue, but the overall condition stays fairly constant because opposing effects balance one another. A lake may have water flowing in and out, yet its level stays nearly steady. A body constantly produces and loses heat, yet its temperature stays within a narrow range.

Ecosystems can also contain stabilizing feedbacks. If a prey population increases, predators may have more food and their population may grow. More predators then reduce the prey population, which can later reduce predator numbers. This kind of interaction can create cycles rather than a fixed constant value, but it still limits extreme change under many conditions. The balance is not guaranteed, however; habitat destruction or invasive species can disrupt it.

Engineers rely on negative feedback in many designs. Cruise control in a car compares actual speed with target speed. If the car slows on a hill, the engine power increases. If the car speeds up downhill, power decreases. As with the thermostat in [Figure 2], the system continuously corrects errors. Airplane autopilot systems, voltage regulators, and modern manufacturing robots use similar logic.

Positive feedback happens when a change causes more of the same change. As [Figure 3] illustrates, this can produce rapid growth, runaway behavior, or a sudden shift to a new state. Positive feedback is often associated with instability because it magnifies disturbances instead of damping them.

The microphone-speaker squeal is a clear technological example. A small sound enters the microphone, is amplified and played through the speaker, re-enters the microphone louder, and repeats. Each pass through the loop makes the sound stronger until it becomes extreme. Unless the system is limited by distance, reduced gain, or another control, the effect continues to build.
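
One way to picture the squeal is to multiply the sound level by a fixed factor on every trip around the loop. The sketch below does exactly that. The name `feedback_amplitudes`, the idea of a single constant `loop_gain`, and the optional `ceiling` (standing in for the limit of the equipment) are simplifying assumptions for illustration, not a description of real audio hardware.

```python
def feedback_amplitudes(initial=1.0, loop_gain=1.5, passes=10, ceiling=None):
    """Return the sound level after each pass around a feedback loop.

    loop_gain > 1 means each pass comes back louder (positive feedback builds).
    loop_gain < 1 means each pass comes back quieter (the sound dies away).
    ceiling, if given, is a hypothetical maximum level the equipment can produce.
    """
    amplitude = initial
    levels = [amplitude]
    for _ in range(passes):
        amplitude *= loop_gain
        if ceiling is not None:
            amplitude = min(amplitude, ceiling)
        levels.append(amplitude)
    return levels


print(feedback_amplitudes(loop_gain=2.0, passes=3))   # [1.0, 2.0, 4.0, 8.0]
print(feedback_amplitudes(loop_gain=0.5, passes=3))   # [1.0, 0.5, 0.25, 0.125]
```

Moving the microphone away or turning down the volume corresponds to lowering `loop_gain` below 1, which is why those actions stop the squeal.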

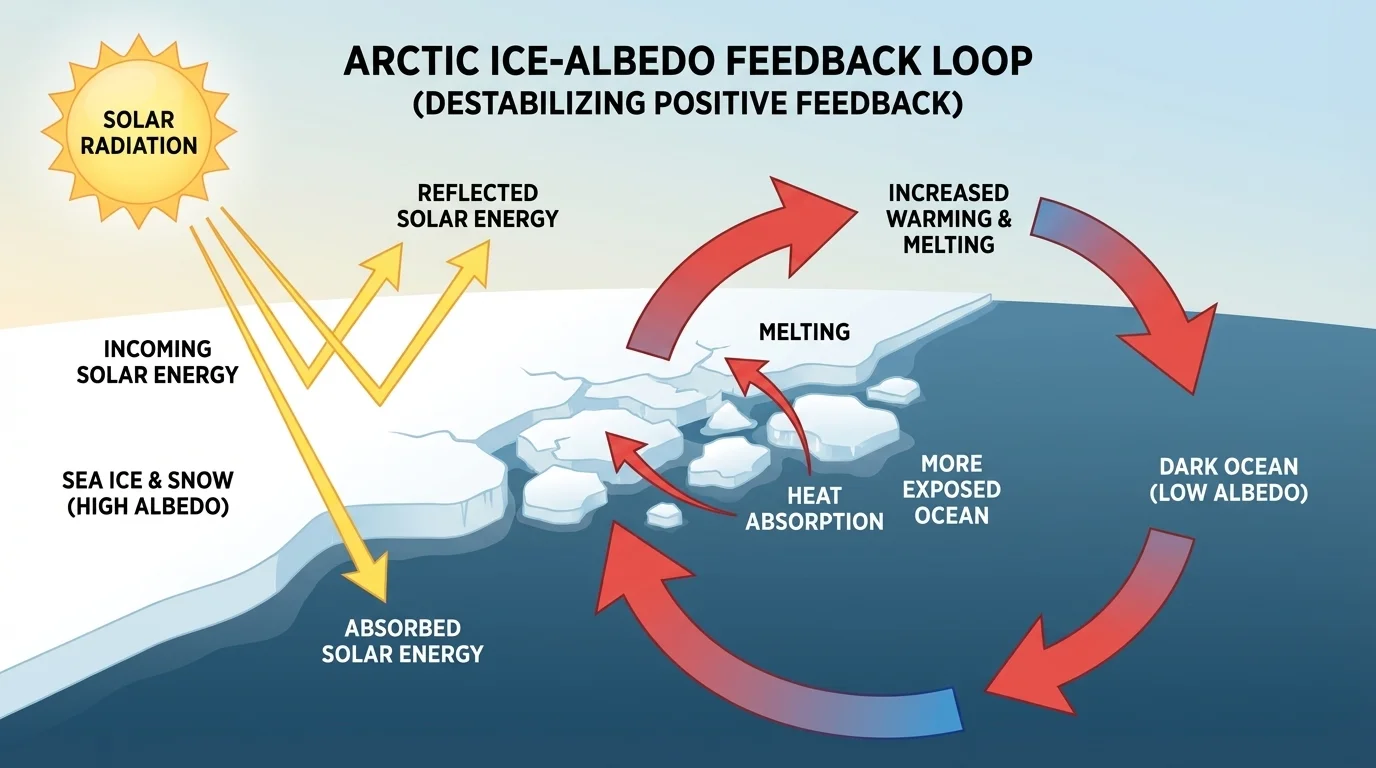

Earth science gives an important natural example: the ice-albedo feedback. Ice and snow reflect a large fraction of sunlight. Dark ocean water absorbs more energy. If warming melts some Arctic ice, less sunlight is reflected and more is absorbed. That extra absorption causes more warming, which melts more ice.

This does not mean all climate change is caused by positive feedback alone. Systems usually contain a mix of feedbacks. But positive feedbacks can make a trend stronger and push the system faster than expected.

Population growth can also show positive feedback. If a population has more reproducing individuals, it can produce even more offspring in the next generation. A simplified expression for exponential growth is \(N(t) = N_0 e^{rt}\), where \(N_0\) is the starting population, \(r\) is the growth rate, and \(t\) is time. If \(N_0 = 100\), \(r = 0.2\), and \(t = 5\), then \(N(5) \approx 100e^1 \approx 272\). The growth speeds up because a larger population creates even more growth.
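
The calculation above can be checked with a short Python sketch. The function name `exponential_population` is chosen here for illustration; the formula and the numbers are the ones given in the text.

```python
import math


def exponential_population(n0, r, t):
    """Evaluate N(t) = N0 * e^(r*t) for starting size n0, growth rate r, time t."""
    return n0 * math.exp(r * t)


# Values from the text: N0 = 100, r = 0.2, t = 5.
print(round(exponential_population(100, 0.2, 5)))  # 272

# Growth added during each successive unit of time.
sizes = [exponential_population(100, 0.2, t) for t in range(6)]
increments = [later - earlier for earlier, later in zip(sizes, sizes[1:])]
print([round(x, 1) for x in increments])
```

Each increment in the second list is larger than the one before it, which shows the self-amplifying pattern: the population gains more individuals in each time step than it did in the previous one.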

Real-world case: A stabilizing system becomes unstable

Traffic flow often works well until congestion reaches a threshold. Then small disturbances can spread backward as stop-and-go waves.

Step 1: Cars travel at a steady speed when spacing is large enough.

Step 2: One driver brakes slightly.

Step 3: Drivers behind react with a delay and brake a bit more strongly.

Step 4: The disturbance grows and becomes a traffic jam even without an accident.

This is a useful reminder that delays in response can turn an apparently stable system into an unstable one.

Some positive feedbacks are useful. Blood clotting is one example: once clotting begins, chemicals attract more platelets to the site, helping seal a wound quickly. But that process must stop at the right time. A feedback that is helpful for a short period can become dangerous if it keeps going.

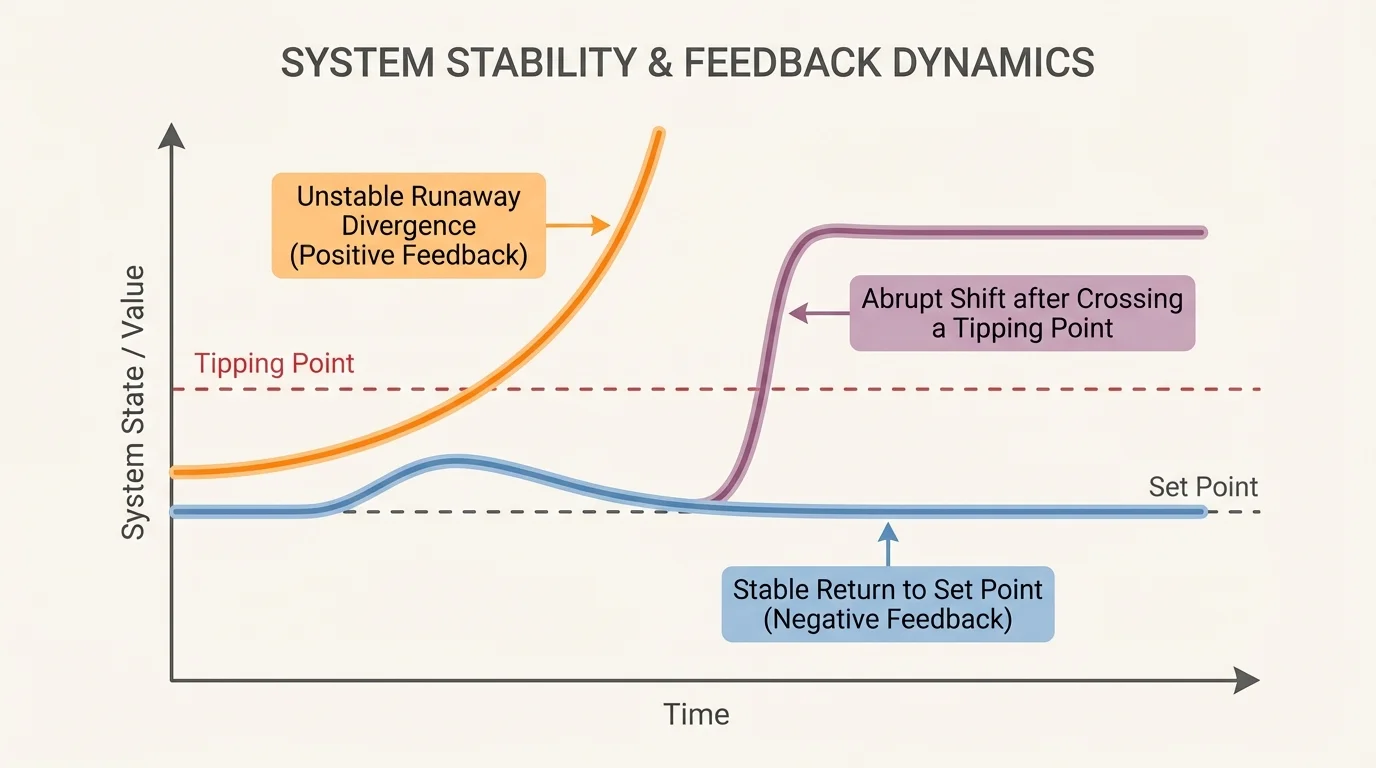

Scientists care not only about whether a system changes, but also about how fast it changes. The rate of change compares a change in quantity to the time over which it happens. In simple form, it can be written as \(\textrm{rate} = \dfrac{\Delta x}{\Delta t}\), where \(\Delta x\) is the change in a quantity and \(\Delta t\) is the change in time. As [Figure 4] shows, systems can return to a target, drift away from it, or shift suddenly after a threshold is crossed.

For example, if the concentration of a pollutant in a river rises from \(4\) units to \(10\) units in \(3\) days, the average rate of change is \(\dfrac{10 - 4}{3} = 2\) units per day. A system with a fast rate of change may be harder to control because there is less time to respond.
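
The same arithmetic can be written as a small Python function. The name `average_rate` and its argument names are chosen for this sketch; it simply divides the change in the quantity by the change in time.

```python
def average_rate(x_start, x_end, t_start, t_end):
    """Return the average rate of change: (change in x) / (change in t)."""
    if t_end == t_start:
        raise ValueError("the time interval must not be zero")
    return (x_end - x_start) / (t_end - t_start)


# River pollutant example from the text: 4 units to 10 units over 3 days.
print(average_rate(4, 10, 0, 3))  # 2.0 units per day
```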

Another key idea is equilibrium. Equilibrium does not always mean nothing is happening. It often means competing processes balance one another. A marble at the bottom of a bowl is in a stable equilibrium: if pushed slightly, it rolls back. A marble balanced on top of a hill is in an unstable equilibrium: a tiny push sends it farther away.

Systems also differ in resilience, which is their ability to recover after disturbance. A resilient forest may regrow after a fire. A fragile coral reef may not recover easily after repeated warming and pollution. If a system crosses a threshold, it may reach a tipping point, where a small additional change triggers a large shift.

Time delays matter greatly. If a correction comes too late, negative feedback may fail to stabilize the system. Think of a driver steering on ice. If the driver overcorrects after each delayed response, the car may weave more and more. The loop is meant to stabilize motion, but delay and overshoot can make it destabilizing.
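
The effect of delay can be explored with a toy simulation. In the sketch below, a controller tries to push a deviation back to zero, but it reacts to a reading that is several steps old. The update rule, the name `simulate_delayed_correction`, and the values of `gain` and `delay` are assumptions made for illustration; they are not taken from any real vehicle or control system.

```python
def simulate_delayed_correction(gain=0.8, delay=0, steps=30, initial=1.0):
    """Track a deviation from target when corrections use an old reading.

    Each step subtracts gain * (the deviation observed `delay` steps ago).
    With delay=0 the correction is based on the current deviation.
    """
    # Pre-fill the record so that delayed readings exist from the first step.
    history = [initial] * (delay + 1)
    for _ in range(steps):
        observed = history[-1 - delay]  # the outdated value the controller sees
        history.append(history[-1] - gain * observed)
    return history[delay:]  # drop the pre-filled padding


prompt_response = simulate_delayed_correction(delay=0)
late_response = simulate_delayed_correction(delay=3)
print(f"no delay, final deviation: {abs(prompt_response[-1]):.6f}")
print(f"3-step delay, largest swing: {max(abs(v) for v in late_response):.1f}")
```

With no delay, the deviation shrinks toward zero. With the same correction strength but a three-step delay, the corrections arrive after the situation has already changed, so the system overshoots and the swings grow, much like the weaving car.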

Recall from earlier science study that cause-and-effect relationships in complex systems are often not linear. A bigger input does not always produce a proportionally bigger output. Interactions, thresholds, and delays can make system behavior surprising.

The graph patterns in [Figure 4] are common across disciplines. A population can level off, explode, or collapse. A chemical reaction can proceed slowly at first and then accelerate. A power grid can absorb a disturbance or experience cascading failure. Looking at change over time is one of the main ways scientists detect which feedbacks are operating.

Natural systems rarely contain just one feedback loop. The water cycle, carbon cycle, climate, food webs, and living organisms all involve many connected loops acting at once. That is why scientific explanations often focus on the dominant feedbacks under particular conditions.

In the human body, regulation of temperature, water balance, blood pressure, and blood glucose all depend on feedback. Homeostasis works because sensors detect conditions, control centers compare them with desired ranges, and effectors produce responses. When one part fails, the whole system can become unstable.

In ecosystems, population size depends on food supply, predation, disease, competition, and habitat. A rise in plant growth may support more herbivores, which may support more predators. But drought, nutrient loss, or pollution can change those relationships. The system may remain stable for years and then shift quickly if a threshold is crossed.

Chemical equilibrium example

Some chemical systems appear stable because forward and reverse reactions occur at equal rates. Consider the reversible reaction between nitrogen dioxide and dinitrogen tetroxide:

\[2\textrm{NO}_2 \rightleftharpoons \textrm{N}_2\textrm{O}_4\]

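If heating causes more \(\textrm{N}_2\textrm{O}_4\) to split into \(\textrm{NO}_2\), the mixture becomes browner, because \(\textrm{NO}_2\) is brown while \(\textrm{N}_2\textrm{O}_4\) is colorless. If the mixture is then cooled, the balance shifts back toward \(\textrm{N}_2\textrm{O}_4\) and the color fades. The visible color change helps scientists detect how the system responds to disturbance.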

Earth's climate system includes feedbacks involving greenhouse gases, clouds, water vapor, ice cover, ocean circulation, and vegetation. Some of these are stabilizing and some are amplifying. Understanding which feedback dominates under certain conditions is essential for predicting future change. The ice-albedo process discussed earlier, as seen in [Figure 3], is one example of an amplifying climate feedback.

Even geological systems show these ideas. Erosion, sediment buildup, volcanic emissions, and long-term carbon cycling all help determine whether Earth's surface and atmosphere shift gradually or experience major change over millions of years.

Built systems are designed, but they still behave like systems in the scientific sense. They contain components, flows of matter or energy, and feedback loops. Engineers study stability because unstable systems can fail, oscillate, overheat, or collapse.

An electrical grid must balance supply and demand almost continuously. If too many devices draw power at once, the frequency or voltage can drift. Control systems respond to keep the grid stable. But if failures spread from one part of the network to another, a cascading outage can occur. That is a destabilizing chain reaction.

Bridges and buildings must also resist feedback effects. Wind pushing on a structure can sometimes produce oscillations. If the motion of the structure changes airflow in a way that increases the force, the vibration can grow. Engineers use dampers and design adjustments to reduce that amplification.

City traffic is another important example. Traffic lights are often timed using feedback from sensors that detect vehicle flow. If the control is too slow or too rigid, congestion builds. If it adjusts well, traffic remains smoother. Public transportation networks, internet systems, and supply chains all depend on balancing flows and preventing runaway disruption.

Control systems and set points

Many built systems operate by comparing a measured condition with a target called a set point. The difference between the actual value and the set point is called an error. Negative feedback reduces that error. Good design depends on choosing how strongly and how quickly the system should respond.
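
A short Python sketch can show why the strength of the response matters. Here the correction at each step is the error multiplied by a number called `gain`. The function name `track_error`, the cruise-control speeds, and the gain values are all hypothetical choices for illustration, and real controllers are more sophisticated than this.

```python
def track_error(set_point, start, gain, steps):
    """Return the error (set point minus actual value) at each step.

    At every step the value is adjusted by gain * error.
    """
    value = start
    errors = []
    for _ in range(steps):
        error = set_point - value
        errors.append(error)
        value += gain * error  # correction proportional to the error
    return errors


# Hypothetical cruise control: target 100 km/h, car currently at 90 km/h.
for gain in (0.5, 1.8, 2.5):
    errors = track_error(set_point=100, start=90, gain=gain, steps=4)
    print(gain, [round(e, 2) for e in errors])
```

In this toy model a moderate gain (0.5) shrinks the error smoothly. A strong gain (1.8) overshoots the target on each step, so the error flips sign while it shrinks. A gain that is too strong (2.5) overshoots by more than the original error, so the error grows instead of shrinking.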

Medical technology uses the same logic. An infusion pump, a pacemaker, or a temperature-control device in a hospital must respond correctly to changing conditions. A response that is too weak may not correct the problem. A response that is too strong or too delayed can create new problems.

Science is not only about naming feedback loops; it is about building evidence-based explanations. Scientists collect data over time, compare variables, and test models. A model may be a diagram, a physical setup, a computer simulation, or a mathematical relationship.

One simple way to describe change is with slope on a graph. If a graph of temperature versus time rises steeply, the rate of warming is high. If it flattens, the rate decreases. A changing slope may suggest that a feedback is strengthening or weakening over time.
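
To see how a changing slope shows up in data, the sketch below computes the slope between each pair of neighboring points. The function name `segment_slopes` and the temperature readings are made up for illustration; they are not measurements.

```python
def segment_slopes(times, values):
    """Return the slope between each pair of consecutive data points."""
    slopes = []
    for i in range(len(times) - 1):
        rise = values[i + 1] - values[i]
        run = times[i + 1] - times[i]
        slopes.append(rise / run)
    return slopes


# Hypothetical readings: time in hours, temperature in degrees Celsius.
hours = [0, 1, 2, 3]
temperatures = [10, 11, 13, 17]
print(segment_slopes(hours, temperatures))  # [1.0, 2.0, 4.0]
```

The slopes in this invented data set keep increasing, so the graph would curve upward. A pattern like that could suggest an amplifying feedback, although real data would need more evidence before drawing that conclusion.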

| System | Main stabilizing factor | Main destabilizing factor | Typical outcome |
|---|---|---|---|
| Human body temperature | Sweating, shivering | Illness, extreme exposure | Usually stable within a range |
| Room heating | Thermostat control | Sensor failure or delay | Set point maintained or oscillation |
| Climate ice cover | Heat loss to space, seasonal cycles | Ice-albedo amplification | Gradual or accelerated change |
| Traffic network | Adaptive signaling | Reaction delays, overcrowding | Smooth flow or jam waves |
| Population growth | Limited resources | Self-amplifying reproduction | Leveling off or rapid increase |

Table 1. Examples of stabilizing and destabilizing factors in natural and built systems.

Scientists often ask the same set of questions: What variable changed first? What responded? Was the response opposite to the original change or in the same direction? How large was the delay? Was there a threshold beyond which the behavior changed sharply? These questions help turn observations into explanations.

"The important thing in science is not so much to obtain new facts as to discover new ways of thinking about them."

— William Lawrence Bragg

That quote fits this topic well because feedback gives scientists a powerful way to think. It connects body systems, climate, machines, ecosystems, and cities through the same underlying logic of interaction, correction, amplification, and change over time.

The conditions that keep a system stable and the factors that determine how fast it changes matter in both natural and built systems because they affect safety, survival, and prediction. A stable airplane control system keeps passengers safe. A stable climate supports agriculture and water supplies. A stable blood glucose system protects organs and health.

At the same time, change is not always bad. Systems must sometimes change in order to adapt. The key scientific question is whether change is controlled, reversible, and within safe limits, or whether it is accelerating toward failure. Understanding feedback helps answer that question.

When scientists and engineers study a system, they are often trying to identify what keeps it in balance, what pushes it out of balance, and how fast those forces act. That is why so much of science deals with explaining both change and stability. The world is full of systems that are moving, reacting, adjusting, and sometimes crossing thresholds. Feedback is one of the main ideas that makes sense of all of it.