A phone warms in your hand, sunscreen promises protection, airport scanners are described as safe, and headlines argue about whether wireless devices cause harm. These claims all involve electromagnetic radiation, but they are not equally trustworthy. Some are based on strong evidence collected over years; others exaggerate a tiny effect, confuse correlation with causation, or leave out critical details such as dose, exposure time, and the kind of radiation involved.

To judge these claims well, you need more than a list of facts. You need to think like a scientist: What exactly is being claimed? What evidence supports it? How was the evidence collected? Are the data consistent with what we know about matter, energy, chemistry, and biological systems? Can the claim be checked against other sources?

A claim can sound scientific because it uses technical words, cites a study, or includes numbers. But those features alone do not guarantee truth. For example, a media report may say that "radiation from everyday devices damages cells." That statement may leave out which type of radiation was studied, how intense it was, whether the experiment used isolated cells instead of whole organisms, and whether the effect was repeated by other researchers.

Scientific literacy means slowing down and separating the claim from the evidence. A strong claim matches the quality and amount of evidence behind it. If the evidence is limited, then the conclusion should also be limited. Good science is careful about uncertainty.

Validity refers to whether a conclusion or claim is actually supported by evidence and sound reasoning. Reliability refers to whether results are consistent and repeatable across trials, measurements, or studies. A study can be reliable, in the sense that its results repeat consistently, and still fail to support a broader conclusion, so reliability alone does not make a claim valid.

This difference matters. Suppose one laboratory finds a small effect from a certain radiation exposure. If other laboratories cannot reproduce it, the finding may not be reliable. If the original study had weak controls or unrealistic conditions, the larger claim may also lack validity.

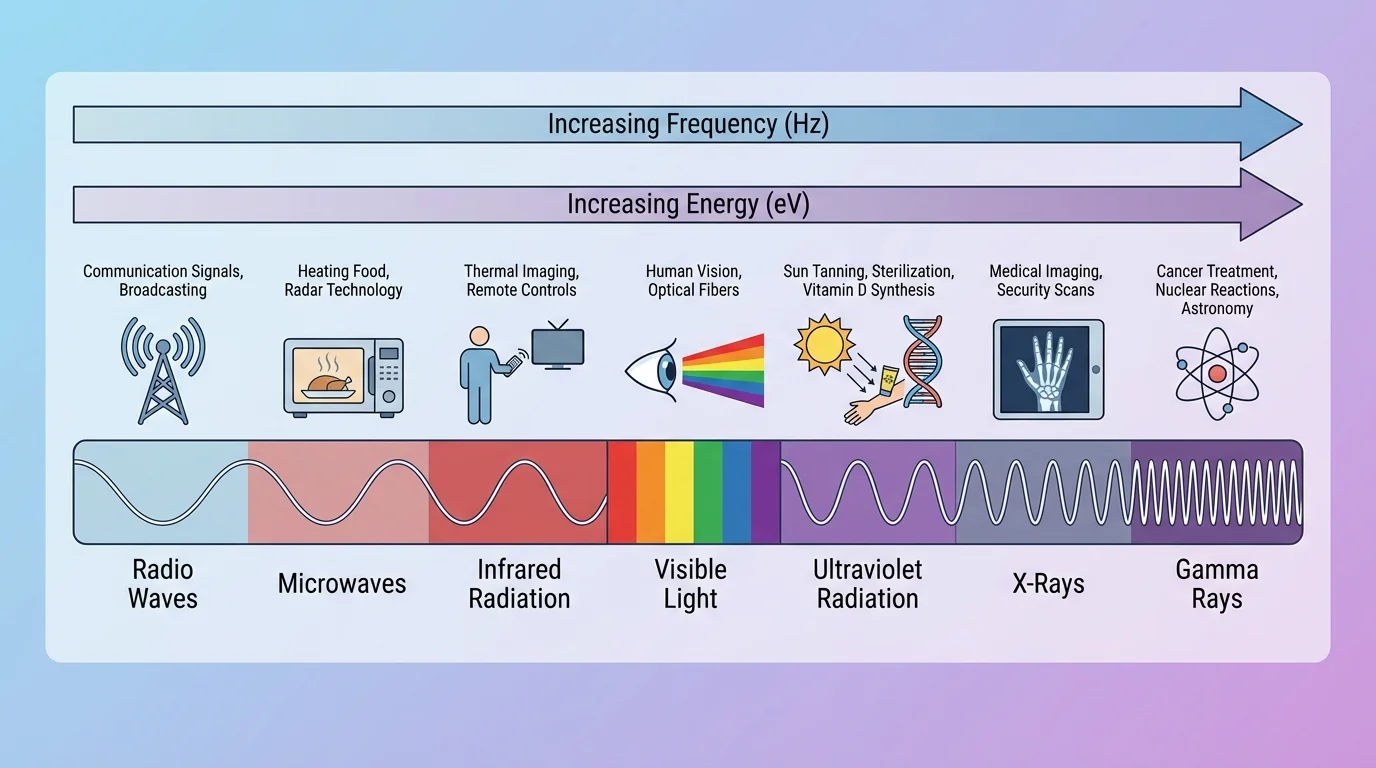

Electromagnetic radiation includes radio waves, microwaves, infrared, visible light, ultraviolet, X-rays, and gamma rays. These forms differ mainly by frequency and wavelength. Higher frequency means more cycles per second, a shorter wavelength, and more energy per photon.

The relationship between frequency and photon energy is given by \(E = hf\), where \(E\) is energy, \(h\) is Planck's constant, and \(f\) is frequency. For a simple comparison, if one photon has frequency \(5 \times 10^{14} \textrm{ Hz}\) and another has frequency \(1 \times 10^{15} \textrm{ Hz}\), the second photon has twice the energy because \(E\) is directly proportional to \(f\).
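The comparison above can be checked numerically. The following is a minimal sketch; the function name `photon_energy` is chosen here for illustration, and the only physical input is Planck's constant.

```python
# Planck's constant in joule-seconds (exact value in the SI system).
PLANCK_H = 6.62607015e-34


def photon_energy(frequency_hz: float) -> float:
    """Return the energy of one photon in joules, using E = h * f."""
    return PLANCK_H * frequency_hz


# The two frequencies used in the comparison in the text.
energy_low = photon_energy(5e14)
energy_high = photon_energy(1e15)
ratio = energy_high / energy_low

print(f"Photon energy at 5e14 Hz: {energy_low:.3e} J")
print(f"Photon energy at 1e15 Hz: {energy_high:.3e} J")
print(f"Ratio of the two energies: {ratio:.1f}")
```

Because \(h\) cancels in the ratio, doubling the frequency doubles the photon energy regardless of the units used.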

This does not mean that every exposure to high-frequency radiation is automatically dangerous or that every low-frequency exposure is harmless. The effect depends on frequency, intensity, duration, whether the radiation is absorbed, and what kind of matter absorbs it.

One major dividing line is between ionizing radiation and non-ionizing radiation. Ionizing radiation has enough energy to remove electrons from atoms or molecules, creating ions. X-rays and gamma rays are ionizing. Radio waves, microwaves, infrared, and visible light are non-ionizing. Ultraviolet radiation sits near the boundary; the highest ultraviolet frequencies can cause ionization, and lower ones can still cause chemical damage without ionizing.

That distinction helps explain why not all "radiation" should be grouped together in one category. A microwave oven, a tanning bed, and a medical X-ray machine all use electromagnetic radiation, but they do not affect matter in the same way.
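One rough way to see the difference among these three devices is to compare photon energies with an ionization threshold. The sketch below makes two assumptions that are not in the text: a threshold of about 10 electronvolts, which is a commonly quoted rough figure rather than a sharp physical boundary, and one representative frequency for each device.

```python
# Planck's constant expressed in electronvolt-seconds.
PLANCK_H_EV = 4.135667696e-15

# ASSUMPTION: about 10 eV is used here as a rough ionization threshold.
# The real boundary depends on the atom or molecule involved.
IONIZATION_THRESHOLD_EV = 10.0


def photon_energy_ev(frequency_hz: float) -> float:
    """Return the energy of one photon in electronvolts (E = h * f)."""
    return PLANCK_H_EV * frequency_hz


def is_ionizing(frequency_hz: float) -> bool:
    """True if one photon carries at least the assumed threshold energy."""
    return photon_energy_ev(frequency_hz) >= IONIZATION_THRESHOLD_EV


# ASSUMPTION: representative, approximate frequencies for each device.
EXAMPLES = {
    "microwave oven": 2.45e9,
    "tanning bed (UV-A)": 8.6e14,
    "medical X-ray": 3e18,
}

for name, frequency in EXAMPLES.items():
    label = "ionizing" if is_ionizing(frequency) else "non-ionizing"
    print(f"{name}: {photon_energy_ev(frequency):.3g} eV per photon, {label}")
```

A label of "non-ionizing" here says only that a single photon is below the assumed threshold. It does not mean harmless: the tanning-bed ultraviolet in this example can still drive chemical changes in skin.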

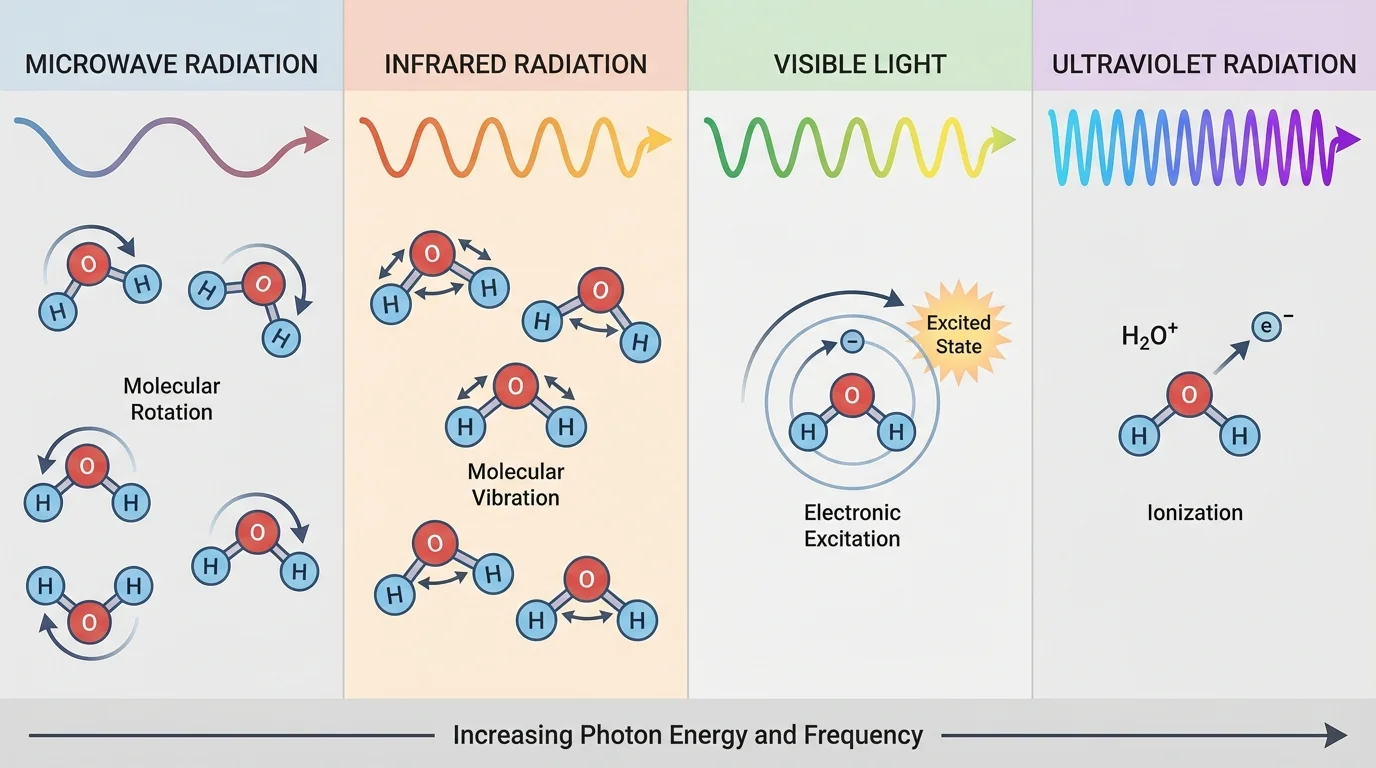

When radiation undergoes absorption, its energy is transferred to matter. That energy can produce different effects depending on the frequency and the material. In some cases it increases thermal motion; in others it changes molecular vibrations, excites electrons, or breaks bonds.

Microwaves are strongly absorbed by liquid water and some other polar substances. This absorption causes molecules to rotate and transfer energy through collisions, which increases temperature. That is why foods containing water heat effectively in a microwave oven. If a cup of water absorbs microwave energy and its temperature rises from \(20^\circ\textrm{C}\) to \(40^\circ\textrm{C}\), the important observed effect is heating, not ionization.
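The heating in this example can be estimated with \(Q = mc\,\Delta T\). The sketch below is only an illustration: the mass of water in the cup (0.25 kg) and the oven frequency (2.45 GHz, a value commonly used by household ovens) are assumptions, not figures from the text.

```python
SPECIFIC_HEAT_WATER = 4186.0   # J/(kg·°C), approximate value for liquid water
PLANCK_H = 6.62607015e-34      # Planck's constant in J·s


def heat_absorbed(mass_kg: float, temp_start_c: float, temp_end_c: float) -> float:
    """Energy in joules for the temperature change, using Q = m * c * delta T."""
    return mass_kg * SPECIFIC_HEAT_WATER * (temp_end_c - temp_start_c)


# ASSUMPTION: the cup holds about 0.25 kg of water.
cup_mass_kg = 0.25
energy_j = heat_absorbed(cup_mass_kg, 20.0, 40.0)

# ASSUMPTION: the oven operates at 2.45 GHz.
photon_energy_j = PLANCK_H * 2.45e9
photon_count = energy_j / photon_energy_j

print(f"Energy absorbed by the water: {energy_j:.0f} J")
print(f"Energy per microwave photon: {photon_energy_j:.2e} J")
print(f"Approximate number of photons absorbed: {photon_count:.1e}")
```

Under these assumptions the water absorbs about 20,930 J, delivered by an enormous number of photons (on the order of \(10^{28}\)), each carrying far too little energy to ionize anything. The effect is heating because it comes from the total absorbed energy, not from the energy of any single photon.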

Infrared radiation is often associated with vibrational motion in molecules. When matter absorbs infrared, bonds such as \(\textrm{O–H}\), \(\textrm{C=O}\), or \(\textrm{C–H}\) can vibrate more strongly. Visible light can excite electrons to higher energy states, which is why pigments absorb some colors and reflect others. Ultraviolet radiation can trigger chemical reactions; for example, it can damage DNA molecules in skin cells, which is one reason excessive UV exposure raises skin cancer risk.

The same frequency can have different effects in different materials because matter has different structures and energy levels. Clear glass transmits much visible light but can block much ultraviolet radiation. Dark clothing absorbs more visible and infrared radiation than light-colored clothing, which often makes it warmer in sunlight.

This is where chemistry becomes essential. Claims about radiation should match how atoms, molecules, and materials actually behave. If a report says that a low-energy non-ionizing source "breaks DNA bonds directly," that should raise questions, because breaking a chemical bond directly requires each absorbed photon to carry enough energy, and photons from low-frequency sources carry far less than that.

Frequency alone is not the whole story

A scientifically sound evaluation considers frequency, energy per photon, total intensity, exposure time, distance from the source, and the material being exposed. A weak source at one frequency may have less effect than a stronger source at another frequency, even if the second has lower photon energy. Real risk depends on the full exposure conditions.

For example, sunlight contains visible, infrared, and ultraviolet radiation. Infrared can warm your skin, visible light allows vision, and ultraviolet can trigger chemical changes in skin cells. One source can therefore produce multiple effects at once.

Validity asks whether the study or argument measures what it claims to measure and whether the conclusion follows from the data. Reliability asks whether the result is stable and reproducible. A single dramatic experiment may attract attention, but science becomes convincing when many careful studies point in the same direction.

Imagine a report claiming that a certain lamp emits harmful ultraviolet radiation. To judge validity, you would ask whether the lamp actually emits UV, whether the instrument was calibrated, whether exposure levels were realistic, and whether the measured UV was compared with known safety limits. To judge reliability, you would ask whether repeated measurements gave similar results and whether other investigators found the same thing.
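The reliability question about repeated measurements can be made concrete with a simple spread calculation. The readings below are hypothetical values invented for illustration, in arbitrary units; they are not measurements of any real lamp.

```python
from statistics import mean, stdev

# HYPOTHETICAL repeated UV readings from the same lamp at the same distance
# (arbitrary units, invented for this illustration).
readings = [0.42, 0.45, 0.43, 0.44, 0.41]


def relative_spread(values: list[float]) -> float:
    """Sample standard deviation divided by the mean (a unitless spread)."""
    return stdev(values) / mean(values)


average = mean(readings)
spread = relative_spread(readings)

print(f"Mean reading: {average:.3f}")
print(f"Relative spread: {spread:.1%}")
```

A small relative spread suggests the measurement is repeatable. It says nothing about validity: an uncalibrated instrument can give very consistent readings that are all wrong.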

Reliability also applies to media reporting. A trustworthy report quotes the study accurately, includes limitations, and avoids overstating conclusions. A less reliable report may use emotional language such as "shocking," "deadly," or "proven" when the actual study was small or preliminary.

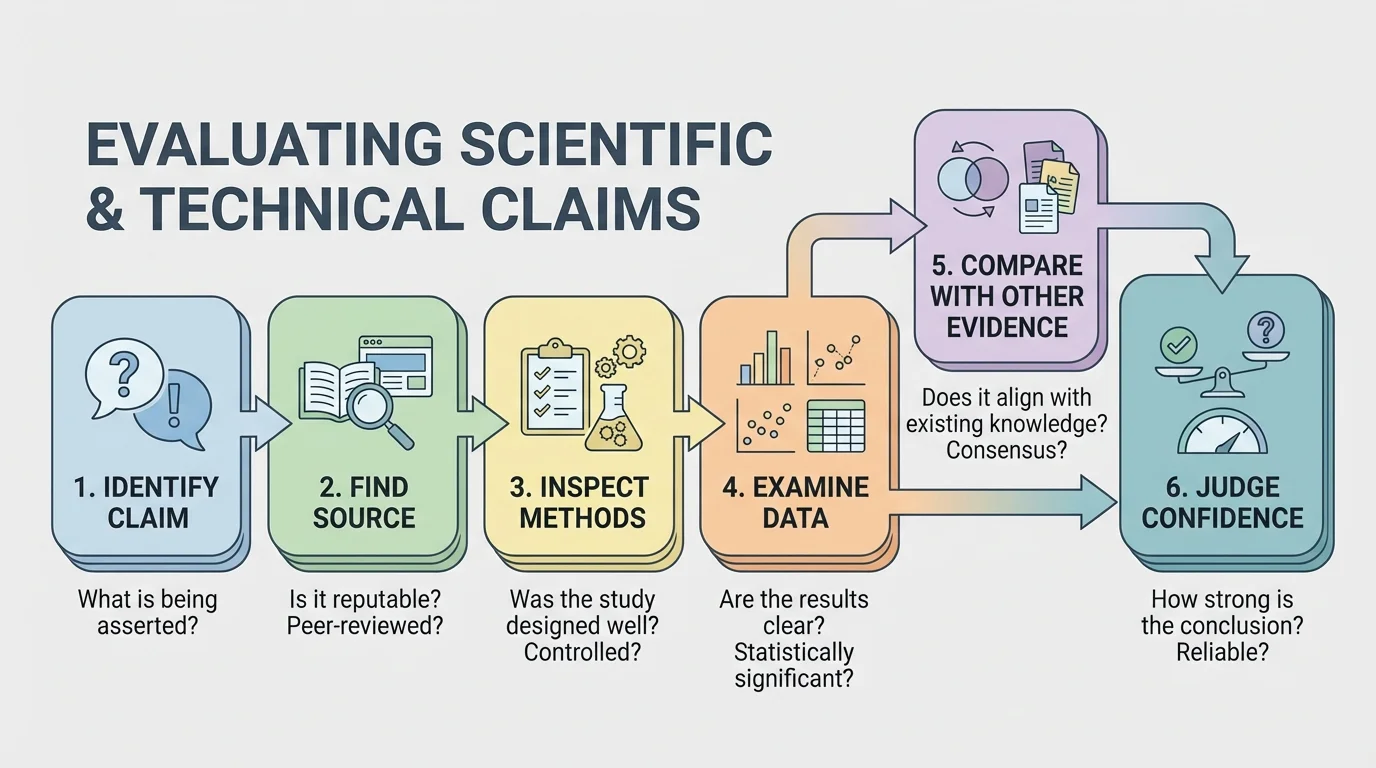

A systematic evaluation helps prevent persuasive wording from replacing evidence. Start by identifying the exact claim. Then ask where it came from: a peer-reviewed journal article, a government report, a university press release, a company advertisement, or a social media post.

Next, examine the authors and funding. Researchers can do excellent work even when funded by industry or advocacy groups, but funding can create bias in how questions are framed, how results are emphasized, or whether negative results are publicized. That does not automatically invalidate a study, but it should make you look carefully at methods and transparency.

Then evaluate the methodology. Was it an experiment, an observational study, a simulation, or a review article? Did it include a control group? Were the sample sizes large enough? Were variables controlled? If a biological effect was tested, were the exposure levels realistic compared with everyday life?

Finally, inspect the data and conclusions. Do the results show a large effect or a tiny one? Is there uncertainty? Did the authors claim only what their data support, or did they generalize too far? Strong studies often include error bars, confidence intervals, repeated trials, and a discussion of limitations.

A common problem is confusing correlation with causation. If two things occur together, that does not prove one caused the other. For instance, if people who spend more time outdoors have higher rates of sun-related skin damage, the likely cause is not simply "being outdoors" in general, but exposure to ultraviolet radiation, especially without protection. To establish causation, scientists look for mechanism, consistency, dose-response patterns, and control of other variables.

Scientific papers often sound more cautious than the headlines written about them. A paper may say the evidence suggests a possible association under certain conditions, while a headline may turn that into a direct cause-and-effect statement.

Another key question is whether the source discusses uncertainty honestly. In science, uncertainty is not weakness; it is part of accurate reporting. A source that pretends every result is final may be less trustworthy than one that openly explains what is known, what is still uncertain, and what evidence would strengthen the conclusion.

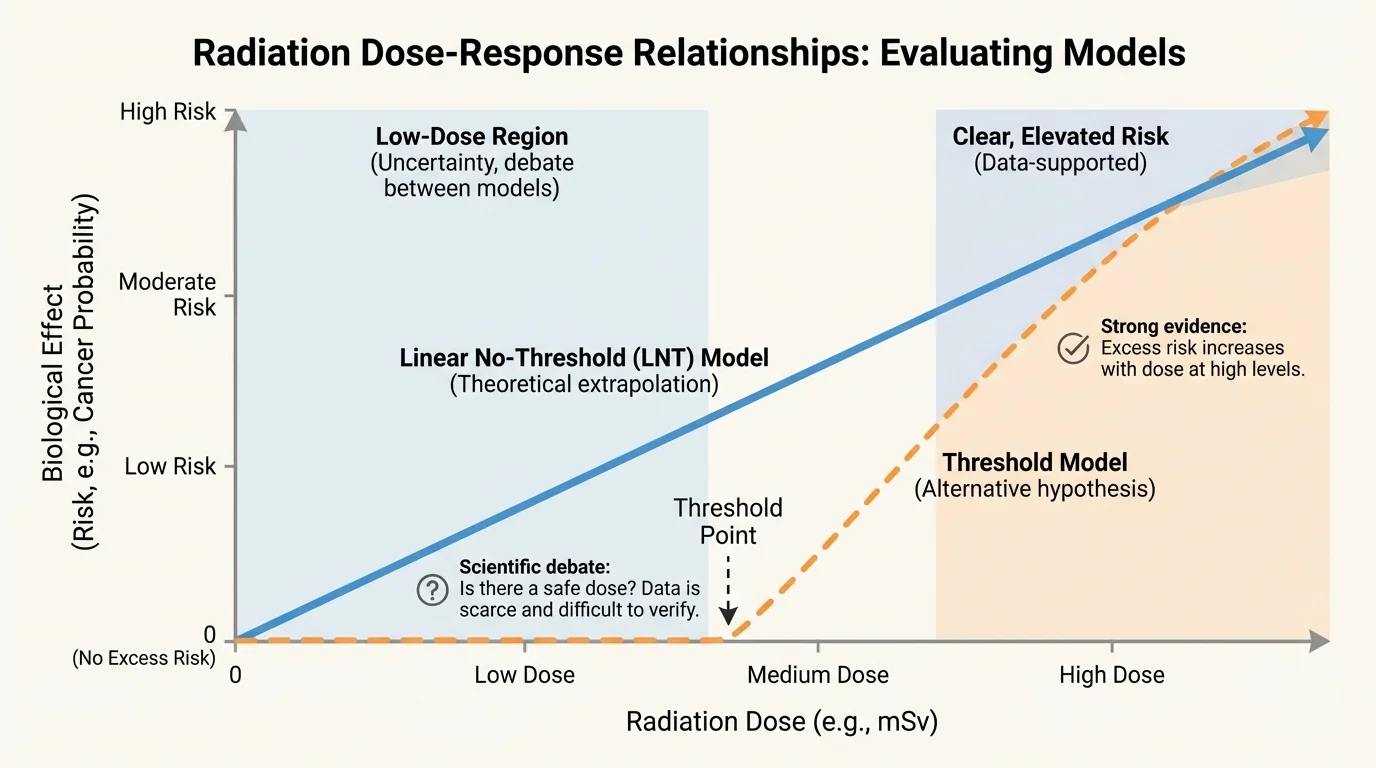

As Figure 4 illustrates with a typical dose-response pattern, the same frequency can lead to different outcomes at different doses. That is why reading a graph or data table matters more than reacting to a dramatic headline. Ask what the axes measure, what units are used, and whether the graph starts at zero or is visually exaggerated.

Suppose a graph shows skin damage increasing with ultraviolet exposure. If the horizontal axis is exposure time and the vertical axis is number of damaged cells, you should ask whether the measurements were taken under realistic sunlight conditions, whether sunscreen was used, and whether the increase was statistically meaningful or just random fluctuation.

Quantitative reasoning also helps. Intensity often changes with distance from a source. In many situations, the intensity follows an inverse-square type pattern: \[I \propto \frac{1}{r^2}\] where \(I\) is intensity and \(r\) is distance. If you move from \(1 \textrm{ m}\) to \(2 \textrm{ m}\) from a small source, the intensity may fall to about \(\dfrac{1}{4}\) of its original value. This does not apply perfectly in every real setting, but it shows why distance matters in exposure claims.
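The inverse-square pattern is easy to explore numerically. This is a minimal sketch for an idealized small source radiating equally in all directions; the function name `relative_intensity` is chosen here for illustration.

```python
def relative_intensity(r_initial_m: float, r_final_m: float) -> float:
    """Intensity at r_final as a fraction of the intensity at r_initial.

    Assumes an ideal point-like source, so that I is proportional to 1 / r**2.
    """
    if r_initial_m <= 0 or r_final_m <= 0:
        raise ValueError("distances must be positive")
    return (r_initial_m / r_final_m) ** 2


# Moving from 1 m to 2 m, as in the example in the text.
print(relative_intensity(1.0, 2.0))

# Moving from 1 m to several other distances.
for distance_m in (0.5, 3.0, 10.0):
    fraction = relative_intensity(1.0, distance_m)
    print(f"{distance_m} m: {fraction:.3f} of the intensity at 1 m")
```

Halving the distance multiplies the intensity by four under the same idealization, which is why a reported exposure value means little without the distance at which it was measured.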

Units matter too. Power might be reported in watts, energy in joules, and frequency in hertz. A report that switches units carelessly can confuse readers. If one source discusses frequency and another discusses total absorbed energy, they are not necessarily contradicting each other; they may be describing different aspects of the same situation.

Later, when comparing evidence, return to the dose-response pattern: claims about "danger" often become more accurate when they specify dose, duration, and threshold rather than treating exposure as simply present or absent.

Consider the claim, "Microwave ovens make food radioactive." This claim is not valid. Microwave ovens use non-ionizing radiation to heat food, mainly by increasing molecular motion in substances such as water. The radiation is not nuclear radiation, and it does not make the food emit radiation afterward. A better claim would be that microwave ovens transfer energy into food by electromagnetic waves, causing heating.

Now consider, "Ultraviolet light can damage skin." This claim is strongly supported. It is consistent with the known chemistry of DNA damage, with laboratory evidence, and with long-term population data on skin cancer risk. Here, validity is strong because there is a known mechanism and multiple lines of evidence. Reliability is also strong because many studies support the result.

A more complicated claim is, "Cell phones cause cancer." This cannot be judged by one headline. Cell phones emit radiofrequency radiation, which is non-ionizing. That means any proposed harm must fit non-ionizing mechanisms, such as heating or indirect biological effects, not the direct ionization that X-rays cause. To evaluate the claim, scientists compare animal studies, epidemiological studies, exposure measurements, and physical limits on energy transfer. A careful conclusion is stronger than a yes-or-no slogan.

| Claim | Relevant radiation | Main mechanism to check | Evidence pattern |
|---|---|---|---|
| Microwave ovens make food radioactive | Microwaves | Heating of polar molecules | Claim is not supported by physics |
| UV exposure can damage skin | Ultraviolet | Chemical damage to biomolecules | Strong evidence from multiple sources |
| Infrared cameras see through walls | Infrared | Detection of heat emission | Usually misleading; they detect surface temperature patterns |
| Medical X-rays increase radiation exposure | X-rays | Ionization in tissues | Supported, but risk depends on dose and benefit |

Table 1. Examples of radiation-related claims and the scientific mechanisms and evidence patterns used to evaluate them.

Notice the pattern: a claim becomes more believable when it matches known physics and chemistry, uses realistic exposure conditions, and is supported by multiple independent sources.

Verification means checking whether a claim agrees with direct data or trusted references. If a media article mentions a study, look for the original paper or abstract. If it gives a numerical value, compare that number with values reported by health agencies, scientific societies, or government laboratories.

You can also verify claims using basic scientific relationships. If a source claims that lower-frequency radiation has more energy per photon than higher-frequency radiation, you can reject that because \(E = hf\) shows that energy increases with frequency. If a source claims that a lamp emits mostly visible light but also causes strong ionization at ordinary exposure, that claim deserves careful scrutiny because visible light is generally non-ionizing.

Case study: checking a UV safety claim

A news report says that a new classroom lamp emits "dangerous UV levels." How could you evaluate that?

Step 1: Identify the exact claim.

Is the report claiming that the lamp emits any ultraviolet radiation, or that it emits enough UV to exceed safety guidelines?

Step 2: Find the source and method.

Look for the original measurement report. Check what instrument was used, whether it was calibrated, and how far from the lamp the measurement was taken.

Step 3: Compare with reference values.

If the measured UV intensity is reported, compare it with accepted exposure limits and with ordinary sunlight exposure.

Step 4: Judge the conclusion.

If the lamp emits a very small amount of UV far below safety limits, the word "dangerous" is not justified even if the lamp emits some UV.

The best conclusion may be that the lamp emits detectable ultraviolet radiation, but the evidence does not support the stronger claim that normal use is hazardous.
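The comparison in Steps 3 and 4 can be sketched as a small decision function. Everything here is hypothetical: the function name, the wording of the verdicts, and the example numbers are placeholders, and a real evaluation would use the applicable published exposure limit in matching units.

```python
def assess_uv_claim(measured_uv: float, safety_limit: float) -> str:
    """Compare a measured UV level with a reference limit (same units)."""
    if safety_limit <= 0:
        raise ValueError("safety_limit must be positive")
    if measured_uv <= 0:
        return "No UV detected; the claim is not supported."
    if measured_uv < safety_limit:
        fraction = measured_uv / safety_limit
        return (f"UV detected at {fraction:.0%} of the limit; "
                "'dangerous' is not justified.")
    return "UV at or above the limit; the claim deserves serious attention."


# HYPOTHETICAL values in arbitrary units, for illustration only.
print(assess_uv_claim(measured_uv=0.02, safety_limit=1.0))
print(assess_uv_claim(measured_uv=1.5, safety_limit=1.0))
```

The middle branch matches the case described above: a lamp can emit detectable ultraviolet radiation without supporting the stronger claim that normal use is hazardous.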

Verification does not always mean proving a claim true or false by yourself. Often it means checking whether the claim is consistent with trusted measurements, accepted models, and the consensus of experts.

Real scientific questions are rarely answered by one study. To synthesize evidence means combining results from different methods while noticing how each method contributes something different. Laboratory experiments can reveal mechanisms. Observational studies can show patterns in populations. Engineering measurements can determine actual exposure levels.

Suppose three sources discuss radiofrequency exposure. One laboratory study reports a small cellular effect at high intensity. One population study finds no clear increase in disease. One engineering report shows that ordinary device exposure is much lower than the laboratory condition. A strong synthesis does not ignore any one of these. Instead, it asks how they fit together.

The laboratory study may suggest a question worth further study, but if the exposure was far above normal use, it may not justify broad real-world claims. The population study may be more realistic but may also be limited by many confounding variables. The engineering report may help bridge the two by showing whether the tested conditions match real life. When these are combined, the conclusion becomes more careful and more useful.

This is why a single dramatic result should not outweigh an entire body of evidence. The most dependable scientific judgments often come from convergence: different methods, different researchers, and different settings all pointing in the same general direction.

You already know that scientific explanations depend on evidence, not authority alone. Here, that principle extends further: not only should you ask for evidence, but you should also ask whether the evidence is measured well, interpreted correctly, and confirmed by other work.

As seen earlier in the evaluation flowchart, synthesis is the stage where separate pieces of evidence are compared rather than treated as isolated facts. That comparison often reveals that two sources are not really disagreeing; they are answering slightly different questions.

In medicine, X-rays and CT scans use ionizing radiation, so doctors weigh benefit against risk. The radiation can help detect fractures, infections, or tumors, but unnecessary exposure is avoided. A scientifically sound claim here is not "X-rays are bad" or "X-rays are harmless." A better claim is that medical imaging has benefits and risks, and the decision depends on dose and clinical need.

In public health, sunscreen recommendations are based on strong evidence about ultraviolet absorption and skin protection. Sunscreen contains molecules that absorb or scatter UV radiation, reducing the amount that reaches living tissue. That is an example of scientific knowledge being applied to reduce harm.

In engineering, wireless communication devices are designed with power limits and testing standards. Evaluating claims about safety involves both physics and biology: how much energy is emitted, how much is absorbed by tissue, and whether observed effects are reproducible. Here reliability matters because measurements and safety tests must be consistent.

Case study: comparing two headlines

Headline A says, "Phone radiation proven dangerous." Headline B says, "New study explores possible biological effect under high exposure conditions."

Step 1: Compare the certainty of the language.

"Proven dangerous" is much stronger than "explores possible effect." Scientific writing usually avoids absolute wording unless evidence is overwhelming.

Step 2: Check what was studied.

If the study used isolated cells and unusually intense exposure, it may not justify claims about everyday phone use.

Step 3: Compare with broader evidence.

One new study should be weighed against existing reviews, exposure data, and replication by other groups.

Headline B is more likely to reflect how science actually works: cautious, specific, and open to further testing.

These examples show that evaluating radiation claims is not about fear or dismissal. It is about matching statements to mechanisms, measurements, and the strength of evidence.

A strong conclusion uses words that match the evidence. Instead of saying "all radiation is dangerous," say which radiation, under what conditions, at what dose, and with what observed effect. Instead of saying "there is no risk," say whether current evidence shows low risk, uncertain risk, or risk only above certain exposure levels.

Scientific conclusions should also separate established facts from open questions. For example, it is well established that ultraviolet radiation can damage skin and that X-rays are ionizing. It is more complex to judge certain low-level exposure claims when results are mixed or mechanisms are uncertain. Good science communicates both confidence and limits.

When you evaluate a published claim, look for alignment among physical principles, chemical behavior, data quality, reproducibility, and agreement with other studies. If several of these are weak, confidence should be low. If all of them are strong, confidence rises.

"Extraordinary claims require extraordinary evidence."

— A core principle of scientific skepticism

That principle is especially useful in media reports about electromagnetic radiation. The more dramatic the claim, the more carefully you should inspect the evidence behind it.