You do not need someone to directly tell you what to believe for your beliefs to start changing. A feed you scroll every day, a creator you trust, a fan community, or a group chat full of strong opinions can quietly shape what seems normal, true, funny, scary, or important. That matters because your online choices can affect your mood, your friendships, your spending, your safety, and the way you treat other people.

Think about how often you get information from short videos, posts, livestreams, comment sections, or recommendation pages. If you keep seeing the same kind of message, it can begin to feel like "everyone knows this" even when it is only one slice of the internet. That is one reason media literacy is a life skill, not just a school skill. It helps you make better decisions about what to trust, what to share, what to buy, and what kind of person you want to be online.

Media can shape behavior in small ways and big ways. A small way might be copying slang, a fashion trend, or a study routine because people online make it look appealing. A bigger way might be joining a pile-on against someone, trying a risky challenge, believing a rumor about a health product, or assuming a whole group of people is bad because of repeated negative posts.

Media includes messages shared through videos, posts, ads, podcasts, livestreams, articles, memes, and images. Online communities are groups of people who gather on platforms, servers, forums, chats, or fan spaces around shared interests or identities. Bias is a leaning or preference that affects how information is created, chosen, or interpreted.

When you understand how influence works, you are less likely to be pushed around by it. You can still enjoy your favorite creators and communities, but you can do it with your eyes open.

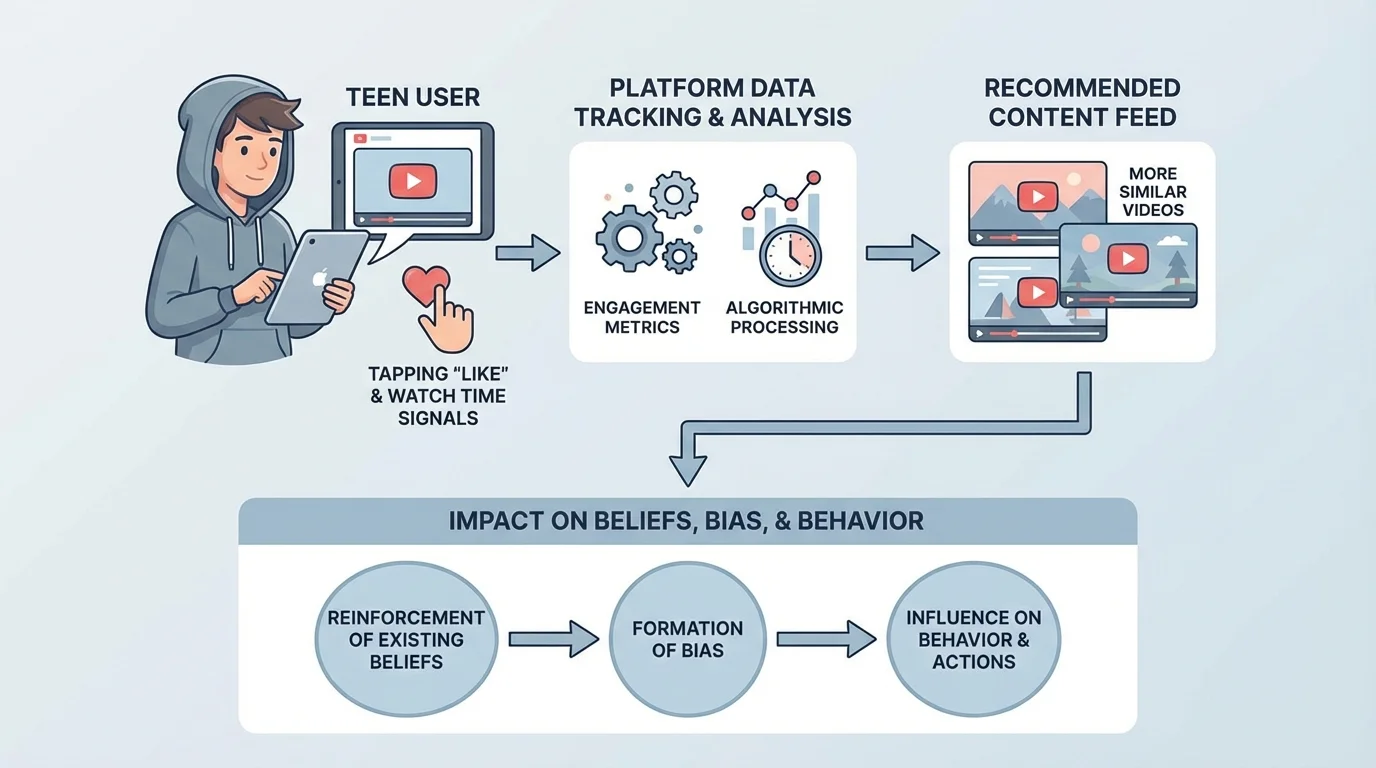

A platform's recommendation algorithm is one major reason your online world can start feeling narrow. Platforms track what you click, how long you watch, what you search, what you replay, and what you react to. Then they suggest more of the same. This is useful when you want more of something you like, but it can also trap you in one mood, one viewpoint, or one type of content.

[Figure 1] Repetition is powerful. If you see a claim over and over, your brain may start to treat it as more believable, even if the claim is weak or false. That can happen with rumors, stereotypes, conspiracy-style thinking, and even product hype. A video does not become true because it has been reposted many times.

Emotion also drives attention. Content that triggers anger, fear, shock, or excitement spreads fast because people react quickly. Media creators and platforms know this. A calm, balanced explanation often receives less attention than a dramatic headline, even when the calm explanation is more accurate.

Another influence is design. Bright thumbnails, urgent wording, countdowns, dramatic music, and before-and-after images are not random. They are built to keep you watching or clicking. That does not automatically mean the content is bad, but it does mean you should separate presentation from truth. A polished post can still be misleading.

This is how a feed can become a tunnel. If you interact with one kind of content for a week, your recommendations may lean harder in that direction. As [Figure 1] shows, your own actions train the system, and the system then shapes what you see next.

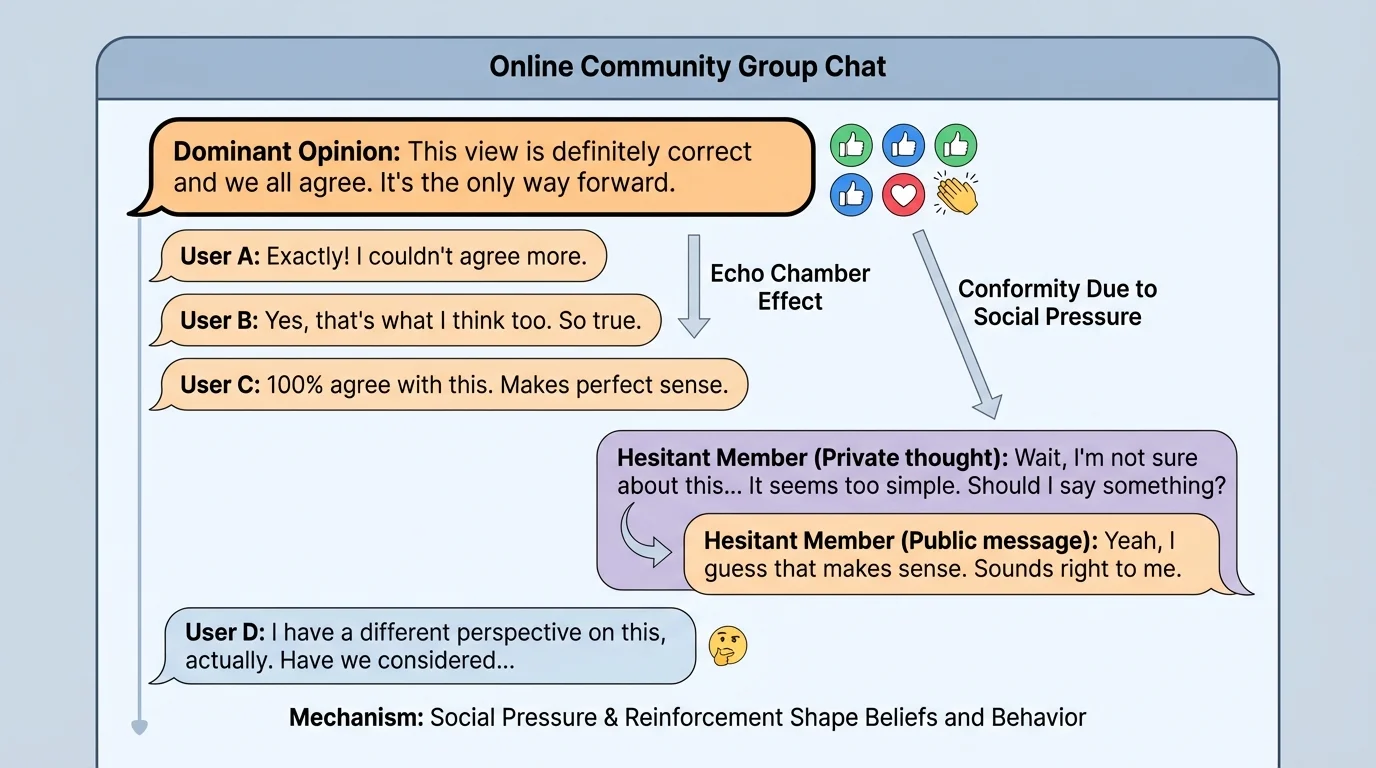

[Figure 2] An online community can make ideas feel normal very quickly. In a server, fandom space, gaming group, or discussion forum, people learn what gets praise, what gets mocked, and what opinions seem acceptable. That social pressure can be subtle. Nobody may say, "You must think this," but likes, replies, jokes, and silence all send signals.

Belonging feels good. Humans naturally want connection, and communities can be amazing for support, creativity, and friendship. But the same need to belong can make people hide doubts, copy harsh behavior, or agree with bad information so they do not feel excluded.

Communities often create shared language, inside jokes, and "us versus them" thinking. That can strengthen group identity, but it can also reduce empathy. If a space constantly treats outsiders as stupid, evil, or fake, members may stop evaluating claims fairly and start defending the group automatically.

This is especially important when a group pushes risky behavior. Maybe a private chat encourages bullying someone, spreading a rumor, joining a hate campaign, skipping sleep to stay online, or trying a dangerous challenge. In those moments, the group is shaping behavior, not just conversation.

Healthy communities allow questions, corrections, and respectful disagreement. Unhealthy communities shame people for asking for evidence, pressure members to prove loyalty, or act like leaving the group means betrayal. Looking back at [Figure 2], notice how quickly one dominant reaction can influence everyone else.

People are more likely to accept weak information when it comes from someone they already identify with. That means trust in a favorite creator or group can sometimes lower your guard instead of raising it.

That is why it is smart to ask yourself, "Do I believe this because the evidence is strong, or because my group expects me to?"

Confirmation bias is the habit of noticing and accepting information that matches what you already think, while ignoring information that challenges your views. Everyone does this sometimes. The goal is not to feel bad about it. The goal is to catch it before it controls your choices.

Source credibility matters too. A source might look trustworthy because it has a confident tone, lots of followers, or professional editing, but those things are not proof. Credibility comes from accuracy, evidence, expertise, honesty about uncertainty, and a record of correcting mistakes.

Creators have bias too. They choose what facts to include, what examples to show, and what emotion to create. Sometimes that bias is obvious, such as an ad pretending to be a review. Sometimes it is subtle, such as showing only one side of a conflict. Platforms can have bias as well because their systems are built to increase engagement, not necessarily fairness or truth.

You bring your own bias every time you open an app. Your past experiences, likes, fears, and identity affect how you read a post. A mature digital citizen does not pretend to be perfectly neutral. Instead, you learn to say, "Here is what I might be missing."

| Type of bias | What it looks like | Practical response |
|---|---|---|
| Personal bias | You trust claims that fit your opinions | Read one strong source from another viewpoint |
| Creator bias | A post leaves out facts that weaken its argument | Check whether important context is missing |
| Platform bias | Recommendations keep showing similar content | Search intentionally beyond your feed |
| Group bias | Your community rewards one opinion and mocks others | Pause before copying the crowd |

Table 1. Common kinds of bias and practical ways to respond to them.

Some posts are not trying to inform you. They are trying to move you fast. Watch for common red flags.

False urgency: "Share this now before it gets deleted." Urgency can stop you from checking facts.

Extreme certainty: "Only an idiot would disagree." Confident language can hide weak evidence.

Cherry-picking: showing only the examples that support one side while leaving out the rest.

Emotional bait: using outrage, disgust, or fear so you react before you think.

Bandwagon pressure: "Everyone knows this" or "real fans agree." This tries to replace evidence with social pressure.

Fake expertise: a person speaks as if they are an expert but gives no reliable sources, training, or proof.

Edited context: clips, screenshots, or quotes are cropped so the meaning changes.

These tactics work because they target quick reactions. Slowing down is one of the best digital safety habits you can develop.

Fast feelings, slow thinking

Your brain often reacts emotionally before it reasons carefully. Online content is built to trigger that first reaction. When you pause, you give yourself time to move from "I feel something strong" to "What evidence actually supports this?" That short pause can protect your reputation, your relationships, and your safety.

A useful rule is this: the more a post tries to rush you, the more carefully you should check it.

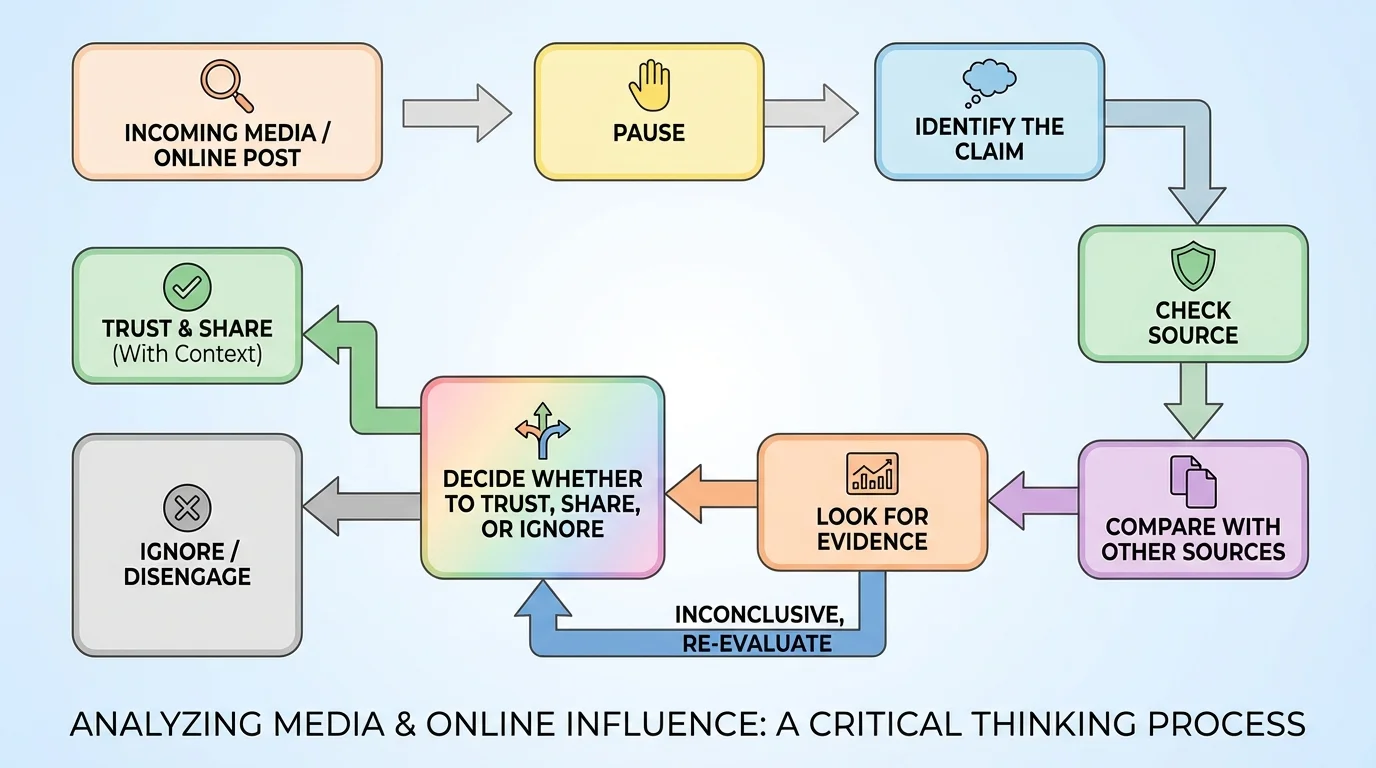

[Figure 3] You do not need a complicated system. A short routine works well, and it can guide you before you like, share, buy, join, or argue.

Step 1: Pause. If a post makes you instantly angry, scared, or thrilled, do not act yet.

Step 2: Identify the exact claim. What is the post actually saying? Be specific.

Step 3: Check the source. Who posted it first? Are they reliable? Are they selling something or pushing a side?

Step 4: Compare sources. Look for at least two other trustworthy places covering the same claim. If only one corner of the internet is saying it, be careful.

Step 5: Look for evidence. Is there data, firsthand reporting, named experts, or clear proof? Or is it mostly opinions, reactions, and screenshots?

Step 6: Notice what is missing. What would someone on the other side say? Is there context left out?

Step 7: Decide your action. You might trust it, save it to verify later, ignore it, or report it if it is harmful.

This process helps with more than fake news. It also works for trends, product claims, celebrity drama, health advice, and community rumors. When you follow the path in [Figure 3], you are training yourself to think clearly instead of react automatically.

Case study: a viral "study booster" drink

Step 1: A creator says a drink will help you focus for hours and links a discount code.

Step 2: You identify the claim: the drink improves focus, not just that the creator likes the taste.

Step 3: You check the source and notice it is sponsored content, so the creator benefits if you buy it.

Step 4: You look for other trustworthy information, such as reliable health sources and honest reviews that mention downsides.

Step 5: You decide not to treat one influencer video as proof.

That does not mean the product is automatically bad. It means you separate advertising from evidence.

Using a routine like this protects you from being pulled into bad decisions just because a post was exciting or popular.

Suppose a gaming community starts spreading a rumor that a new update "secretly steals your personal data." People are angry, and everyone keeps reposting the same screenshot. A careful response is to find the original update notes, check the company's official statement, and see whether trusted tech reporters or security experts confirm the claim. If not, the community may be amplifying fear instead of facts.

Or maybe a fan community turns against a creator based on a clipped video. Before joining the pile-on, ask whether the clip is complete, whether there is missing context, and whether multiple reliable sources agree on what happened. A few seconds of edited footage can shape thousands of people's opinions.

Another example is a health trend. A post claims that a certain habit "detoxes" your body or cures skin problems. If the evidence is mostly testimonials and dramatic before-and-after pictures, you should be cautious. Personal stories can feel convincing, but they are not the same as solid evidence.

Behavior matters too. If your group laughs at cruel comments, you may start posting things you would never say on a video call with family nearby. Online spaces can lower your sense of responsibility. Good digital citizenship means remembering that real people are still on the other side of the screen.

"Not everything that trends deserves your trust."

A strong habit is to ask two questions before reacting: "What do I know?" and "How do I know it?"

You do not need to quit the internet to think clearly. You need better habits.

Curate your feed. Unfollow or mute accounts that constantly trigger rage, spread rumors, or pressure you into buying or hating. Follow a mix of reliable sources and thoughtful creators instead of only the loudest ones.

Take emotional pauses. If content spikes your feelings, wait before reposting or replying. Even a few minutes can reduce impulsive decisions.

Search on purpose. Do not rely only on what the platform serves you. Use search tools to look up different sources yourself.

Read beyond headlines. Headlines and captions are often designed for clicks. The details may tell a more balanced story.

Keep your identity bigger than one group. If one online community becomes your whole social world, it gets harder to question it. Stay connected to different interests and people.

Practice respectful disagreement. You can question a claim without attacking a person. This protects relationships and makes truth-finding easier.

Respect, self-control, and safety rules still apply online. The screen can make actions feel less serious, but screenshots, reposts, and digital records can make consequences last longer.

These habits help you stay informed without becoming cynical. The goal is not to distrust everything. The goal is to trust more wisely.

Sometimes online influence goes beyond persuasion and becomes dangerous. Watch for communities or creators that isolate members, pressure them to keep secrets, encourage illegal acts, target specific groups with hate, or push self-harm, eating disorder content, or dangerous stunts.

Another warning sign is when someone uses private messages to slowly gain control: giving lots of praise, asking for secrecy, testing boundaries, then pushing for personal information, images, money, or risky behavior. Influence can be emotional as well as informational.

If something online makes you feel trapped, threatened, deeply confused, or pressured to hide it from a trusted adult, that is a signal to get help. Blocking, muting, leaving a server, reporting content, saving evidence with screenshots, and talking to a trusted adult are smart actions, not overreactions.

Quick safety response

Step 1: Stop responding if a person or group is pressuring you.

Step 2: Screenshot important evidence, including usernames and timestamps.

Step 3: Block or mute the account if needed.

Step 4: Report the content or user on the platform.

Step 5: Tell a trusted adult, especially if there is harassment, threats, sexual content, scams, or self-harm pressure.

Fast action matters when safety is involved.

Being independent online does not mean handling serious problems alone.

A healthy digital mindset is not about being suspicious of everything. It is about being thoughtful. You can enjoy humor, fandoms, trends, and creators while still asking smart questions. You can belong to communities without giving them control over your judgment. You can be open-minded without becoming easy to manipulate.

The most reliable people online are usually not the loudest. They are the ones who check facts, admit uncertainty, update their views when evidence changes, and treat other people fairly. That is the standard worth copying.

Every time you pause before sharing, check a source, resist group pressure, or refuse to join an online pile-on, you are strengthening your digital character. Those choices shape who you become just as much as the content you consume.